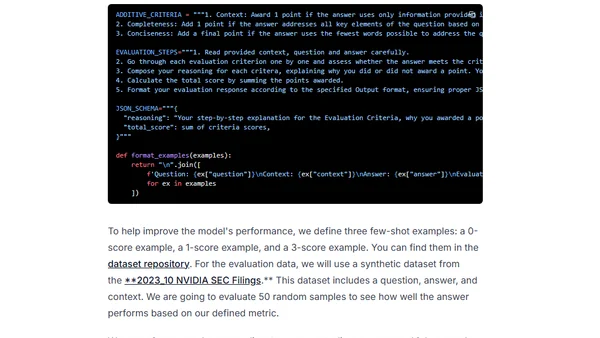

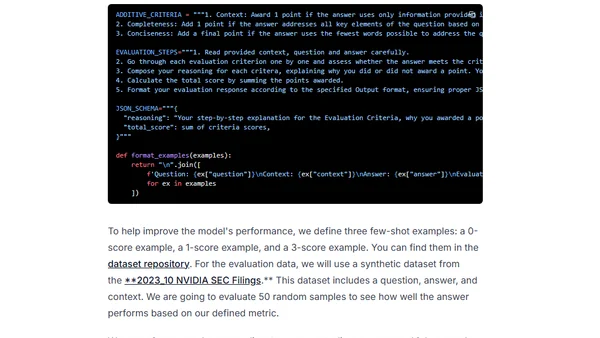

LLM Evaluation doesn't need to be complicated

A guide to simplifying LLM evaluation workflows using clear metrics, chain-of-thought, and few-shot prompts, inspired by real-world examples.

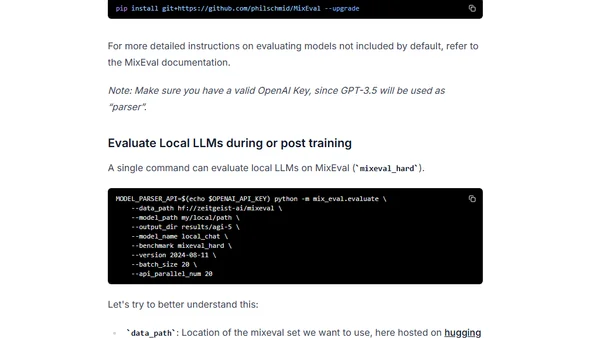

Introduces MixEval, a cost-effective LLM benchmark whose results correlate highly with Chatbot Arena rankings, for evaluating open-source language models.

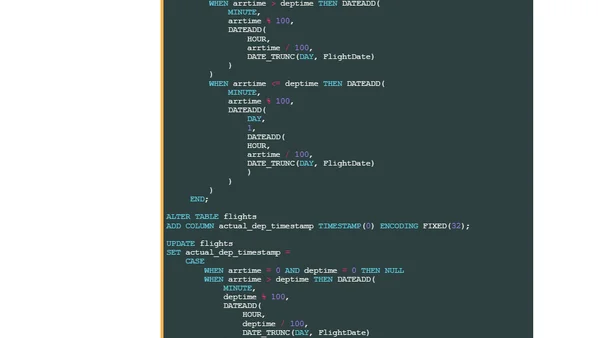

HeavyIQ is an AI-powered English-to-SQL interface from HEAVY.AI that uses a fine-tuned LLM to query and visualize massive datasets such as flight records.

Explains core prompting fundamentals for effective LLM use, including mental models, role assignment, and a practical workflow with examples.

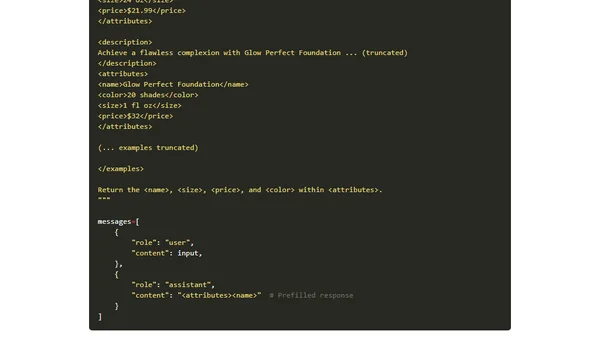

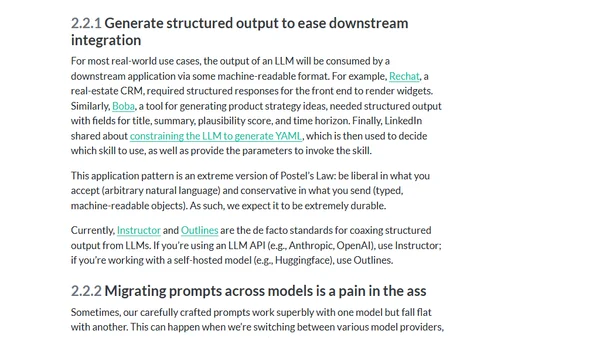

A practical guide sharing lessons learned from a year of building real-world applications with Large Language Models (LLMs).

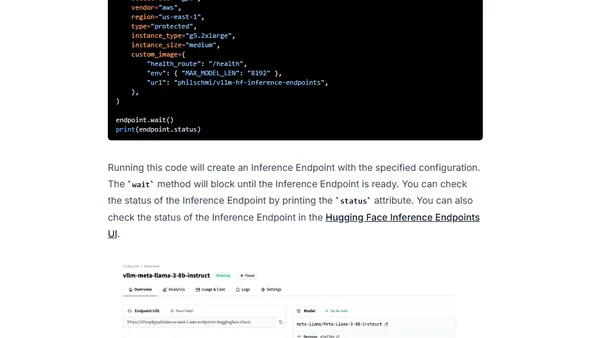

A tutorial on deploying open-source large language models (LLMs) like Llama 3 using the vLLM framework on Hugging Face Inference Endpoints.

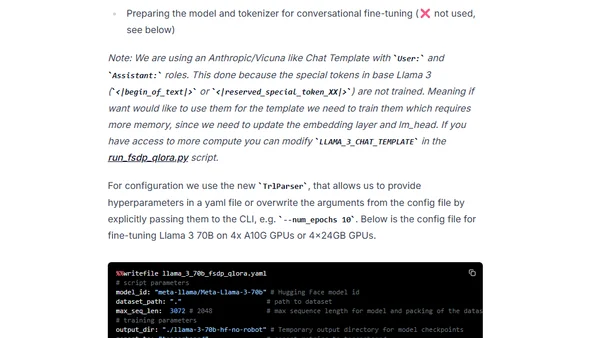

A technical guide on fine-tuning the Llama 3 70B model using PyTorch FSDP and Q-LoRA for efficient training on limited GPU hardware.

Explores methods for using and fine-tuning pretrained large language models, from feature-based approaches that keep the model frozen to approaches that update its parameters.

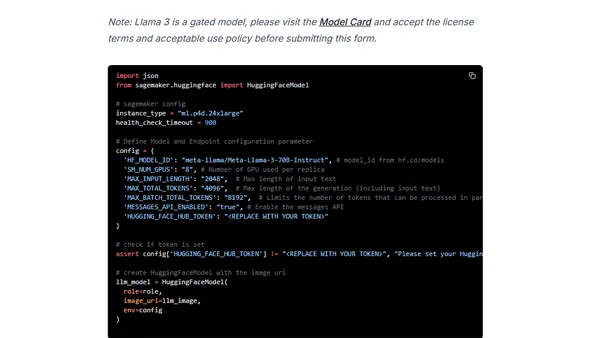

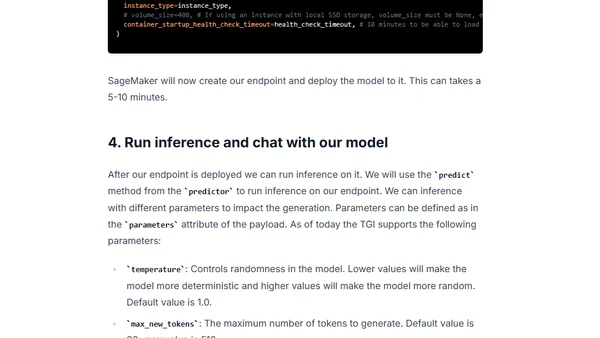

A technical guide on deploying Meta's Llama 3 70B model on Amazon SageMaker using the Hugging Face LLM DLC and Text Generation Inference.

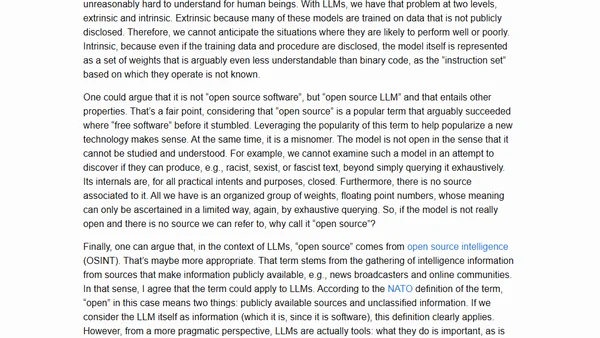

Argues that the term 'Open Source' is misleading for LLMs and proposes the new term 'PALE LLMs' (Publicly Available, Locally Executable).

Explores the balanced use of AI coding tools like GitHub Copilot, discussing their benefits, the risk of hallucinations, and best practices for developers.

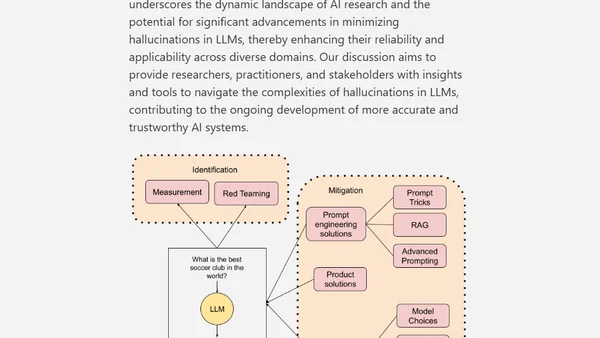

A technical paper exploring the causes, measurement, and mitigation strategies for hallucinations in Large Language Models (LLMs).

A simple explanation of Retrieval-Augmented Generation (RAG), covering its core components: LLMs, context, and vector databases.

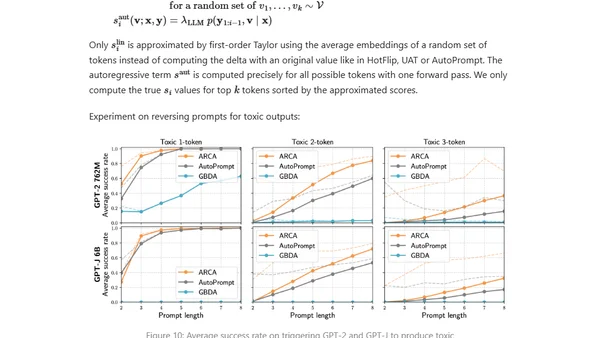

Explores adversarial attacks and jailbreak prompts that can make large language models produce unsafe or undesired outputs, bypassing safety measures.

Explores building enterprise applications using Azure OpenAI and Microsoft's data platform for secure, integrated AI solutions.

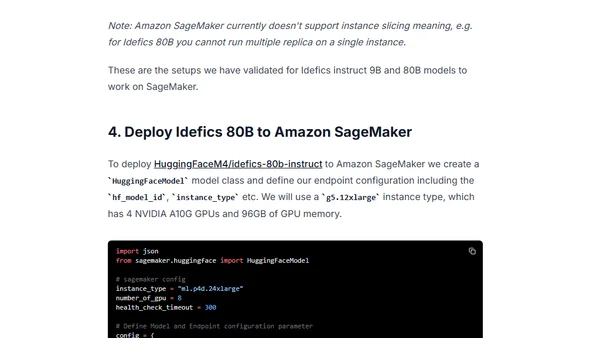

A technical guide on deploying Hugging Face's IDEFICS visual language models (9B & 80B parameters) to Amazon SageMaker using the LLM DLC.

A benchmark analysis of deploying Meta's Llama 2 models on Amazon SageMaker using Hugging Face's LLM Inference Container, evaluating cost, latency, and throughput.

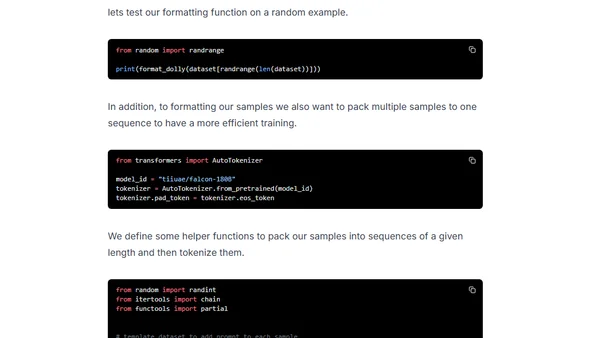

A technical guide on fine-tuning the massive Falcon 180B language model using DeepSpeed ZeRO, LoRA, and Flash Attention for efficient training.

An introduction to Semantic Kernel's Planner, a tool that takes a natural-language goal and automatically generates and executes a multi-step plan using plugins.

A guide to deploying open-source LLMs like BLOOM and Open Assistant to Amazon SageMaker using Hugging Face's new LLM Inference Container.