LLM Evaluation doesn't need to be complicated

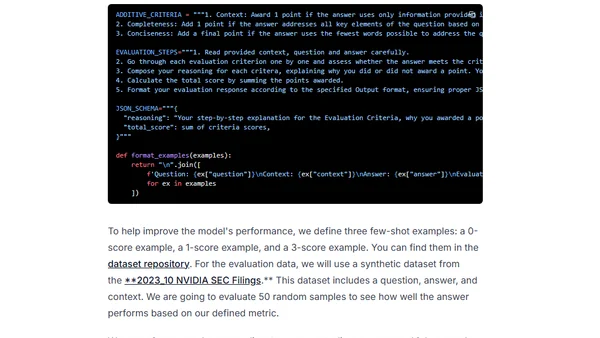

This article argues that evaluating Large Language Models (LLMs) doesn't require complex infrastructure. It outlines a simplified workflow using an LLM as a judge, detailing how to create effective evaluation prompts with clear metrics, additive scoring, chain-of-thought reasoning steps, and few-shot examples, drawing inspiration from Discord's approach and recent research.
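
To make the summarized workflow concrete, here is a minimal sketch of an LLM-as-judge prompt that combines the four ingredients named above: clear metrics, additive scoring, a chain-of-thought step, and a few-shot example. The criteria, the `Score:` output format, and every function name are illustrative assumptions, not taken from the article or from Discord's implementation. The `judge` argument stands in for whatever function sends a prompt to a model and returns its text.

```python
import re
from typing import Callable, Optional

# Hypothetical metrics: each satisfied criterion adds one point (additive scoring).
CRITERIA = [
    "The answer addresses the question that was asked.",
    "The answer contains no factual errors.",
    "The answer is concise and stays on topic.",
]

# Hypothetical few-shot example showing the expected reasoning and output format.
FEW_SHOT = (
    "Question: What is the capital of France?\n"
    "Answer: Paris.\n"
    "Reasoning: Addresses the question (+1), correct (+1), concise (+1).\n"
    "Score: 3\n"
)


def build_judge_prompt(question: str, answer: str) -> str:
    """Assemble the evaluation prompt from rubric, instructions, and example."""
    rubric = "\n".join(f"- Add 1 point if: {c}" for c in CRITERIA)
    return (
        "You are grading an answer. Start from 0 points.\n"
        f"{rubric}\n\n"
        # Chain-of-thought step: ask for reasoning before the final score.
        "Think step by step through each criterion, then finish with a line "
        f"of the form 'Score: <0-{len(CRITERIA)}>'.\n\n"
        f"Example:\n{FEW_SHOT}\n"
        f"Question: {question}\nAnswer: {answer}\nReasoning:"
    )


def parse_score(judge_output: str, max_score: int = len(CRITERIA)) -> Optional[int]:
    """Return the last 'Score: N' in the output, or None if missing or out of range."""
    matches = re.findall(r"Score:\s*(\d+)", judge_output)
    if not matches:
        return None
    score = int(matches[-1])
    return score if 0 <= score <= max_score else None


def evaluate(question: str, answer: str, judge: Callable[[str], str]) -> Optional[int]:
    """Run one evaluation; `judge` is any callable mapping a prompt to model text."""
    return parse_score(judge(build_judge_prompt(question, answer)))
```

Because `judge` is a plain callable, the same code can be exercised with a stub in tests and pointed at a real model client later. Returning `None` for unparseable output keeps malformed judge responses from being silently counted as a score.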
