Deploy open LLMs with vLLM on Hugging Face Inference Endpoints

A tutorial on deploying open-source large language models (LLMs) like Llama 3 using the vLLM framework on Hugging Face Inference Endpoints.

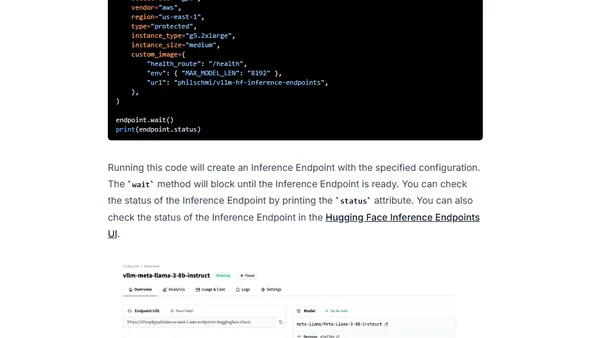

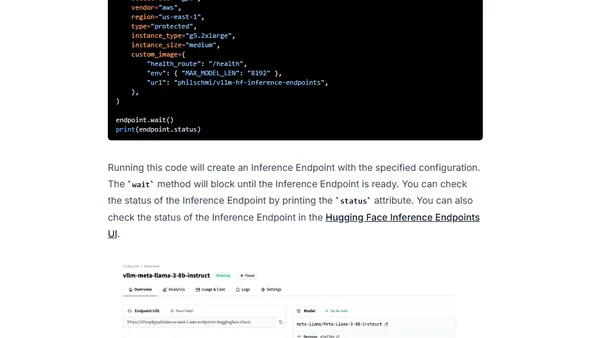

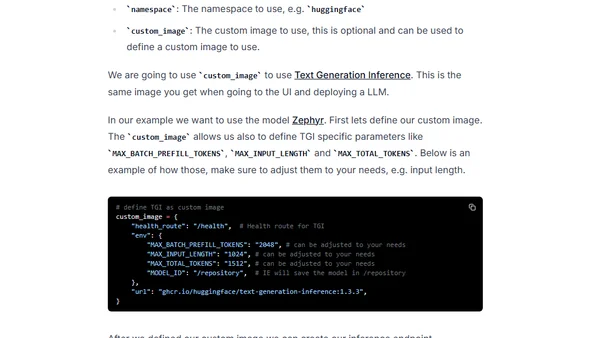

Learn to programmatically manage Hugging Face Inference Endpoints using the `huggingface_hub` Python library for automated model deployment.

A guide to deploying open-source large language models (LLMs) like Falcon using Hugging Face's managed Inference Endpoints service.

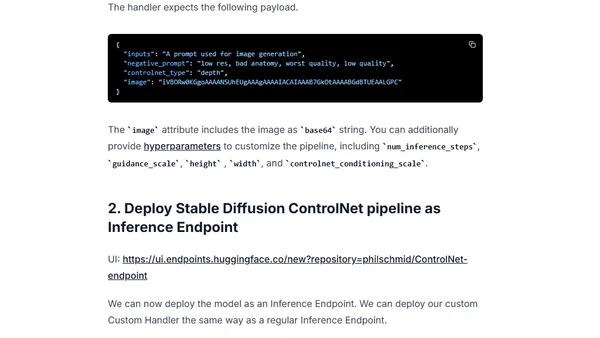

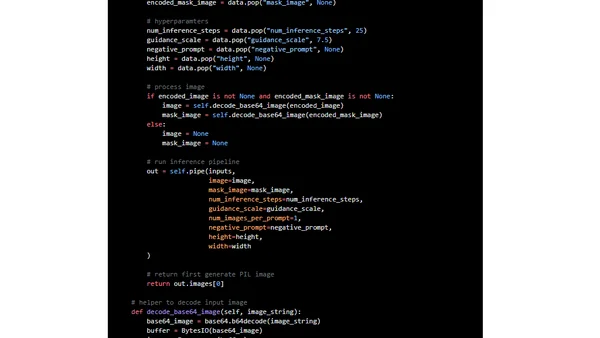

Learn how to deploy and use ControlNet for controlled text-to-image generation via Hugging Face Inference Endpoints as a scalable API.

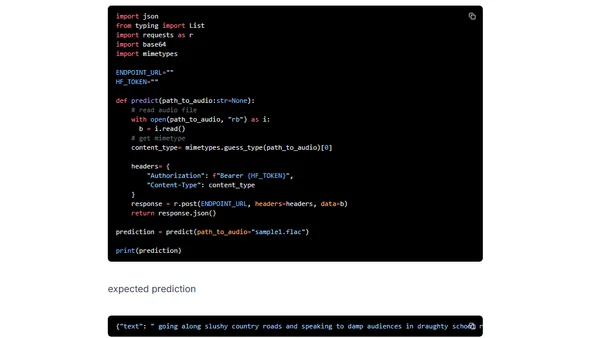

A tutorial on deploying OpenAI's Whisper speech recognition model using Hugging Face Inference Endpoints for scalable transcription APIs.

A tutorial on using Hugging Face Inference Endpoints to deploy and run Stable Diffusion 2 for AI image inpainting via a custom API.

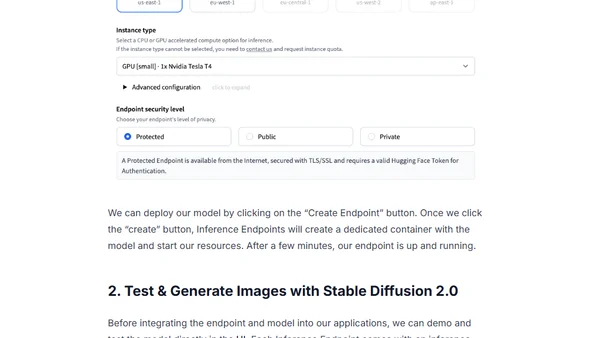

A tutorial on deploying Stable Diffusion 2.0 for image generation using Hugging Face Inference Endpoints and integrating it via an API.

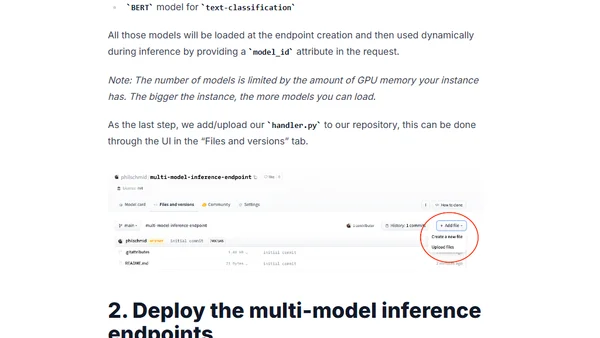

Learn how to deploy multiple ML models on a single GPU using Hugging Face Inference Endpoints for scalable, cost-effective inference.

A tutorial on deploying the T5 11B language model for inference using Hugging Face Inference Endpoints on a budget.

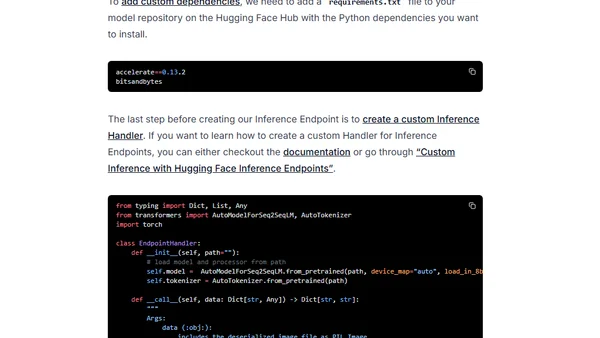

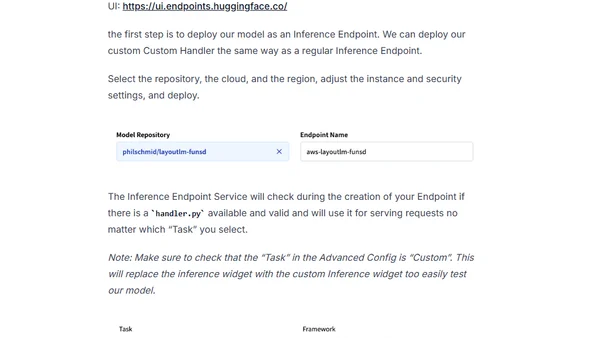

A tutorial on deploying the LayoutLM document understanding model using Hugging Face Inference Endpoints for production API integration.

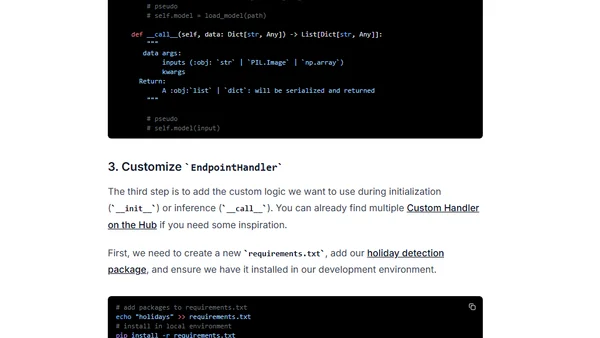

A tutorial on creating custom inference handlers for Hugging Face Inference Endpoints to add business logic and dependencies.