Deploy open LLMs with vLLM on Hugging Face Inference Endpoints

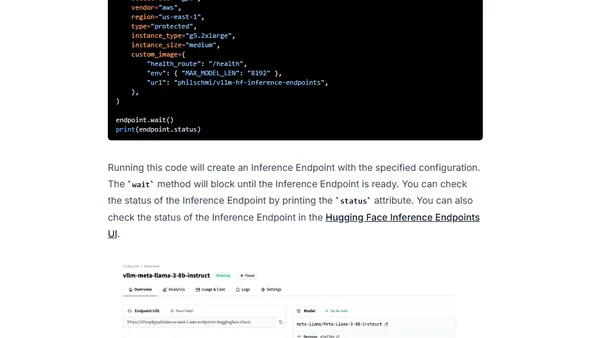

This technical guide explains how to deploy open large language models (LLMs) with the vLLM framework on Hugging Face Inference Endpoints. It covers configuring a custom container, setting environment variables such as MAX_MODEL_LEN, and programmatically creating an endpoint for the Llama 3 8B Instruct model with the huggingface_hub Python library.
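The programmatic step can be sketched roughly as follows. This is a minimal sketch, not the guide's own code: it relies on `create_inference_endpoint` and its `custom_image` parameter from huggingface_hub, while the container URL is a hypothetical placeholder, and the health route, vendor, region, instance type and size, and the default MAX_MODEL_LEN value are all assumptions to replace with the values from the original guide and your own account.

```python
from typing import Any, Dict

# Hub repository for the Llama 3 8B Instruct model (gated: access must be granted).
MODEL_ID = "meta-llama/Meta-Llama-3-8B-Instruct"

# Hypothetical placeholder: substitute the vLLM container image you actually use.
VLLM_IMAGE_URL = "example.registry/vllm-inference-endpoints:latest"


def build_endpoint_config(
    name: str = "llama-3-8b-vllm",
    max_model_len: int = 8192,
) -> Dict[str, Any]:
    """Build keyword arguments for huggingface_hub.create_inference_endpoint.

    Hardware, vendor, and region values are assumptions for illustration.
    """
    return {
        "name": name,
        "repository": MODEL_ID,
        "framework": "pytorch",
        "task": "text-generation",
        "accelerator": "gpu",
        "vendor": "aws",                 # assumed cloud vendor
        "region": "us-east-1",           # assumed region
        "type": "protected",             # requires a token to call the endpoint
        "instance_size": "x1",           # assumed instance size
        "instance_type": "nvidia-a10g",  # assumed GPU type
        "custom_image": {
            "health_route": "/health",   # assumed health-check path
            "url": VLLM_IMAGE_URL,
            # Environment variables are passed to the container as strings.
            "env": {"MAX_MODEL_LEN": str(max_model_len)},
        },
    }


def deploy(config: Dict[str, Any]):
    """Create the endpoint and block until it is running (incurs cost)."""
    # Imported lazily so the config can be built without the library installed.
    from huggingface_hub import create_inference_endpoint

    endpoint = create_inference_endpoint(**config)
    endpoint.wait()  # polls until the endpoint reports a running state
    return endpoint
```

Calling `deploy(build_endpoint_config())` needs a logged-in Hugging Face account with billing enabled and access to the gated model; once it returns, `endpoint.url` holds the address to send requests to.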
