Adversarial Attacks on LLMs

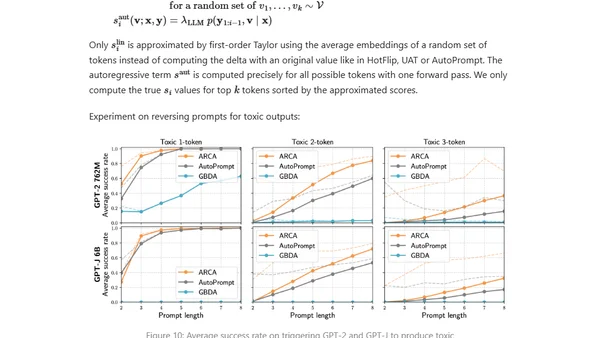

This technical article examines adversarial attacks on large language models (LLMs) such as ChatGPT. It discusses how, despite safety alignment efforts, specially crafted prompts can jailbreak a model into generating harmful content. The post covers threat models, the differences between white-box and black-box attacks, and how adversarial methods for text generation contrast with those used in traditional image classification.
