Deploy Llama 3 on Amazon SageMaker

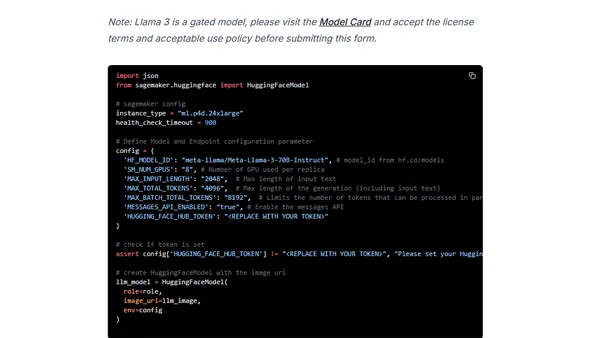

This tutorial provides a step-by-step guide to deploying the Meta-Llama-3-70B-Instruct model on Amazon SageMaker. It covers setting up the development environment, hardware requirements, deployment with the Hugging Face LLM DLC (powered by Text Generation Inference, TGI), running inference, benchmarking, and cleanup.
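As a rough illustration of the deployment step, the sketch below builds the environment variables for the TGI container and passes them to the SageMaker Python SDK. It is a minimal sketch under stated assumptions, not the tutorial's exact code: the helper names `build_tgi_env` and `deploy_llama3`, the instance type `ml.p4d.24xlarge`, the token limits, and the 900-second startup timeout are all illustrative choices. Deploying also requires the `sagemaker` package, AWS credentials, an IAM role with SageMaker permissions, and a Hugging Face token with access to the gated model.

```python
import json


def build_tgi_env(model_id, num_gpus, hf_token,
                  max_input_length=2048, max_total_tokens=4096):
    """Build the environment variables for the TGI container.

    The default limits are assumed values for illustration; tune them
    for the instance type and workload.
    """
    if max_input_length >= max_total_tokens:
        raise ValueError("max_input_length must be smaller than max_total_tokens")
    # Container environment variables must be strings, hence json.dumps.
    return {
        "HF_MODEL_ID": model_id,
        "SM_NUM_GPUS": json.dumps(num_gpus),
        "MAX_INPUT_LENGTH": json.dumps(max_input_length),
        "MAX_TOTAL_TOKENS": json.dumps(max_total_tokens),
        "HUGGING_FACE_HUB_TOKEN": hf_token,
    }


def deploy_llama3(role_arn, env, instance_type="ml.p4d.24xlarge"):
    """Create a SageMaker endpoint backed by the Hugging Face LLM DLC.

    The instance type and timeout are assumptions; a 70B model needs a
    multi-GPU instance and a generous startup timeout to load weights.
    """
    # Imported lazily so the config helper works without the SDK installed.
    from sagemaker.huggingface import HuggingFaceModel, get_huggingface_llm_image_uri

    image_uri = get_huggingface_llm_image_uri("huggingface")
    model = HuggingFaceModel(role=role_arn, image_uri=image_uri, env=env)
    return model.deploy(
        initial_instance_count=1,
        instance_type=instance_type,
        container_startup_health_check_timeout=900,
    )


if __name__ == "__main__":
    env = build_tgi_env(
        model_id="meta-llama/Meta-Llama-3-70B-Instruct",
        num_gpus=8,
        hf_token="<YOUR_HF_TOKEN>",  # placeholder, not a real token
    )
    print(json.dumps({k: v for k, v in env.items() if k != "HUGGING_FACE_HUB_TOKEN"}, indent=2))
```

With a returned predictor, inference would typically look like `predictor.predict({"inputs": "...", "parameters": {"max_new_tokens": 128}})`, and cleanup like `predictor.delete_model()` followed by `predictor.delete_endpoint()`. Endpoints on multi-GPU instances are billed while running, so the cleanup step matters.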
