Measuring and Mitigating Hallucinations in Large Language Models: A Multifaceted Approach

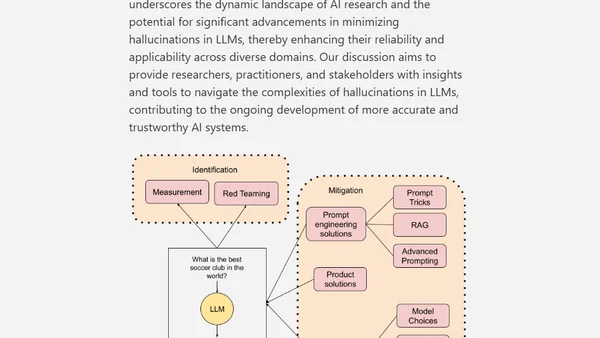

This academic paper analyzes hallucinations in Large Language Models, examining their origins and manifestations. It surveys mitigation strategies, including advanced prompting, model selection, configuration adjustments, and alignment techniques, with the aim of making LLMs more reliable for researchers and practitioners.
