Tips for LLM Pretraining and Evaluating Reward Models

Analysis of recent AI research papers on continued pretraining of LLMs and on reward modeling for RLHF, with insights into updating models with new data and aligning them with human preferences.
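
Continued pretraining is often discussed in terms of two levers. The first is re-warming and then re-decaying the learning rate when training resumes on new data. The second is replaying a fraction of the original pretraining data so the model forgets less of what it already knows. The sketch below is a minimal, framework-free illustration of both levers under stated assumptions. The function names, the linear warmup followed by cosine decay, and the example values (a 25% replay fraction, a 3e-4 peak learning rate) are hypothetical choices for illustration. They are not the settings reported in the papers discussed here.

```python
import math
import random


def rewarm_cosine_lr(step, total_steps, warmup_steps, max_lr, min_lr):
    """Re-warm linearly from min_lr to max_lr, then cosine-decay back to min_lr."""
    if step < warmup_steps:
        # Linear re-warming phase: the LR climbs from min_lr toward max_lr.
        return min_lr + (max_lr - min_lr) * step / warmup_steps
    # Cosine re-decay phase: progress runs from 0.0 to 1.0, clamped after total_steps.
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (max_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


def sample_batch(new_data, old_data, batch_size, replay_fraction, rng):
    """Mix new-domain examples with replayed examples from the original pretraining data."""
    n_replay = round(batch_size * replay_fraction)
    # Sample without replacement within each source, then shuffle so sources interleave.
    batch = rng.sample(old_data, n_replay) + rng.sample(new_data, batch_size - n_replay)
    rng.shuffle(batch)
    return batch


# Hypothetical settings: 1,000 steps with 100 re-warming steps.
for step in (0, 50, 100, 550, 1000):
    print(step, f"{rewarm_cosine_lr(step, 1000, 100, 3e-4, 3e-5):.2e}")

rng = random.Random(0)
new_docs = [f"new-{i}" for i in range(100)]  # placeholder new-domain documents
old_docs = [f"old-{i}" for i in range(100)]  # placeholder original pretraining documents
batch = sample_batch(new_docs, old_docs, batch_size=20, replay_fraction=0.25, rng=rng)
print(sum(doc.startswith("old-") for doc in batch))  # 5
```

In a real training loop, `rewarm_cosine_lr` would set the optimizer's learning rate at each step and `sample_batch` would draw tokenized documents. The replay fraction trades adaptation to the new domain against forgetting of the old one.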

Discusses strategies for continual pretraining of LLMs and evaluating reward models for RLHF, based on recent research papers.
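
A common way to evaluate an RLHF reward model uses held-out preference pairs. For each prompt, you check whether the model scores the human-preferred (chosen) response higher than the rejected one. You can also report the pairwise Bradley-Terry loss, -log σ(r_chosen - r_rejected), on the same pairs. The sketch below computes both metrics under stated assumptions. The `toy_reward` scorer is a hypothetical stand-in for a trained reward model that simply counts words shared with the prompt, and the four pairs are made-up examples. This illustrates the metrics only; it is not the exact evaluation protocol from the papers discussed here.

```python
import math


def toy_reward(prompt, response):
    """Hypothetical stand-in for a trained reward model: counts words shared with the prompt."""
    return len(set(prompt.lower().split()) & set(response.lower().split()))


def neg_log_sigmoid(x):
    """-log(sigmoid(x)), computed in a form that does not overflow for large |x|."""
    if x >= 0:
        return math.log1p(math.exp(-x))
    return -x + math.log1p(math.exp(x))


def pairwise_accuracy(reward_fn, pairs):
    """Fraction of (prompt, chosen, rejected) pairs where chosen scores strictly higher."""
    if not pairs:
        raise ValueError("need at least one preference pair")
    correct = sum(reward_fn(p, c) > reward_fn(p, r) for p, c, r in pairs)
    return correct / len(pairs)


def bradley_terry_loss(reward_fn, pairs):
    """Mean -log(sigmoid(r_chosen - r_rejected)) over the preference pairs."""
    if not pairs:
        raise ValueError("need at least one preference pair")
    margins = [reward_fn(p, c) - reward_fn(p, r) for p, c, r in pairs]
    return sum(neg_log_sigmoid(m) for m in margins) / len(margins)


# Made-up held-out preference pairs: (prompt, chosen, rejected).
pairs = [
    ("what is rlhf", "rlhf is reinforcement learning from human feedback", "no idea"),
    ("explain reward models", "reward models score responses", "i like cats"),
    ("name a color", "blue", "a color name"),
    ("is the sky blue", "yes the sky is blue", "maybe"),
]

print(pairwise_accuracy(toy_reward, pairs))  # 0.75
print(round(bradley_terry_loss(toy_reward, pairs), 3))
```

The third pair shows the kind of failure this evaluation is meant to catch. A scorer that rewards surface overlap with the prompt prefers the unhelpful "a color name" over the correct "blue".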

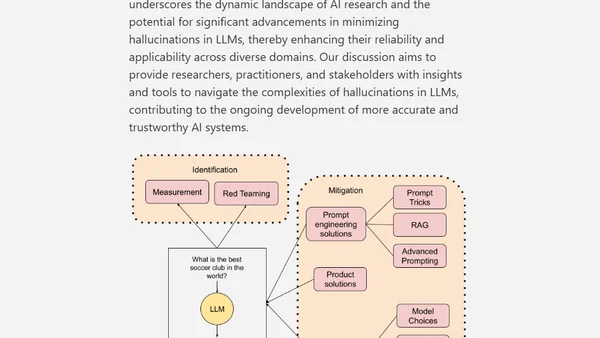
