Instruction Pretraining LLMs

Explores recent research on instruction finetuning for LLMs, including a cost-effective method for generating synthetic training data from scratch.

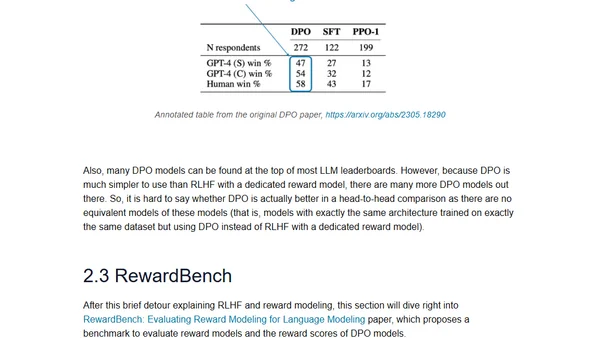
