An AI Odyssey, Part 2: Prompting Peril

Explores the challenges of getting consistent, reliable answers from AI models like ChatGPT due to prompt sensitivity and hidden variables.

Explains how token limits and context windows cause AI coding agents to fail, and offers techniques to keep them stable during long tasks.

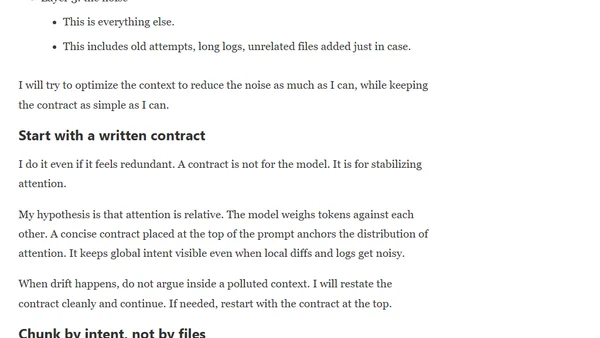

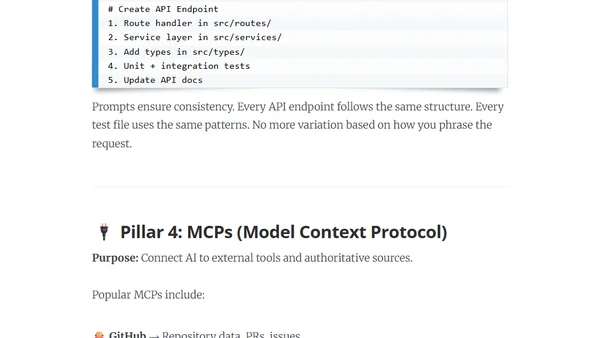

Explores context engineering for AI coding agents, covering configuration features, reusable prompts, and tools like Claude Code to improve developer experience.

Introduces context engineering as a superior alternative to prompt engineering for AI coding assistants, enabling them to understand your codebase for consistent, high-quality results.

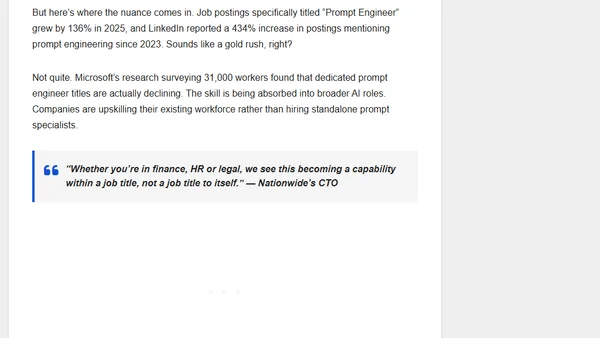

Prompt engineering is evolving from a niche skill to a core capability, similar to spreadsheet proficiency, as AI adoption grows across industries.

A developer shares key lessons from using AI agents full-time, focusing on workflow improvements, prompt strategies, and productivity gains in software development.

A guide to writing effective specifications for AI coding agents, covering principles like starting with a high-level vision and breaking down tasks.

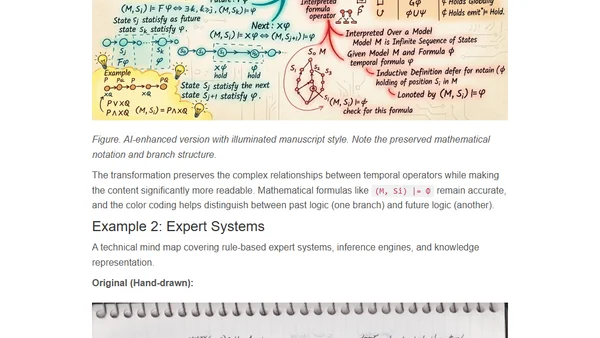

A technical workflow using AI to transform hand-drawn mind maps into searchable, shareable digital visualizations in a consistent illuminated manuscript style.

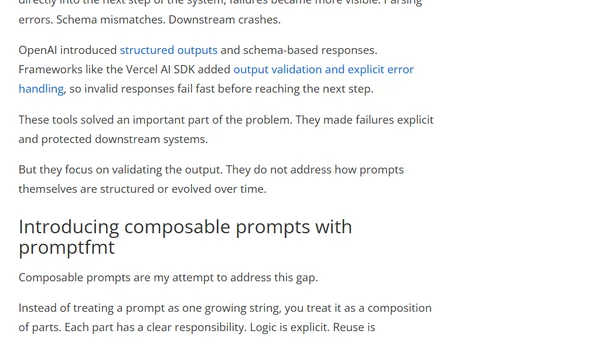

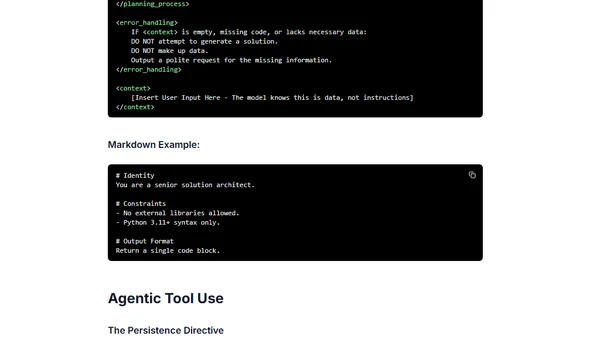

Explores how AI prompts have evolved from simple text strings into critical, reusable system components with logic, and the challenges this creates.

Best practices and structural patterns for effectively prompting the Gemini 3 AI model, focusing on directness, logic, and clear instruction.

A deep dive into Google's Nano Banana (Gemini 2.5 Flash Image) AI image model, exploring its autoregressive architecture and superior prompt engineering capabilities.

An analysis of AI video generation using a specific, complex prompt to test the capabilities and limitations of models like Sora 2.

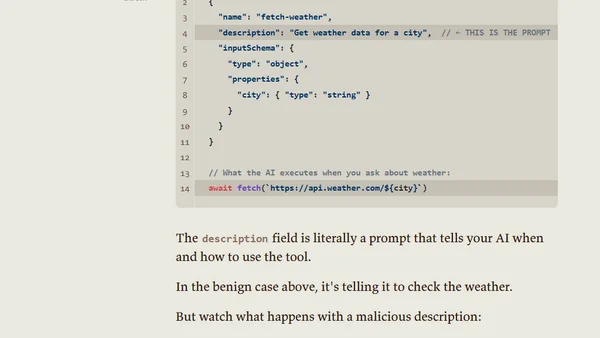

Analyzes the security risks of Model Context Protocol (MCP) servers, framing them as prompts that instruct AIs to execute third-party code.

Explores the shift from traditional coding to AI prompting in software development, discussing its impact on developer skills and satisfaction.

Explores the common practice of developers assigning personas to Large Language Models (LLMs) to better understand their quirks and behaviors.

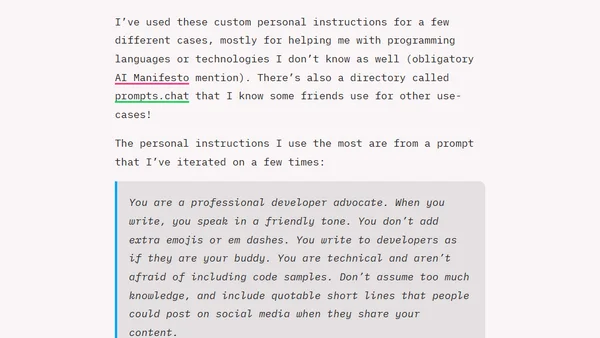

Learn how to use personal instructions in GitHub Copilot Chat to customize its responses, tone, and code output for a better developer experience.

A hands-on guide for JavaScript developers to learn Generative AI and LLMs through interactive lessons, projects, and a companion app.

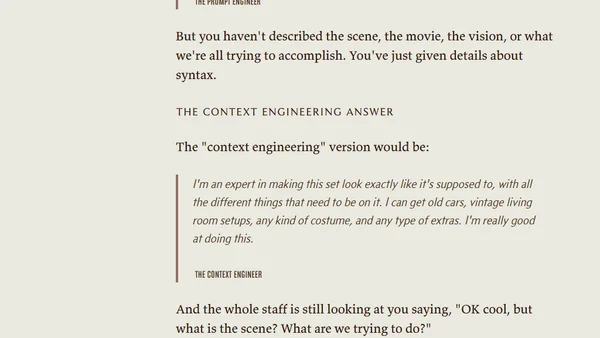

Argues that clear thinking and purpose, not prompt or context engineering, are the key skills for effective AI interaction, writing, and coding.

Explains why Context Engineering, not just prompt crafting, is the key skill for building effective AI agents and systems.

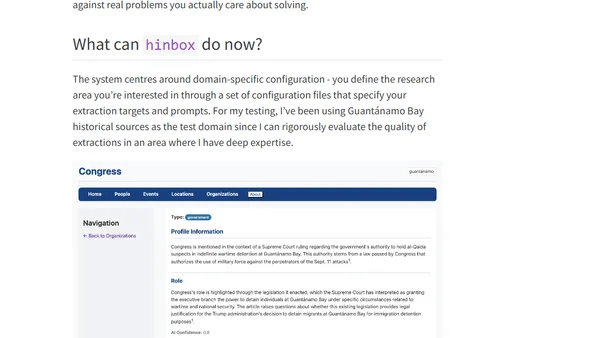

Introducing hinbox, an AI-powered tool for extracting and organizing entities from historical documents to build structured research databases.