Context gathering for AI: a tradeoff guide

A guide comparing strategies for providing context to AI models in workflows, analyzing tradeoffs in cost, latency, and observability.

Explains how token limits and context windows cause AI coding agents to fail, and offers techniques to keep them stable during long tasks.
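
A common way to keep an agent inside its context window is to drop the oldest conversation turns once a token budget is exceeded. The sketch below illustrates that idea and is not necessarily one of the article's techniques. `Message`, `count_tokens`, and `fit_to_budget` are hypothetical names. Counting whitespace-separated words instead of real tokens is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def count_tokens(text: str) -> int:
    # Assumption: one token per whitespace-separated word.
    # Real tokenizers (e.g. BPE) usually produce more tokens than words.
    return len(text.split())


def fit_to_budget(system: Message, history: list[Message], budget: int) -> list[Message]:
    """Keep the system message, then add the newest messages that still fit."""
    used = count_tokens(system.text)
    if used > budget:
        raise ValueError("system message alone exceeds the budget")
    kept: list[Message] = []
    for msg in reversed(history):  # walk from newest to oldest
        cost = count_tokens(msg.text)
        if used + cost > budget:
            break  # stop at the first message that no longer fits
        kept.append(msg)
        used += cost
    return [system] + list(reversed(kept))  # restore chronological order


system = Message("system", "You are a coding agent")
history = [
    Message("user", "read the config file please"),
    Message("assistant", "done it has three sections"),
    Message("user", "now fix the failing test"),
]
trimmed = fit_to_budget(system, history, budget=15)
print([m.text for m in trimmed])
```

With a 15-word budget, the oldest user message is dropped. The system prompt and the two most recent turns survive. Always keeping the system message protects the agent's instructions, at the cost of losing early task details. Summarizing dropped turns is a common alternative.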

Presents context engineering as a better alternative to prompt engineering for AI coding assistants: giving the assistant an understanding of your codebase yields more consistent, higher-quality results.

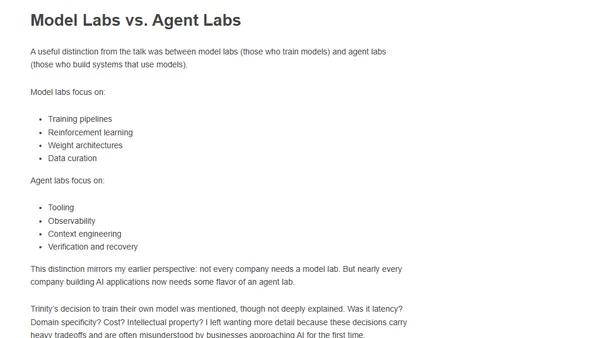

A developer reflects on AI agent architectures, context management, and the industry's overemphasis on model development at the expense of building applications.

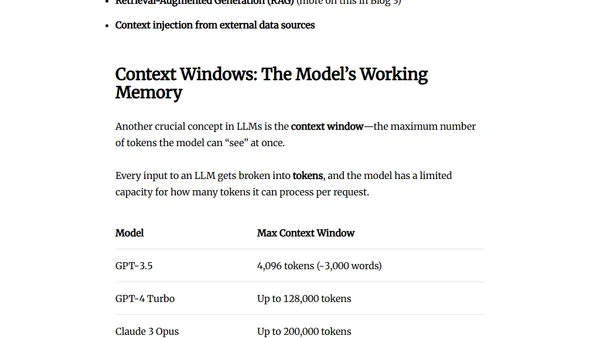

Explains how LLMs convert words into numerical embeddings, use vector spaces to capture meaning, and manage their context windows.
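
To make "vector spaces for semantic understanding" concrete, here is a minimal sketch. Words whose embeddings point in similar directions get a high cosine similarity. The three-dimensional vectors are made-up values for illustration. Real models learn vectors with hundreds or thousands of dimensions. `EMBEDDINGS` and `most_similar` are hypothetical names.

```python
import math

# Made-up 3-dimensional "embeddings" for illustration only.
EMBEDDINGS = {
    "cat": [0.9, 0.1, 0.0],
    "kitten": [0.85, 0.15, 0.05],
    "car": [0.1, 0.9, 0.2],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(word: str) -> str:
    """Return the other word whose vector points in the closest direction."""
    query = EMBEDDINGS[word]
    others = (w for w in EMBEDDINGS if w != word)
    return max(others, key=lambda w: cosine_similarity(query, EMBEDDINGS[w]))


print(most_similar("cat"))  # kitten
```

Here "cat" and "kitten" score about 0.996, while "cat" and "car" score about 0.21. The spelling overlap is irrelevant. Only the direction of the vectors matters.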

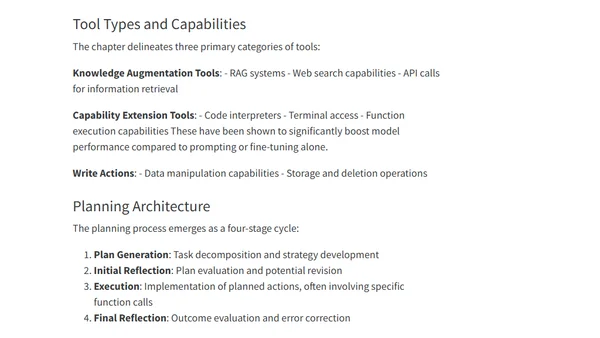

An analysis of Chapter 6 of Chip Huyen's book 'AI Engineering', focusing on RAG systems and AI agents: their architectures, their costs, and how the two relate.
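
This is a minimal sketch of the retrieve-then-generate shape of a RAG system. It ranks documents against the query, then prepends the top matches to the prompt sent to the model. Scoring by shared words is a deliberate simplification. Production systems typically use embedding similarity or a ranking function such as BM25. `retrieve` and `build_prompt` are hypothetical names, and the model call itself is omitted.

```python
def tokenize(text: str) -> set[str]:
    # Lowercase words with trailing punctuation stripped.
    return {w.strip(".,?!").lower() for w in text.split()}


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query."""
    q = tokenize(query)
    # sorted() is stable even with reverse=True, so ties keep document order.
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Prepend the retrieved passages to the user's question."""
    lines = "\n".join(f"- {c}" for c in context)
    return f"Context:\n{lines}\n\nQuestion: {query}"


docs = [
    "The billing service retries failed payments three times.",
    "Our frontend is written in TypeScript.",
    "Payments are processed by the billing service nightly.",
]
query = "How does the billing service handle failed payments?"
top = retrieve(query, docs)
print(build_prompt(query, top))
```

Only the two billing-related passages reach the prompt. This is where RAG saves cost: the model sees a small, relevant slice instead of the whole corpus.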