Java with Generative AI and LLMs

Explores the integration of Java with Generative AI and Large Language Models (LLMs) for building innovative applications like AI chatbots.

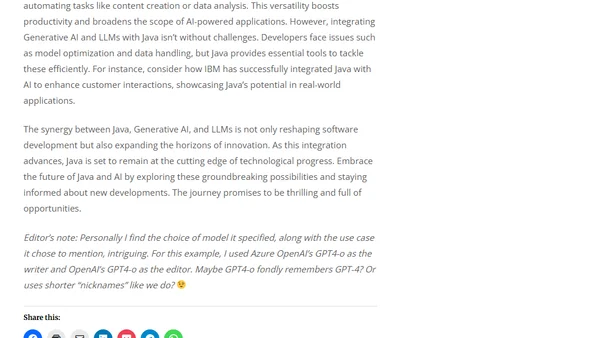

Explores three key methods to enhance LLM performance: fine-tuning, prompt engineering, and RAG, detailing their use cases and trade-offs.

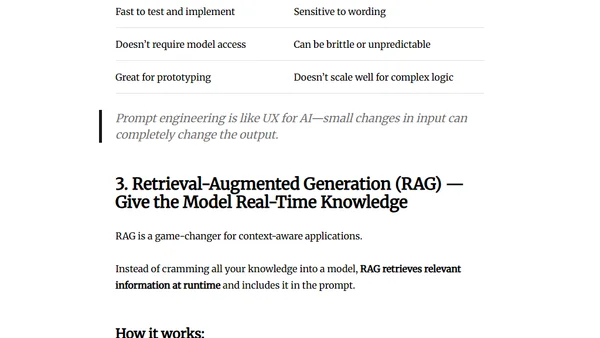

Explains how LLMs work by converting words to numerical embeddings, using vector spaces for semantic understanding, and managing context windows.

Explores the evolution of AI from symbolic systems to modern Large Language Models (LLMs), detailing their capabilities and limitations.

An explanation of the Model Context Protocol (MCP), an open standard for connecting LLMs to data and tools, and why it's important for AI development.

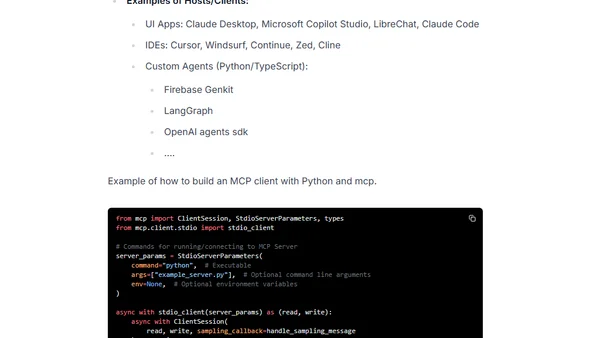

An overview of the Model Context Protocol (MCP), an open standard for connecting AI applications to external tools and data sources.

A guide to building a production-ready, vendor-neutral AI agent using IBM watsonx.ai, MatrixHub, and MCP Gateway, focusing on decoupled architecture.

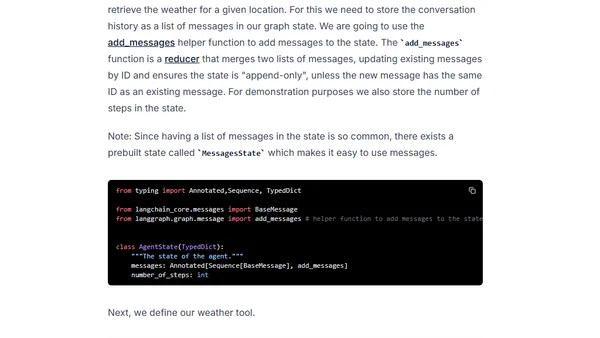

A tutorial on building a ReAct AI agent from scratch using Google's Gemini 2.5 Pro/Flash and the LangGraph framework for complex reasoning and tool use.

Explores the ethics of LLM training data and proposes a technical method to poison AI crawlers using nofollow links.

Uses an LLM to label Hacker News titles, then trains a Ridge regression model for personalized article ranking based on user preferences.

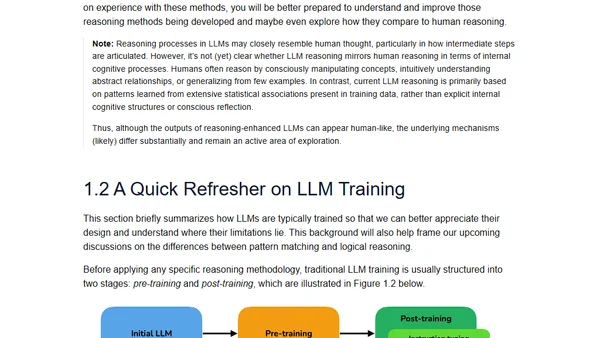

An introduction to reasoning in Large Language Models, covering key concepts like chain-of-thought and methods to improve LLM reasoning abilities.

Explains a technique using AI-generated summaries of SQL queries to improve the accuracy of text-to-SQL systems with LLMs.

Summary of a panel discussion at NVIDIA GTC 2025 on insights and lessons learned from building real-world LLM-powered applications.

Explores how large language models (LLMs) are transforming industrial recommendation systems and search, covering hybrid architectures, data generation, and unified frameworks.

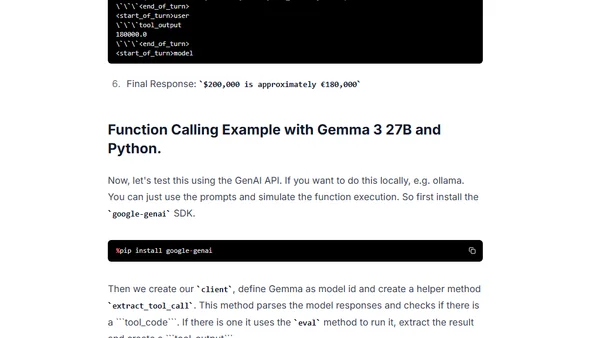

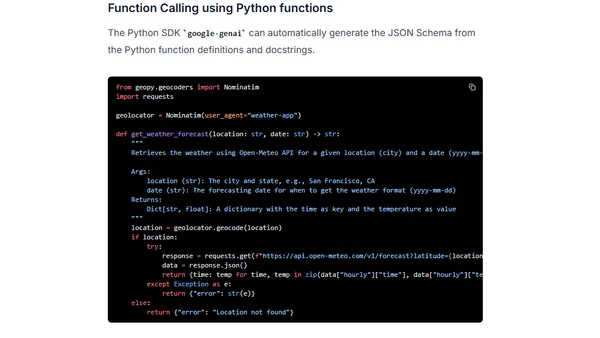

A tutorial on implementing function calling with Google's Gemma 3 27B LLM, showing how to connect it to external tools and APIs.

A clear explanation of the attention mechanism in Large Language Models, focusing on how words derive meaning from context using vector embeddings.

Argues, using biological examples of superior sample efficiency and planning, that AI can improve beyond current transformer models.

Explores the concept of 'generality' in AI models, using examples of ML failures and LLM inconsistencies to question how we assess their capabilities.

A practical guide to implementing function calling with Google's Gemini 2.0 Flash model, enabling LLMs to interact with external tools and APIs.

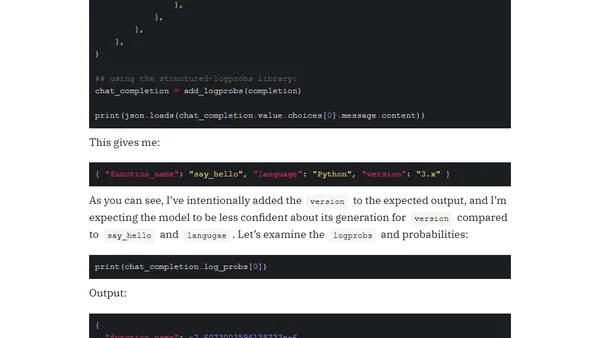

Explains how to extract logprobs from OpenAI's structured JSON outputs using the structured-logprobs Python library for better LLM confidence insights.