Headroom for AI development

Argues that AI can improve beyond current transformer models by examining biological examples of superior sample efficiency and planning.

John Langford writes deeply analytical essays on machine learning theory, AI research, and the limits of current architectures. His work explores sample efficiency, representation, long-term memory, and how future AI systems might move beyond today’s transformer-based models.

9 articles from this blog

Argues that AI can improve beyond current transformer models by examining biological examples of superior sample efficiency and planning.

A critical analysis of GPT-4's capabilities, questioning the 'miracle' narrative and exploring the technical foundations behind its success.

Highlights ICML 2021 invited talks on applying machine learning to scientific domains like drug discovery, climate science, poverty alleviation, and neuroscience.

An interview with AI researcher Joelle Pineau discussing her work in reinforcement learning, its applications, and advice for newcomers to the field.

Analyzes the fallout from Timnit Gebru's firing from Google and debates appropriate community responses in the AI research field.

Analyzes three experiments on the ICML 2020 peer-review process, focusing on resubmission bias, discussion effects, and reviewer recruiting.

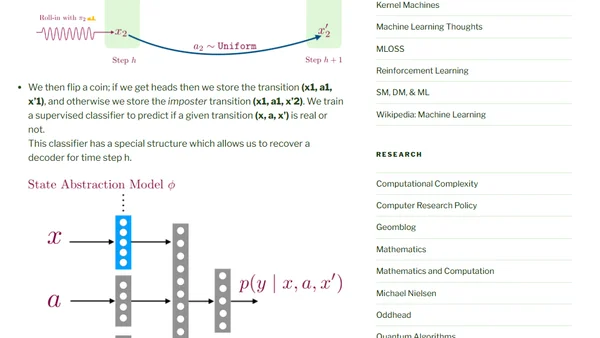

Introduces HOMER, a new reinforcement learning algorithm that solves key problems like global exploration and decoding latent dynamics with provable guarantees.

Analyzes the privacy and effectiveness issues of digital contact tracing, comparing location-based and proximity-based approaches.

Analyzes the potential impact of the COVID-19 pandemic on major machine learning conferences, discussing outbreak scenarios and contingency plans.