A Journey from AI to LLMs and MCP - 3 - Boosting LLM Performance — Fine-Tuning, Prompt Engineering, and RAG

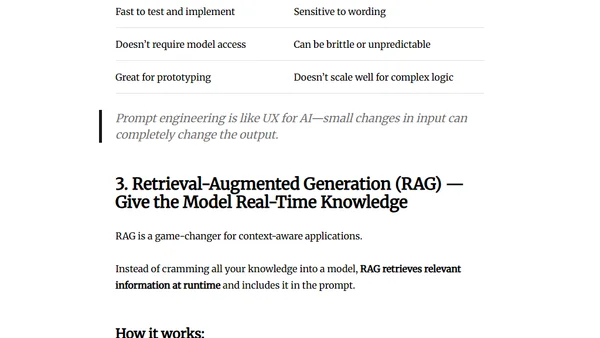

This technical article compares three primary techniques for improving Large Language Model (LLM) performance: fine-tuning, prompt engineering, and Retrieval-Augmented Generation (RAG). It explains how each method works, its ideal use cases, and the associated trade-offs, helping developers choose the right enhancement strategy for their AI and software engineering projects.
