The Claude Code Drawbacks

A developer shares critical drawbacks of using Claude Code for AI-assisted programming, focusing on hidden issues like problematic test generation and maintenance challenges.

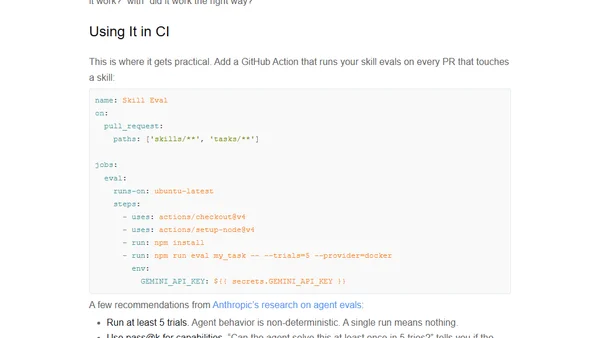

Introduces Skill Eval, a TypeScript framework for testing and benchmarking AI coding agent skills to ensure reliability and correct behavior.

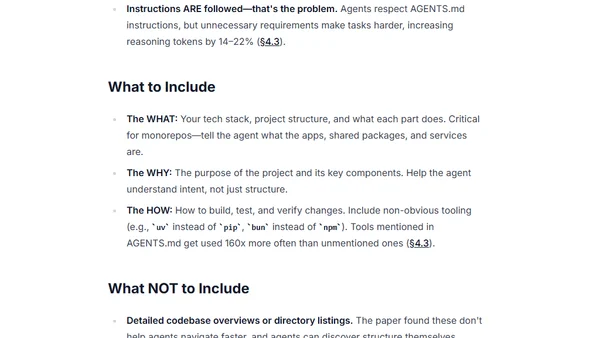

A guide to writing effective AGENTS.md files for AI coding agents, based on research data and best practices.

A developer shares how using Claude Code enabled them to build 17 diverse side projects, including TUIs, games, and tools, in just two months.

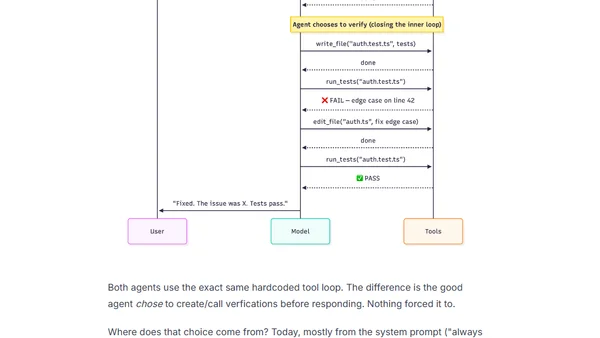

Explains the difference between an AI agent's inner loop (verifying work within a task) and outer loop (learning across tasks).

Compares Star Schema and Snowflake Schema data models, explaining their structures, trade-offs, and when to use each for optimal data warehousing.

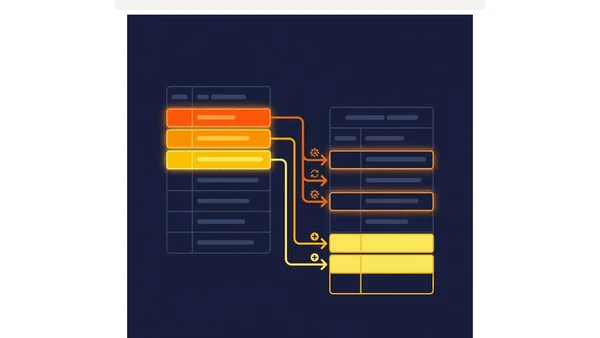

Explores how data modeling principles adapt for modern lakehouse architectures using open formats like Apache Iceberg and the Medallion pattern.

Explains dimensional modeling for analytics, covering facts, dimensions, grains, and table design for query performance.

Explains the three levels of data modeling (conceptual, logical, physical) and their importance in database design.

A comprehensive guide to data modeling, explaining its meaning, three abstraction levels, techniques, and importance for modern data systems.

A guide to designing reliable, fault-tolerant data pipelines with architectural principles like idempotency, observability, and DAG-based workflows.

A guide to the core principles and systems thinking required for data engineering, beyond just learning specific tools.

Explains how to safely evolve data schemas using API-like discipline to prevent breaking downstream systems like dashboards and ML pipelines.

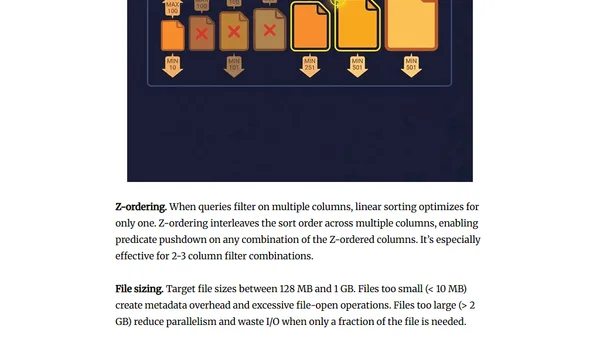

Explains data partitioning and organization strategies to drastically improve query performance in analytical databases.

A guide to choosing between batch and streaming data processing models based on actual freshness requirements and cost.

Explains idempotent data pipelines, patterns like partition overwrite and MERGE, and how to prevent duplicate data during retries.

Argues that data quality must be enforced at the pipeline's ingestion point, not patched in dashboards, to ensure consistent, reliable data.

Explains the importance of pipeline observability for data health, covering metrics, logs, and lineage to detect issues beyond simple execution monitoring.

A practical, tool-agnostic checklist of essential best practices for designing, building, and maintaining reliable data engineering pipelines.

Explains the importance of automated testing for data pipelines, covering schema validation, data quality checks, and regression testing.