Practical Guide to Evaluating and Testing Agent Skills

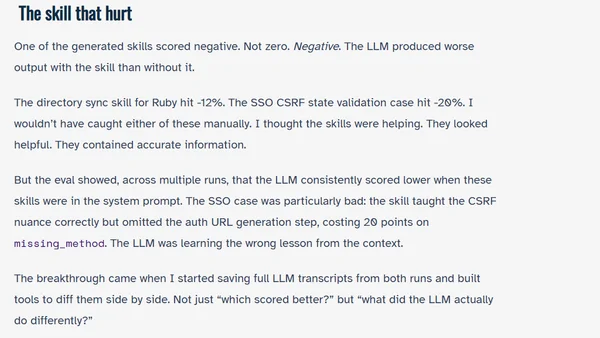

A guide to systematically evaluating and testing AI agent skills, covering success criteria, building an evaluation harness, and improving skill performance.

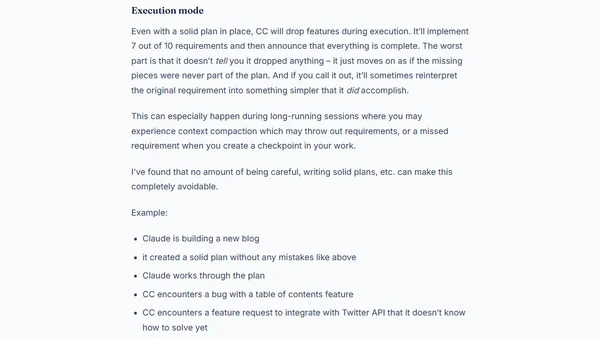

A developer shares critical drawbacks of using Claude Code for AI-assisted programming, focusing on hidden issues like problematic test generation and maintenance challenges.

A developer details the process of building evaluation systems for two AI-powered developer tools to measure their real-world effectiveness.

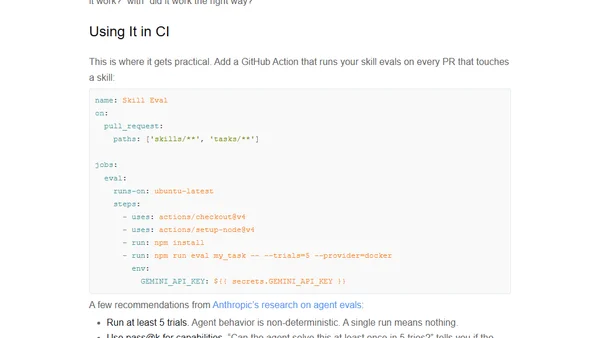

Introduces Skill Eval, a TypeScript framework for testing and benchmarking AI coding agent skills to ensure reliability and correct behavior.

Explains the importance of automated testing for data pipelines, covering schema validation, data quality checks, and regression testing.

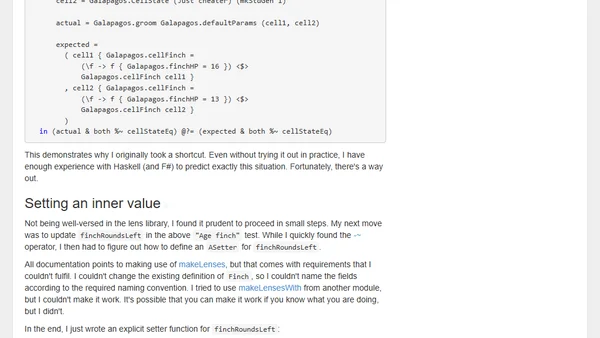

Explores using lenses in Haskell to simplify test assertions for nested data structures, improving test readability and precision.

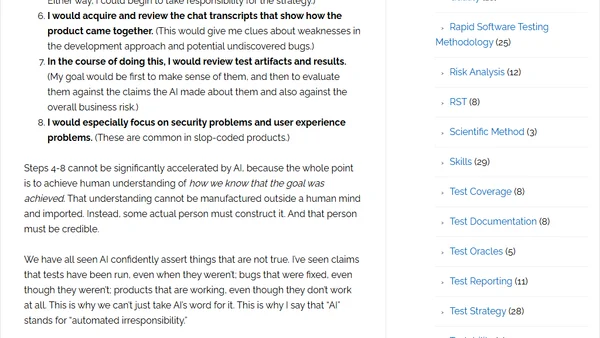

A critical analysis of the '10x productivity' claims in AI-assisted software development, questioning quality and oversight.

A developer explains the practical benefits of implementing a --dry-run option in a reporting application for safe testing and validation.

Tips for using AI coding agents to generate high-quality Python tests, leveraging existing patterns and tools like pytest.

Tips for using AI coding agents to generate high-quality Python tests, focusing on leveraging existing test suites and patterns.

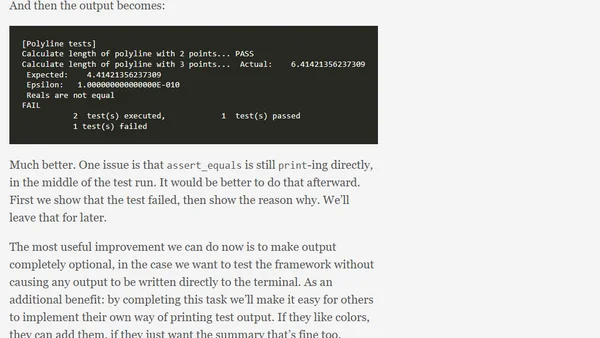

A technical guide on implementing centralized test result printing and progress reporting in a Fortran testing framework.

![Don't Trip[wire] Yourself: Testing Error Recovery in Zig](https://alldevblogs.blob.core.windows.net/thumbs/article-6021fe649cc1-full-f3db1d76.webp)

Introducing Tripwire, a Zig library for injecting failures to test error handling and recovery paths, ensuring robust error cleanup.

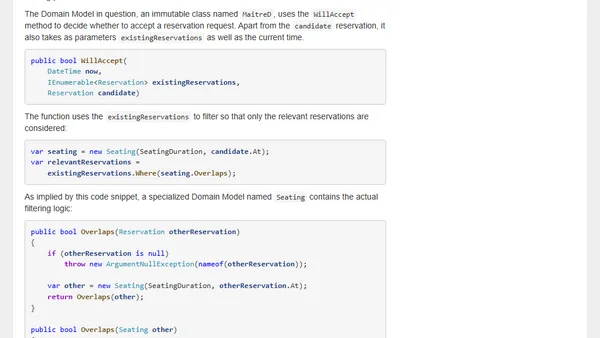

Explores strategies for implementing and testing complex data filtering logic, balancing correctness and performance between in-memory and database queries.

Argues that software engineers must prove their code works through manual and automated testing, rather than relying solely on AI tools and code reviews.

A developer explains their migration from Jest to Vitest, citing ESM support, TypeScript compatibility, and performance improvements.

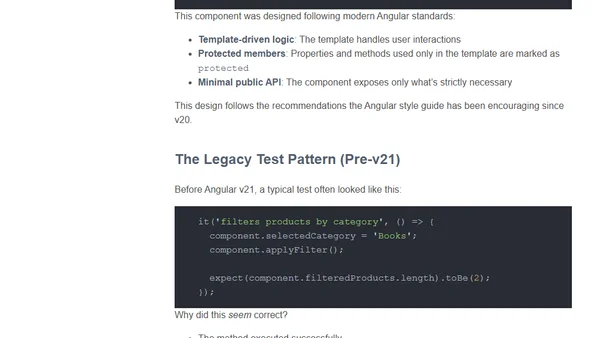

With Angular v21's switch to Vitest, test coverage now includes HTML templates, forcing developers to rethink component testing strategies.

A look at JustHTML, a new Python HTML parser library, and how it was built using AI-assisted programming and 'vibe engineering'.

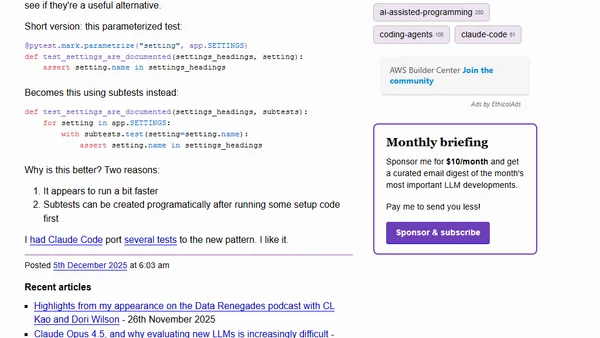

Explores the new subtests feature in pytest 9.0.0, comparing it to parametrized tests for performance and flexibility.

Armin Ronacher discusses challenges in AI agent design, including abstraction issues, testing difficulties, and API synchronization problems.

Using data-* attributes to expose internal state for stable, declarative testing of web components, avoiding brittle shadow DOM selectors.