In defence of correctness

An article arguing for the importance of correctness in software, especially in critical systems like reporting and ETL, and discussing when it is essential.

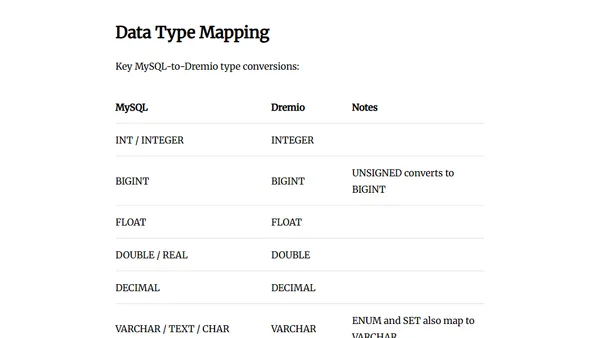

Guide on connecting MySQL to Dremio Cloud for federated analytics, eliminating ETL pipelines and improving query performance.

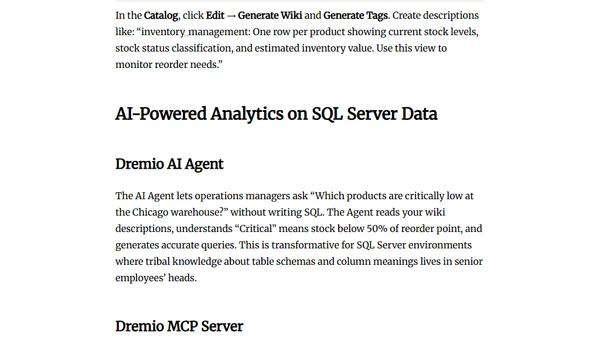

Guide on connecting Microsoft SQL Server to Dremio Cloud for federated analytics, avoiding ETL and reducing license costs.

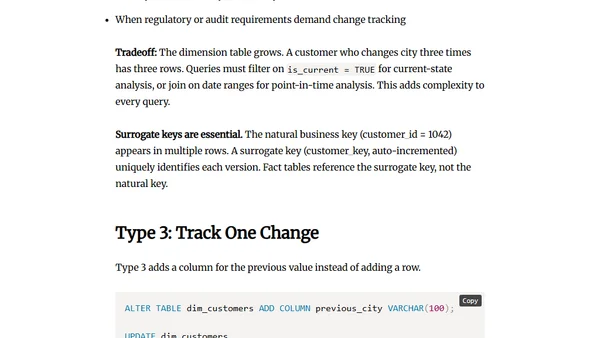

Explains Slowly Changing Dimensions (SCD) types 1-3 for managing data history in data warehouses, with practical examples.

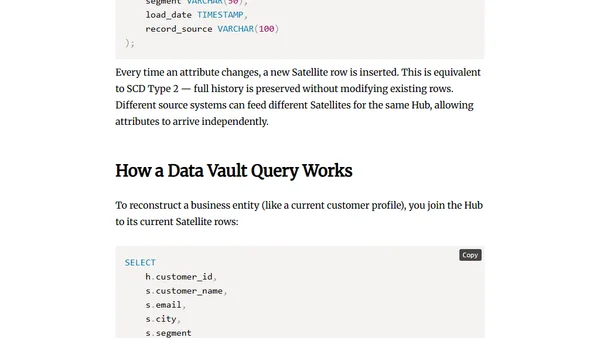

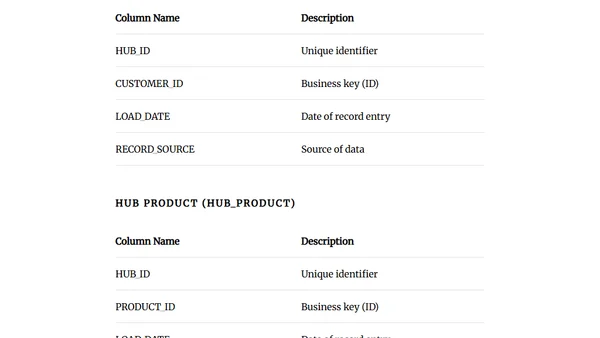

Explains Data Vault data modeling, its core components (Hubs, Links, Satellites), and the problems it solves for complex, evolving data sources.

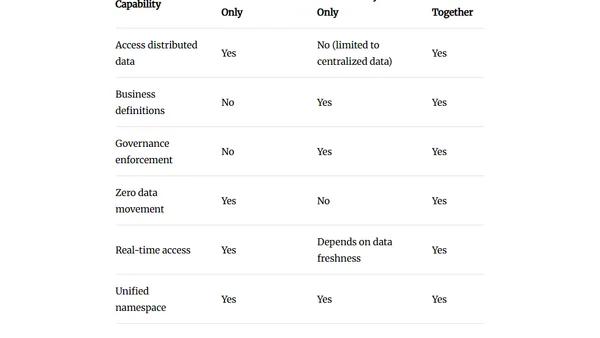

Explains how data virtualization and a semantic layer enable querying distributed data without copying, reducing costs and improving freshness.

Explains idempotent data pipelines, patterns like partition overwrite and MERGE, and how to prevent duplicate data during retries.

A practical, tool-agnostic checklist of essential best practices for designing, building, and maintaining reliable data engineering pipelines.

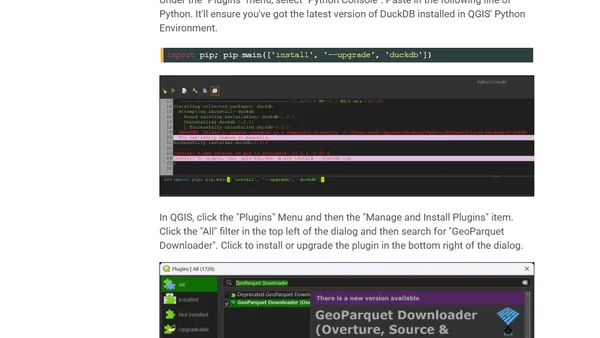

Exploring the Layercake project's analysis-ready OpenStreetMap data in Parquet format, including setup and performance on a high-end workstation.

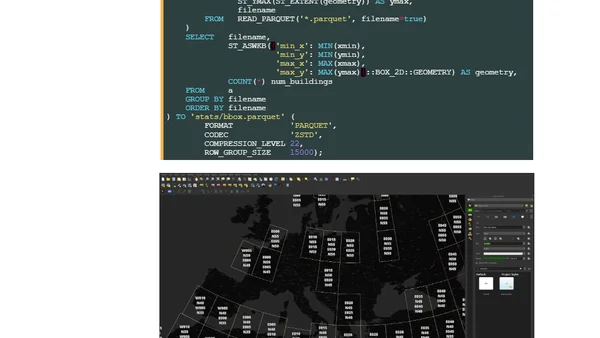

Analysis of a new global building dataset (2.75B structures), detailing the data processing, technical setup, and tools used for exploration.

Explains core data engineering concepts, comparing ETL and ELT data pipeline strategies and their use cases.

Explores workflow orchestration in data engineering, covering DAGs, tools, and best practices for managing complex data pipelines.

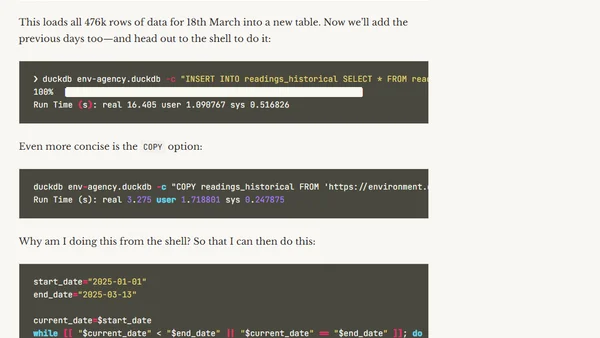

A guide to building a data pipeline using DuckDB, covering data ingestion, transformation, and analytics with real-world environmental data.

A data engineer reflects on their 2-year career journey at the City of Boston, sharing lessons learned in data warehousing, ETL, and civic tech.

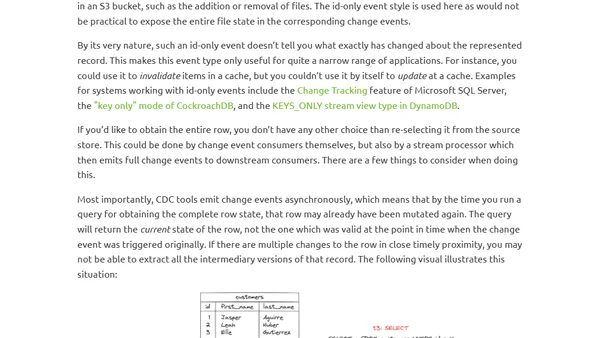

Explores a taxonomy of data change events in CDC, detailing Full, Delta, and Id-only events and their use cases.

Explores three types of data change events in Change Data Capture (CDC): Full, Delta, and Id-only events, detailing their structure and use cases.

An introduction to Data Vault modeling, a flexible data warehouse design method using Hubs, Links, and Satellites for scalable data integration.

A weekly tech learning digest covering Microsoft Fabric, AI topics, computer vision, Azure AI Document Intelligence, embeddings, and vector search.

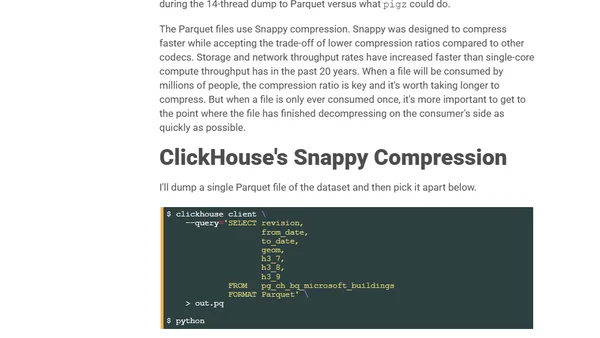

A technical guide on using ClickHouse to export PostgreSQL data to Parquet format for faster loading into Google BigQuery.

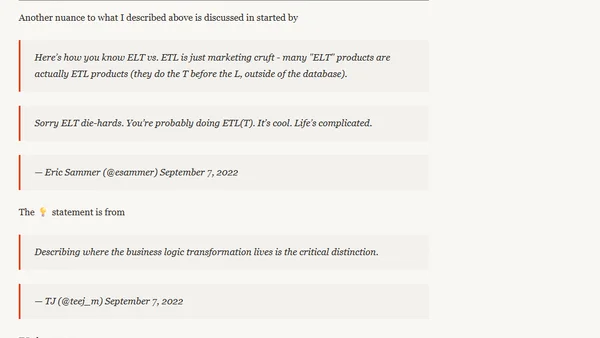

Explains the evolution from ETL to ELT in data engineering, clarifying the role of modern tools like dbt in the transformation process.