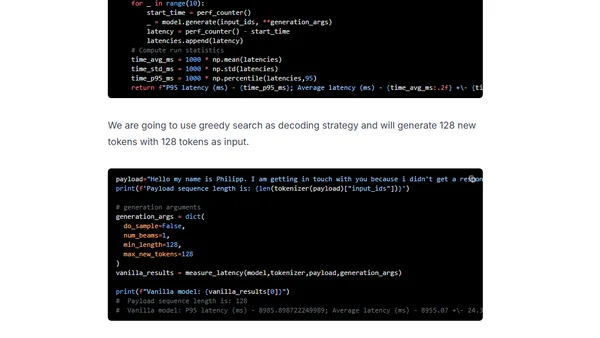

Deploy T5 11B for inference for less than $500

A tutorial on deploying the T5 11B language model for inference using Hugging Face Inference Endpoints on a budget.

Philipp Schmid is a Staff Engineer at Google DeepMind, building AI Developer Experience and DevRel initiatives. He specializes in LLMs, RLHF, and making advanced AI accessible to developers worldwide.

189 articles from this blog

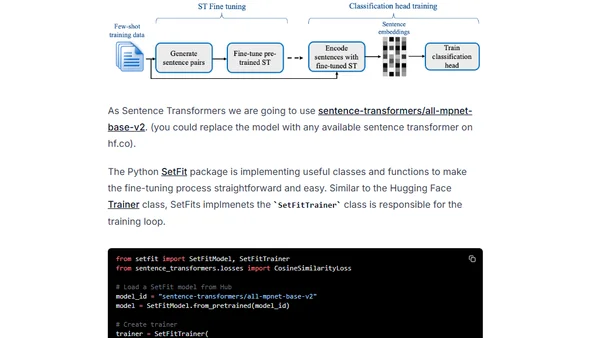

Learn how SetFit, a new approach from Intel Labs and Hugging Face, outperforms GPT-3 for text classification with minimal labeled data.

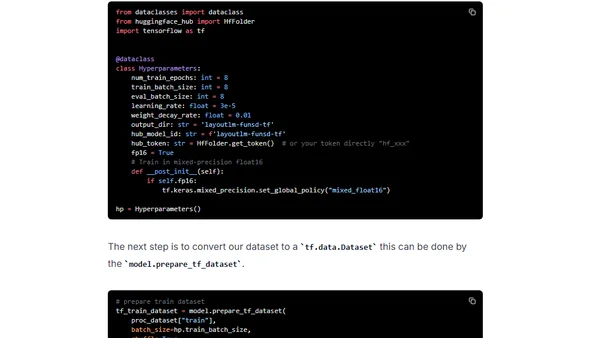

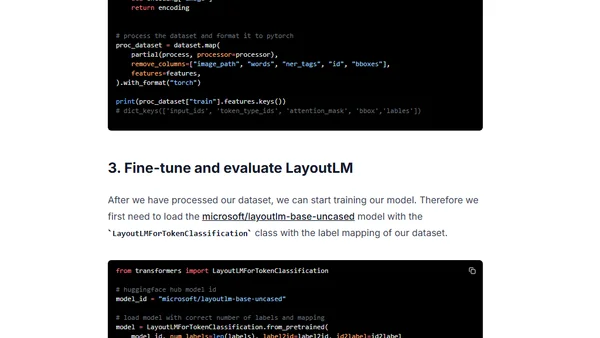

A tutorial on fine-tuning Microsoft's LayoutLM model for document understanding using TensorFlow, Keras, and the FUNSD dataset.

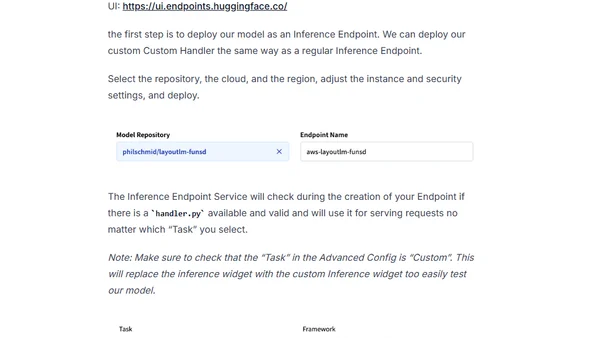

A tutorial on deploying the LayoutLM document understanding model using Hugging Face Inference Endpoints for production API integration.

A tutorial on fine-tuning Microsoft's LayoutLM model for document understanding and information extraction using the Hugging Face Transformers library.

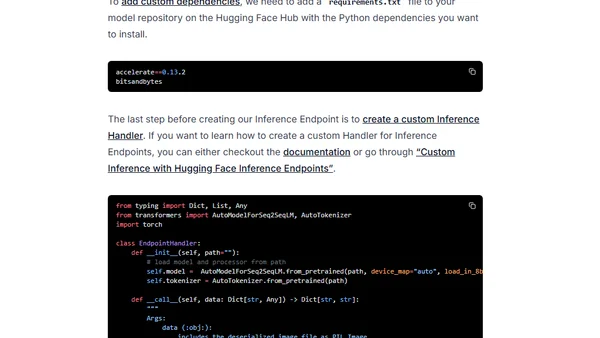

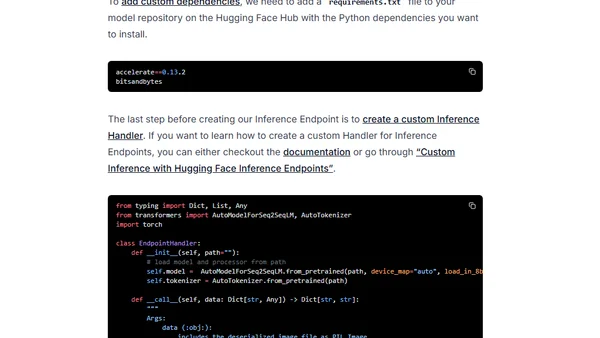

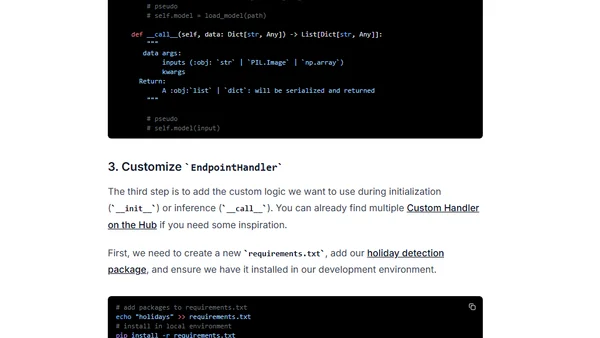

A tutorial on creating custom inference handlers for Hugging Face Inference Endpoints to add business logic and dependencies.

Learn to optimize GPT-J inference using DeepSpeed-Inference and Hugging Face Transformers for faster GPU performance.

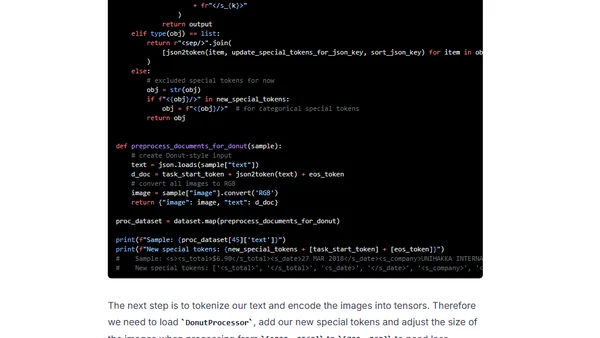

A tutorial on fine-tuning the Donut model for document parsing using Hugging Face Transformers and the SROIE dataset.

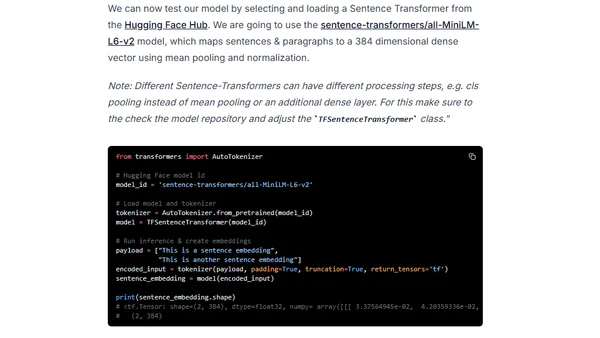

A tutorial on using Sentence Transformers models with TensorFlow and Keras to create text embeddings for semantic search and similarity tasks.

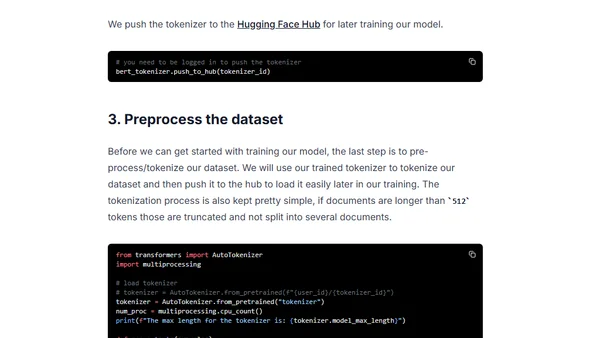

A tutorial on pre-training a BERT model from scratch using Hugging Face Transformers and Habana Gaudi accelerators on AWS.

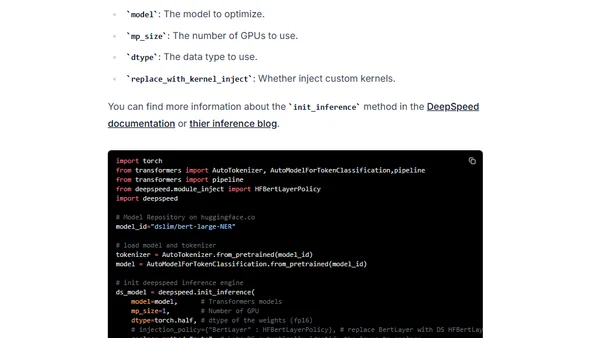

Learn to optimize BERT and RoBERTa models for faster GPU inference using DeepSpeed-Inference, reducing latency from 30ms to 10ms.

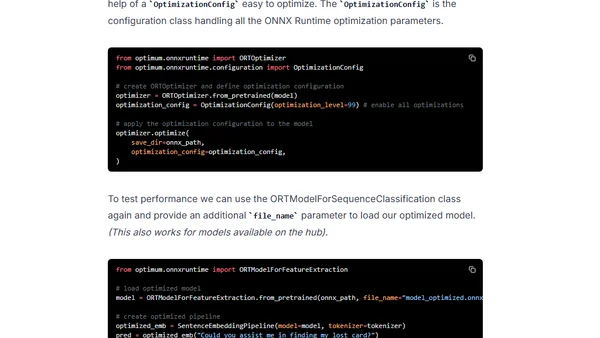

Learn to optimize Sentence Transformers models for faster inference using Hugging Face Optimum, ONNX Runtime, and dynamic quantization.

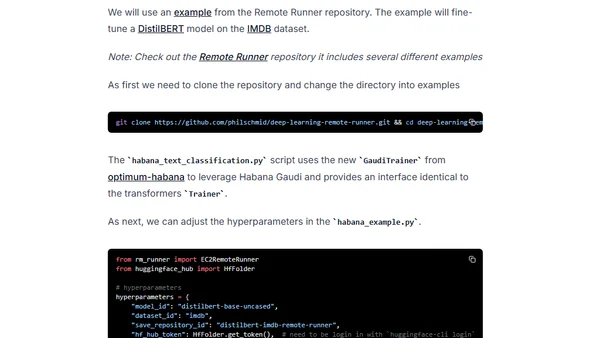

A guide to simplifying deep learning workflows using AWS EC2 Remote Runner and Habana Gaudi processors for efficient, cost-effective model training.

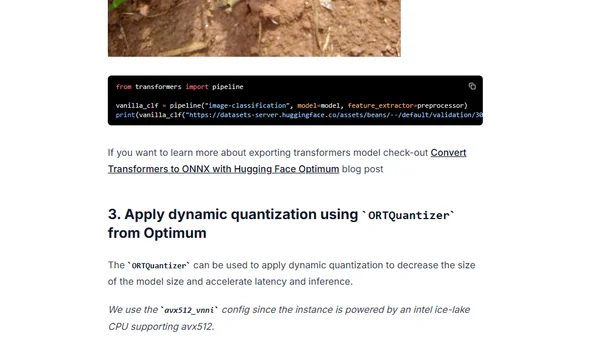

Learn to accelerate Vision Transformer (ViT) models using quantization with Hugging Face Optimum and ONNX Runtime for improved latency.

Learn to optimize Hugging Face Transformers models for GPU inference using Optimum and ONNX Runtime to reduce latency.

Learn how to fine-tune the XLM-RoBERTa model for multilingual text classification using Hugging Face libraries on cost-efficient Habana Gaudi AWS instances.

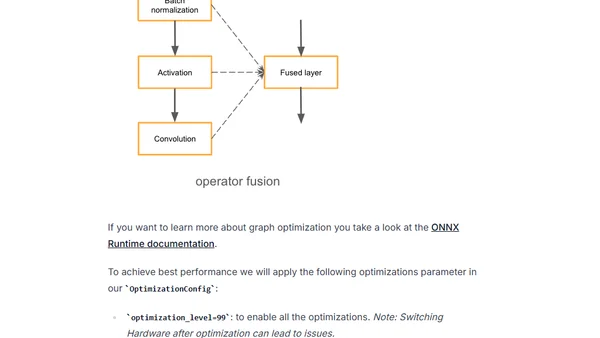

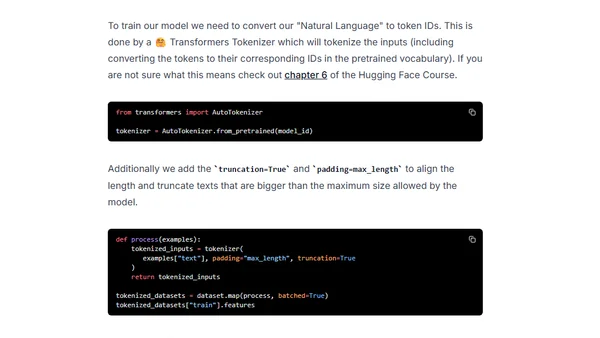

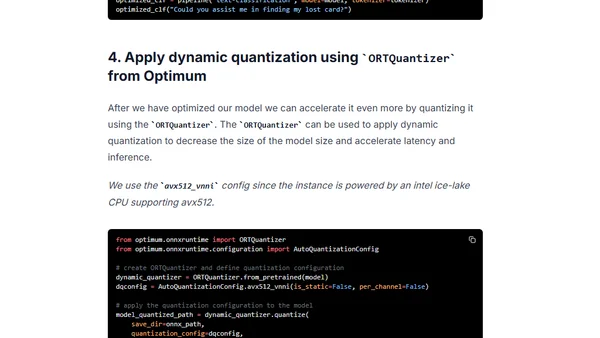

Learn to optimize Hugging Face Transformers models using Optimum and ONNX Runtime for faster inference with dynamic quantization.

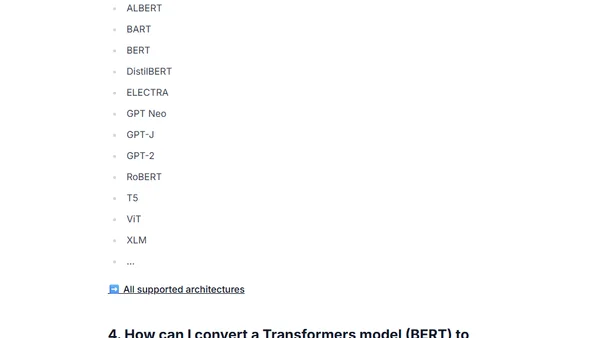

A guide on converting Hugging Face Transformers models to the ONNX format using the Optimum library for optimized deployment.

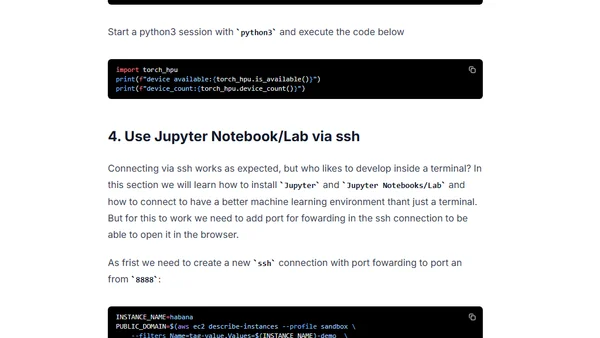

A guide to setting up a deep learning environment on AWS using Habana Gaudi accelerators and Hugging Face libraries for transformer models.

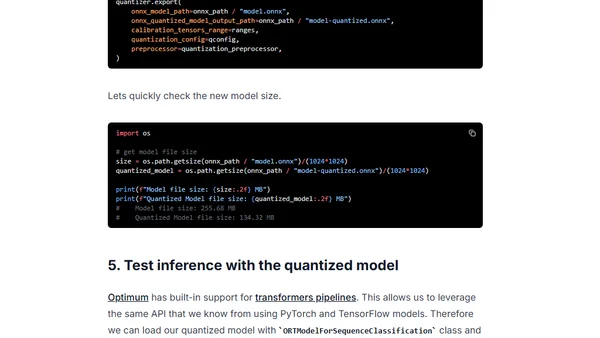

Learn how to use Hugging Face Optimum and ONNX Runtime to apply static quantization to a DistilBERT model, achieving ~3x latency improvements.