Accelerate Vision Transformer (ViT) with Quantization using Optimum

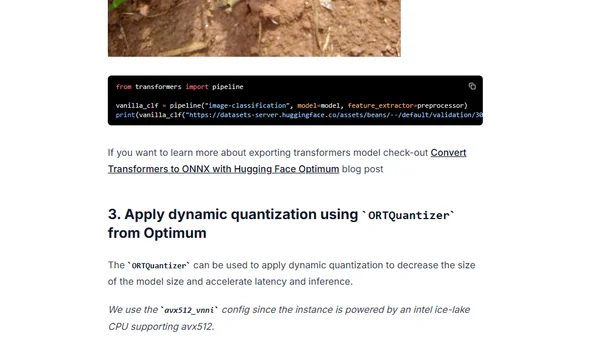

This technical tutorial explains how to optimize Vision Transformer (ViT) models by applying dynamic quantization with Hugging Face Optimum and ONNX Runtime. It covers converting a model to ONNX, applying quantization, and evaluating the latency gains while maintaining accuracy.
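To make the idea of dynamic quantization concrete, here is a minimal pure-Python sketch of the 8-bit affine mapping it relies on. It is not the Optimum or ONNX Runtime implementation. The function names `quantize_dynamic` and `dequantize` and the sample `activations` are hypothetical, chosen only for illustration. The point is that the scale and zero point are derived from the values at hand rather than from a calibration dataset.

```python
# Illustrative sketch only: hypothetical helper names, not a library API.

def quantize_dynamic(values, num_bits=8):
    """Affine-quantize floats to unsigned integers using the observed range."""
    qmin, qmax = 0, 2 ** num_bits - 1
    # Force the range to include zero so that 0.0 maps to an exact integer.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    # Fall back to 1.0 when every value is zero, to avoid dividing by zero.
    scale = (hi - lo) / (qmax - qmin) or 1.0
    zero_point = max(qmin, min(qmax, round(qmin - lo / scale)))
    quantized = [
        max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Map the integers back to approximate float values."""
    return [(q - zero_point) * scale for q in quantized]


# Made-up activation values, used only to exercise the functions.
activations = [-1.2, -0.3, 0.0, 0.45, 2.1]
q, scale, zero_point = quantize_dynamic(activations)
restored = dequantize(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(activations, restored))

print("quantized:", q)
print("scale:", scale, "zero point:", zero_point)
print("max round-trip error:", max_error)
```

The round-trip error stays within one quantization step (`scale`), which gives some intuition for why accuracy can hold up when latency improves. In a real pipeline, the quantization tooling applies a mapping of this kind to model weights ahead of time and computes the activation parameters at inference time.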
