From logistic regression to AI

Explores the evolution from simple logistic regression to modern AI, comparing model complexity, data requirements, and the surprising effectiveness of large neural networks.

A reflection on a decade-old blog post about deep learning, examining past predictions on architecture, scaling, and the field's evolution.

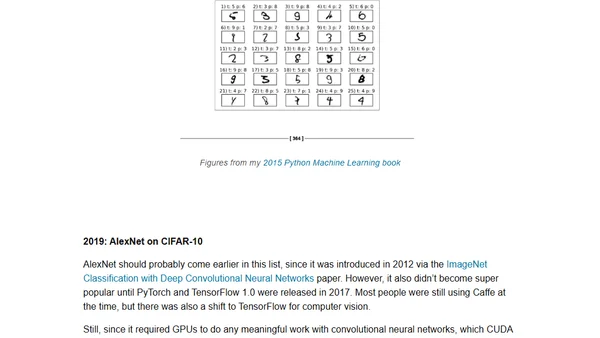

A historical overview of beginner-friendly 'Hello World' examples in machine learning and AI, from 2013's Random Forests to 2025's Qwen3 with RLVR.

Argues that AI should be judged by its outputs and capabilities, not by debates over its internal mechanisms or consciousness.

Explores AI as a new computing paradigm (Software 2.0), where automation shifts from specifiable tasks to verifiable ones, explaining its impact on job markets and AI progress.

Explores Andrej Karpathy's concept of Software 2.0, where AI writes programs through objectives and gradient descent, focusing on task verifiability.

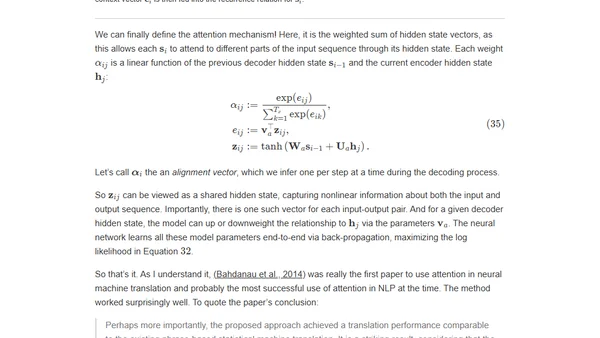

A detailed academic history tracing the core ideas behind large language models, from distributed representations to the transformer architecture.

A technical AI researcher questions whether human 'world models' are as emergent and training-dependent as those in large language models (LLMs).

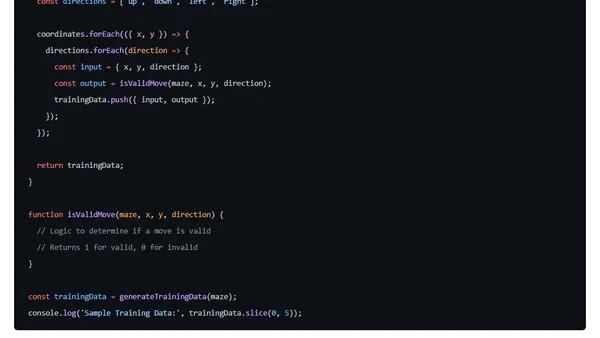

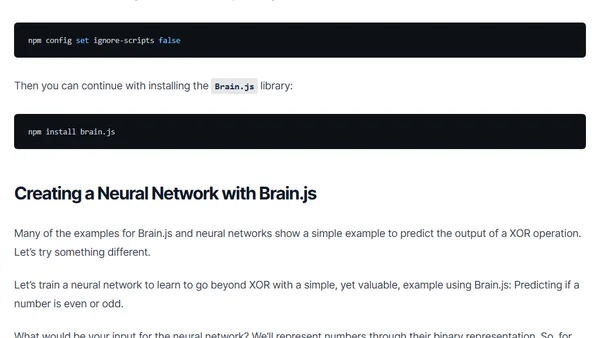

A tutorial on training a neural network in JavaScript to solve ASCII mazes using the brain.js library.

A guide for tech leaders on choosing between traditional coding, training models, and prompting LLMs for software development, based on Andrej Karpathy's concepts.

A course teaching how to code Large Language Models (LLMs) from scratch to deeply understand their inner workings and fundamentals.

A course teaching how to code Large Language Models from scratch to deeply understand their inner workings, with practical video tutorials.

An AI researcher shares her journey into GPU programming and introduces WebGPU Puzzles, a browser-based tool for learning GPU fundamentals from scratch.

A guide to building neural networks using JavaScript and the brain.js library, covering fundamental concepts for web developers.

Argues that the 'age of data' for AI is not ending but evolving into an era of 'superhuman data' with higher quality and knowledge density.

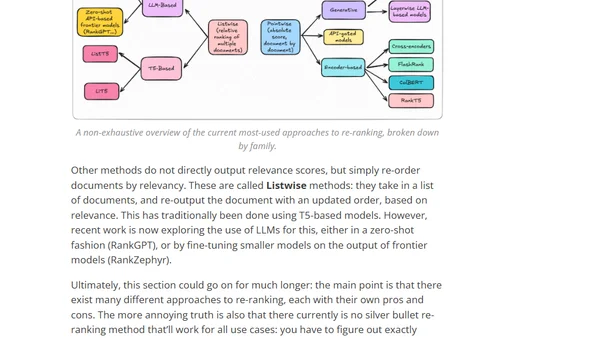

Introduces rerankers, a lightweight Python library providing a unified interface for various document re-ranking models used in information retrieval pipelines.

A technical article that explores deep neural networks by comparing classic computational methods with modern ML, using the calculation of the sine function as an example and implementing it in Kotlin.

An analysis of the ARC Prize AI benchmark, questioning whether human-level intelligence can be achieved solely through deep learning and transformers.

A robotics AI lead reflects on the field's future, discussing scaling robot autonomy with neural networks and parallels to large language models.

Announcing libactivation, a new Python package on PyPI providing activation functions and their derivatives for machine learning and neural networks.
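To illustrate what "an activation function and its derivative" means in practice, here is a minimal standalone sketch for the logistic sigmoid (the same function used in logistic regression) and ReLU. It does not use or reproduce libactivation's API; the function names below are hypothetical and chosen only for illustration.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid 1 / (1 + e^-x), computed so that large |x| does not overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, rewrite as e^x / (1 + e^x) to avoid computing e^(large positive).
    z = math.exp(x)
    return z / (1.0 + z)


def sigmoid_derivative(x: float) -> float:
    """Derivative of the sigmoid, using the identity s'(x) = s(x) * (1 - s(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)


def relu(x: float) -> float:
    """Rectified linear unit: max(0, x)."""
    return x if x > 0 else 0.0


def relu_derivative(x: float) -> float:
    """Derivative of ReLU; it is undefined at 0, and this sketch uses 0 there by convention."""
    return 1.0 if x > 0 else 0.0


if __name__ == "__main__":
    print(sigmoid(0.0), sigmoid_derivative(0.0))  # 0.5 0.25
```

Pairing each function with its derivative is what backpropagation needs: the derivative is evaluated at the pre-activation value to scale the incoming gradient.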