MicroGrad.jl: Part 3, Automation with IRTools

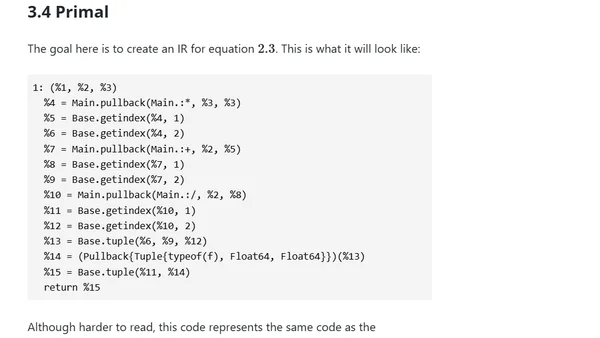

Explores using IRTools.jl for robust automatic differentiation in Julia, focusing on metaprogramming to generate forward and backward passes.

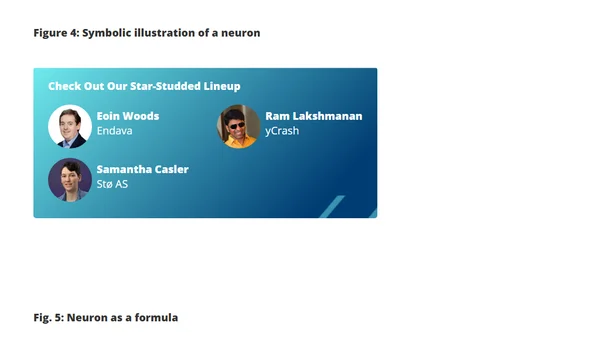

A technical article exploring deep neural networks by comparing classic computational methods to modern ML, using sine function calculation as an example and implementing it in Kotlin.

An introduction to building a minimal automatic differentiation package in Julia, focusing on explicit chain rules and the Julia AD ecosystem.