MicroGrad.jl: Part 5 MLP

Part 5 of a series on building an automatic differentiation package in Julia, demonstrating its use to create and train a multi-layer perceptron on the moons dataset.

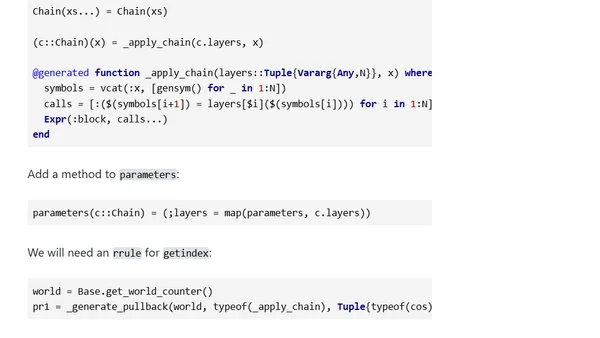

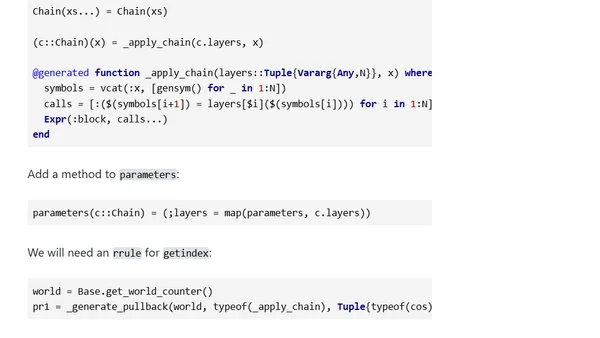

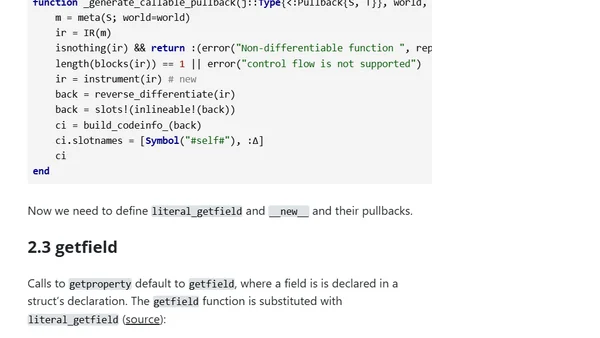

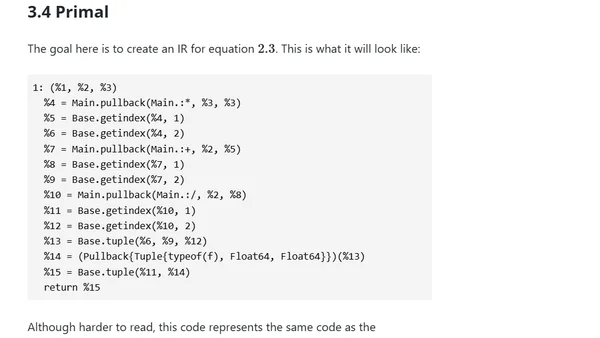

Extends a Julia automatic differentiation library (MicroGrad.jl) to handle map, getfield, and anonymous functions, enabling gradient descent for polynomial fitting.

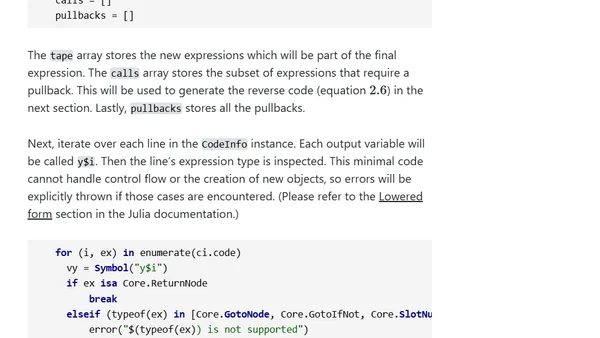

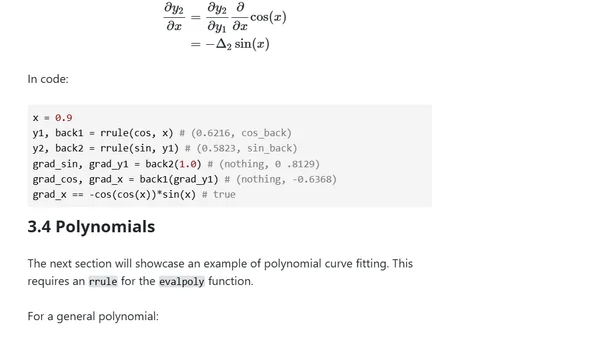

Explores using IRTools.jl for robust automatic differentiation in Julia, focusing on metaprogramming to generate forward and backward passes.

Explores automating automatic differentiation in Julia using metaprogramming and expression-based approaches to generate forward and backward passes.

An introduction to building a minimal automatic differentiation package in Julia, focusing on explicit chain rules and the Julia AD ecosystem.

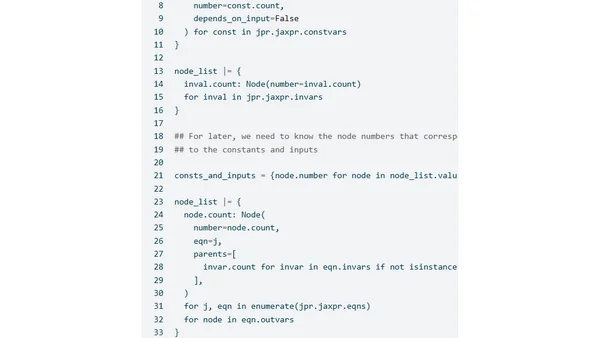

A technical exploration of implementing Laplace approximations using JAX, focusing on sparse autodiff and JAXPR manipulation for efficient gradient computation.

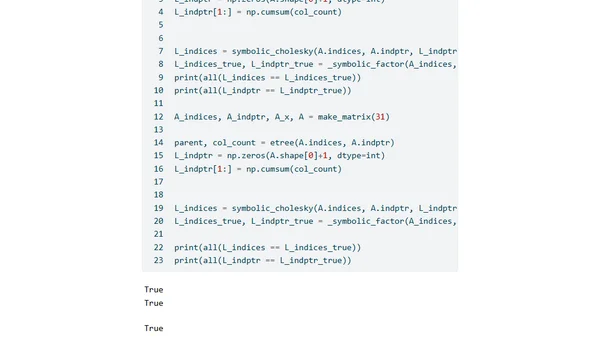

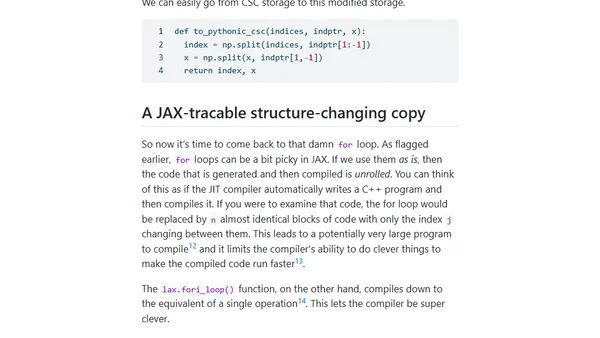

Exploring JAX-compatible sparse Cholesky decomposition, focusing on symbolic factorization and JAX's control flow challenges.

Part 6 of a series on making sparse linear algebra differentiable in JAX, focusing on implementing Jacobian-vector products for custom primitives.

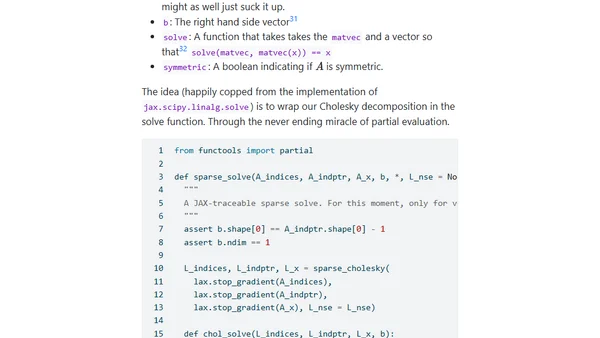

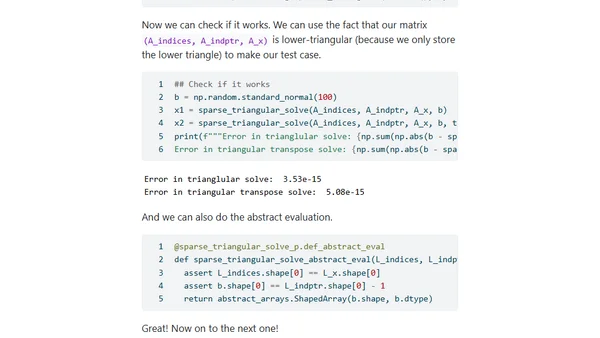

Part 5 of a series on implementing differentiable sparse linear algebra in JAX, focusing on registering new JAX-traceable primitives.

Explores design options for implementing autodifferentiable sparse matrices in JAX to accelerate statistical models, focusing on avoiding redundant computations.

Explores challenges integrating sparse Cholesky factorizations with JAX for faster statistical inference in PyMC.

Explores the practical uses of reversible computing, from automatic differentiation in deep learning to distributed systems and database operations.