Accelerate GPT-J inference with DeepSpeed-Inference on GPUs

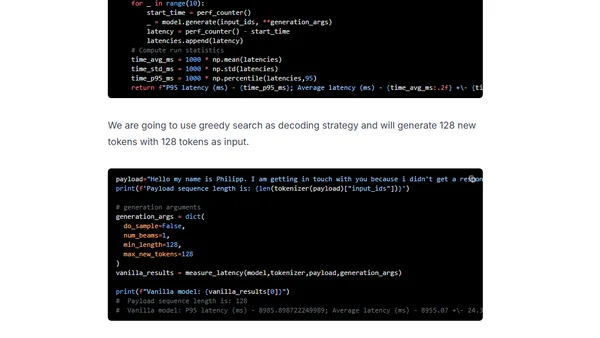

This technical tutorial demonstrates how to accelerate GPT-J and similar transformer models for inference on GPUs using DeepSpeed-Inference. It covers setting up the environment, loading the model, applying DeepSpeed optimizations, and evaluating the performance gains, targeting single-GPU setups for models like GPT-2, GPT-Neo, and GPT-J 6B.
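The load-then-optimize flow described above can be sketched roughly as follows. This is a minimal sketch, not the tutorial's own code: the helper names `build_inference_kwargs` and `load_optimized_model` are hypothetical, the model id `EleutherAI/gpt-j-6B` and the fp16 dtype are assumptions, and `mp_size` is the older spelling of the GPU-count argument to `deepspeed.init_inference`, which newer DeepSpeed releases may flag as deprecated. Heavy imports are kept inside the function so the file can be imported on a machine without a GPU.

```python
def build_inference_kwargs(num_gpus: int = 1) -> dict:
    """Keyword arguments for deepspeed.init_inference (hypothetical helper).

    The dtype is added by the caller once torch has been imported.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return {
        "mp_size": num_gpus,                 # number of GPUs; 1 for a single-GPU setup
        "replace_with_kernel_inject": True,  # swap supported layers for fused kernels
    }


def load_optimized_model(model_id: str = "EleutherAI/gpt-j-6B", num_gpus: int = 1):
    """Load a causal LM in fp16 and wrap it with DeepSpeed-Inference.

    Assumes torch, transformers, and deepspeed are installed and a CUDA GPU
    with enough memory for the chosen model is available.
    """
    import torch
    import deepspeed
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype=torch.float16)

    engine = deepspeed.init_inference(
        model,
        dtype=torch.float16,
        **build_inference_kwargs(num_gpus),
    )
    # engine.module is the underlying model with the optimized layers injected.
    return tokenizer, engine.module


if __name__ == "__main__":
    tokenizer, model = load_optimized_model()
    inputs = tokenizer("DeepSpeed-Inference is", return_tensors="pt").to("cuda")
    output_ids = model.generate(**inputs, max_new_tokens=32)
    print(tokenizer.decode(output_ids[0], skip_special_tokens=True))
```

To evaluate the gain, one would time the same `generate` call on the plain fp16 model and on the DeepSpeed-wrapped model, using identical prompts and generation settings.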
