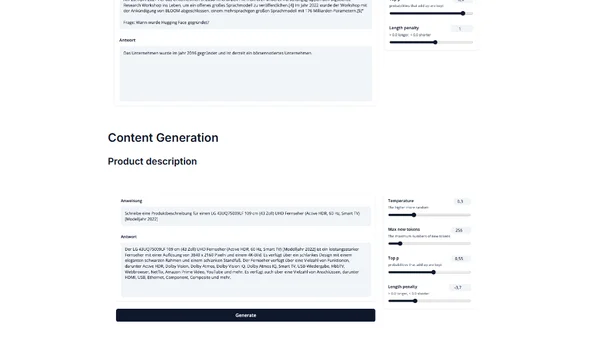

Train and Deploy BLOOM with Amazon SageMaker and PEFT

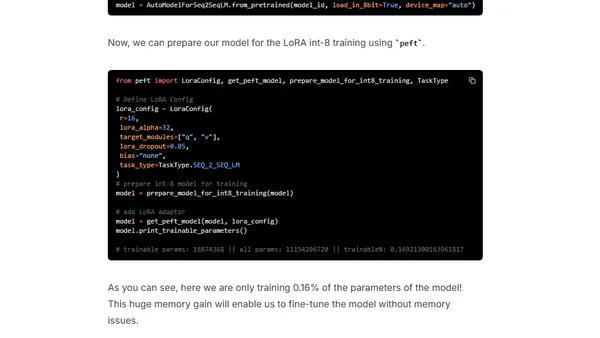

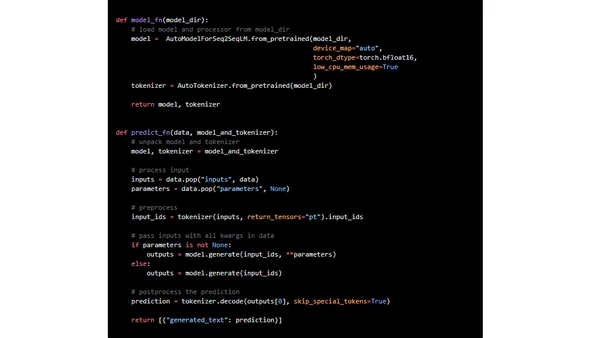

A technical guide on fine-tuning the BLOOMZ language model using PEFT and LoRA techniques, then deploying it on Amazon SageMaker.

Philipp Schmid is a Staff Engineer at Google DeepMind, building AI Developer Experience and DevRel initiatives. He specializes in LLMs, RLHF, and making advanced AI accessible to developers worldwide.

189 articles from this blog

Introduces IGEL, an instruction-tuned German large language model based on BLOOM, for NLP tasks like translation and QA.

A technical guide on fine-tuning the large FLAN-T5 XXL model efficiently using LoRA and Hugging Face libraries on a single GPU.

A technical guide on deploying Google's FLAN-UL2 20B large language model for real-time inference using Amazon SageMaker and Hugging Face.

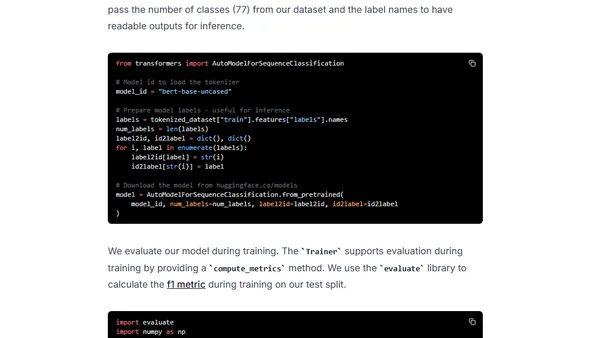

A tutorial on fine-tuning a BERT model for text classification using the new PyTorch 2.0 framework and the Hugging Face Transformers library.

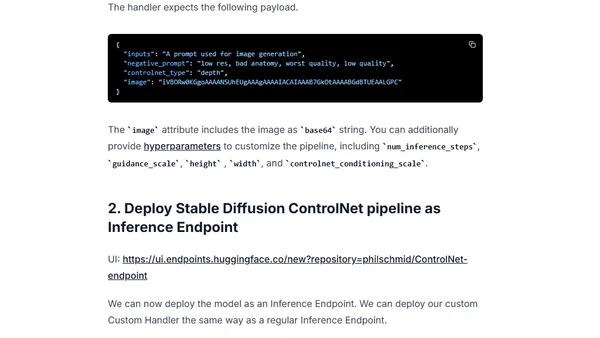

Learn how to deploy and use ControlNet for controlled text-to-image generation via Hugging Face Inference Endpoints as a scalable API.

Guide to fine-tuning the large FLAN-T5 XXL model using Amazon SageMaker managed training and DeepSpeed for optimization.

A technical guide on fine-tuning large FLAN-T5 models (XL/XXL) using DeepSpeed ZeRO and Hugging Face Transformers for efficient multi-GPU training.

A technical guide on deploying the FLAN-T5-XXL large language model for real-time inference using Amazon SageMaker and Hugging Face.

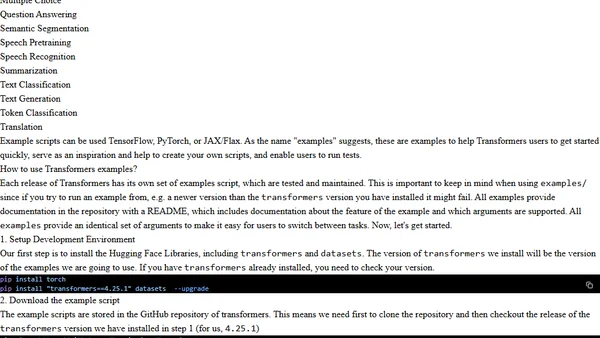

A guide to using the Hugging Face Transformers library, with examples of fine-tuning models like BERT and BART for NLP and computer vision tasks.

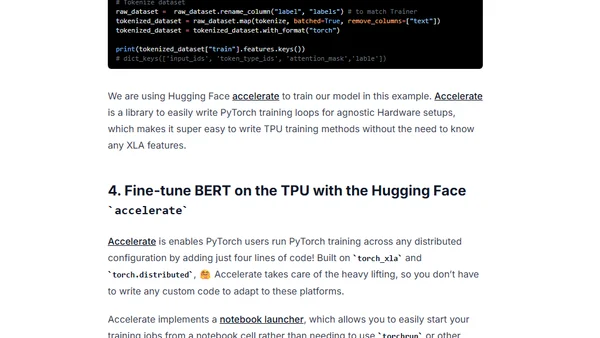

A tutorial on fine-tuning a BERT model for text classification using Hugging Face Transformers and Google Cloud TPUs with PyTorch.

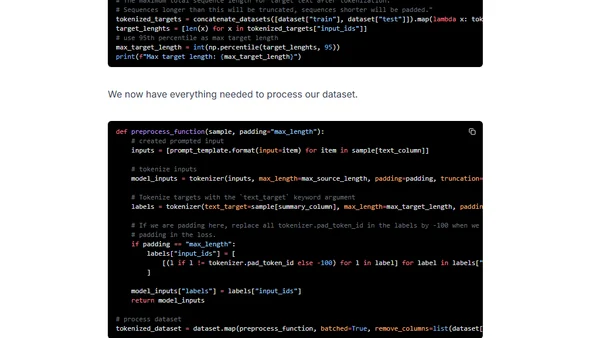

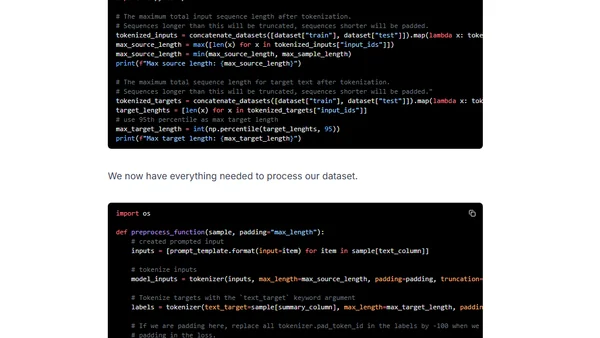

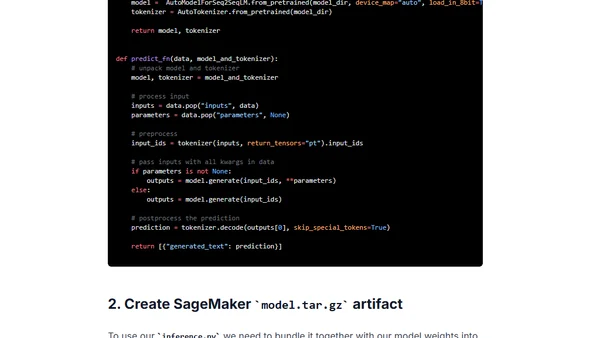

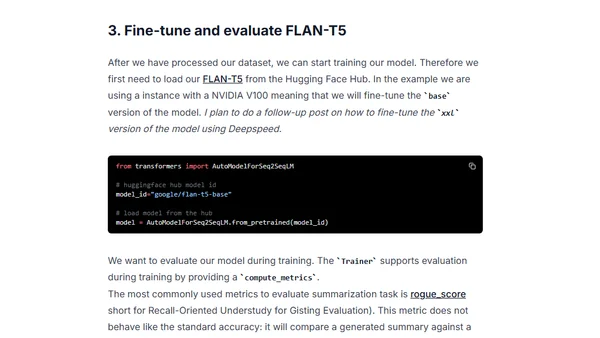

A tutorial on fine-tuning Google's FLAN-T5 model to summarize chats and dialogues using the SAMSum dataset and Hugging Face Transformers.

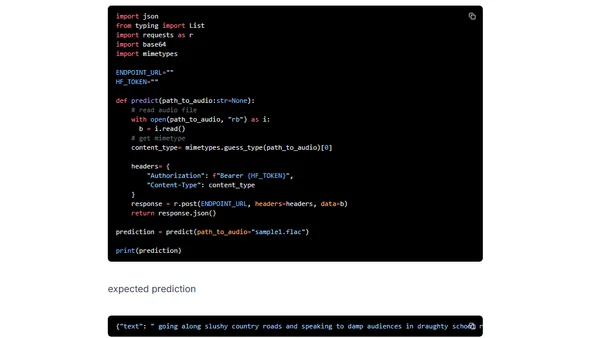

A tutorial on deploying OpenAI's Whisper speech recognition model using Hugging Face Inference Endpoints for scalable transcription APIs.

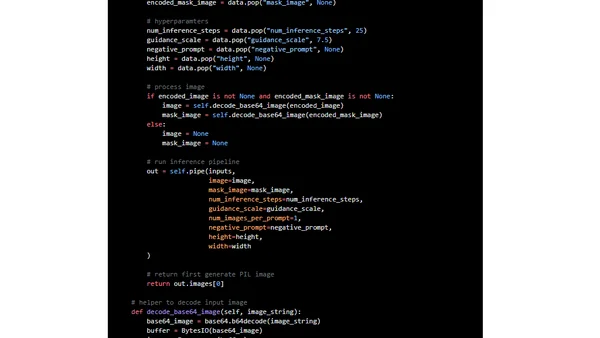

A tutorial on using Hugging Face Inference Endpoints to deploy and run Stable Diffusion 2 for AI image inpainting via a custom API.

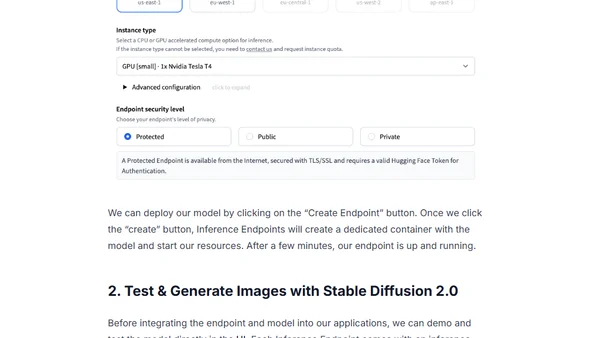

A tutorial on deploying Stable Diffusion 2.0 for image generation using Hugging Face Inference Endpoints and integrating it via an API.

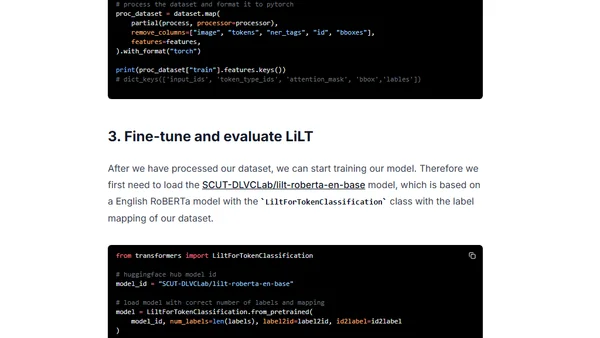

A tutorial on fine-tuning the LiLT model for language-agnostic document understanding and information extraction using Hugging Face Transformers.

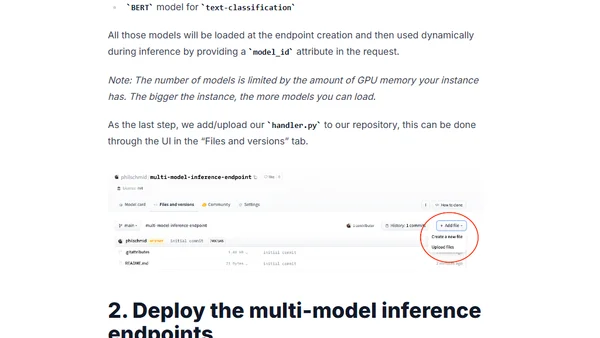

Learn how to deploy multiple ML models on a single GPU using Hugging Face Inference Endpoints for scalable, cost-effective inference.

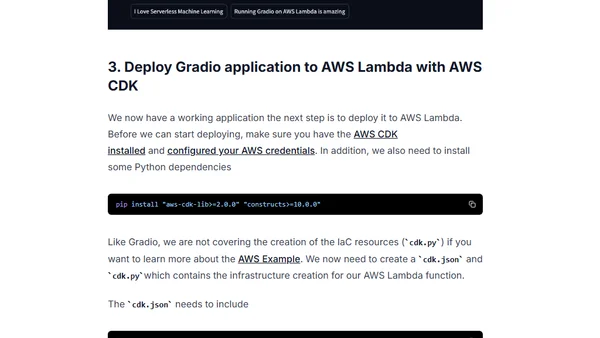

A tutorial on deploying a Hugging Face Gradio machine learning app for sentiment analysis to AWS Lambda using a serverless architecture.

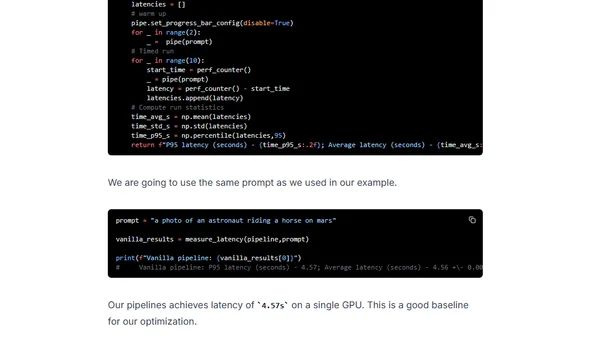

Learn to optimize Stable Diffusion for faster GPU inference using DeepSpeed-Inference and Hugging Face Diffusers.

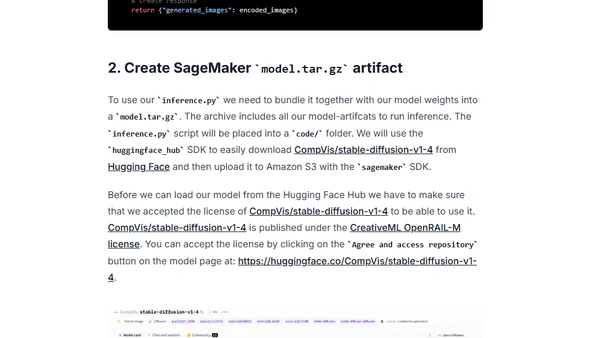

A technical guide on deploying the Stable Diffusion text-to-image model to Amazon SageMaker for real-time inference using the Hugging Face Diffusers library.