Train and Deploy BLOOM with Amazon SageMaker and PEFT

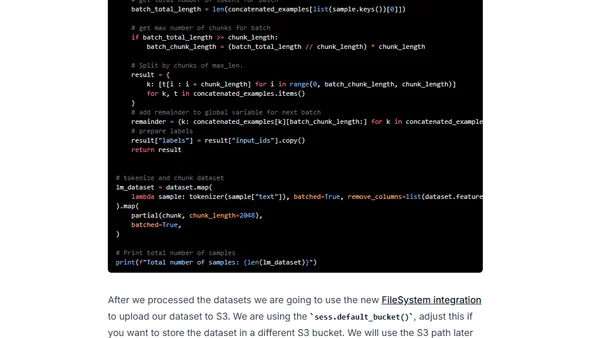

This tutorial demonstrates how to apply Parameter-Efficient Fine-Tuning (PEFT) methods, specifically LoRA (Low-Rank Adaptation), to fine-tune the 7B-parameter BLOOMZ model on a single GPU. It covers setting up the environment with Hugging Face libraries, preparing a dataset, fine-tuning efficiently with 8-bit quantization, and deploying the trained model to an Amazon SageMaker endpoint for inference.
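The summary names the technique but does not include the tutorial's code. The block below is a minimal, dependency-free sketch of what LoRA does to a single weight matrix. It is not the tutorial's implementation, which relies on Hugging Face libraries. It freezes a base weight `W0` and adds a scaled low-rank update `(alpha / r) * B A`, in which only `A` and `B` would be trained. It also estimates how much memory the weights alone need at 16-bit versus 8-bit precision. The helpers `matvec`, `init_lora`, `lora_forward`, `merge_weights`, `lora_param_count`, and `weight_memory_gb` are hypothetical names. The 4096 x 4096 shape, rank 8, and 7B parameter count are illustrative assumptions, not values taken from the tutorial.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]


def init_lora(d, k, r, seed=0):
    """Create LoRA factors for a d x k weight: A is r x k (small random), B is d x r (zeros).

    Zero-initialising B means the adapted layer starts out identical to the frozen one.
    """
    rng = random.Random(seed)
    a = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(r)]
    b = [[0.0] * r for _ in range(d)]
    return a, b


def lora_forward(x, w0, a, b, alpha):
    """h = W0 x + (alpha / r) * B (A x); W0 stays frozen, only A and B would be trained."""
    r = len(a)
    base = matvec(w0, x)  # frozen path, length d
    down = matvec(a, x)   # project the input down to rank r
    up = matvec(b, down)  # project back up to length d
    scale = alpha / r
    return [h + scale * u for h, u in zip(base, up)]


def merge_weights(w0, a, b, alpha):
    """Fold the adapter into the base weight: W = W0 + (alpha / r) * B A."""
    r = len(a)
    scale = alpha / r
    return [
        [w0[i][j] + scale * sum(b[i][t] * a[t][j] for t in range(r)) for j in range(len(w0[0]))]
        for i in range(len(w0))
    ]


def lora_param_count(d, k, r):
    """Trainable parameters LoRA adds to one d x k matrix: r * (d + k)."""
    return r * (d + k)


def weight_memory_gb(n_params, bits_per_param):
    """Rough memory for the weights alone (no activations, gradients, or optimizer state)."""
    return n_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    # Illustrative sizes (assumed, not from the tutorial): a 4096 x 4096 projection, rank 8.
    full = 4096 * 4096
    added = lora_param_count(4096, 4096, 8)
    print(f"full: {full:,}  LoRA: {added:,}  ({100 * added / full:.2f}%)")

    # Roughly 7B parameters stored in 16-bit versus 8-bit precision.
    print(f"16-bit: {weight_memory_gb(7e9, 16):.1f} GB, 8-bit: {weight_memory_gb(7e9, 8):.1f} GB")
```

When run as a script, it reports that a rank-8 adapter on a 4096 x 4096 matrix adds 65,536 trainable parameters, about 0.39% of the 16,777,216 in the full matrix. It also reports that 7B weights take roughly 14.0 GB at 16-bit precision versus 7.0 GB at 8-bit, before counting activations or optimizer state. Because `merge_weights` folds the adapter back into `W0`, a merged layer produces the same outputs as `lora_forward` without the extra low-rank matrix multiplications at inference time.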
