Getting started with Transformers and TPU using PyTorch

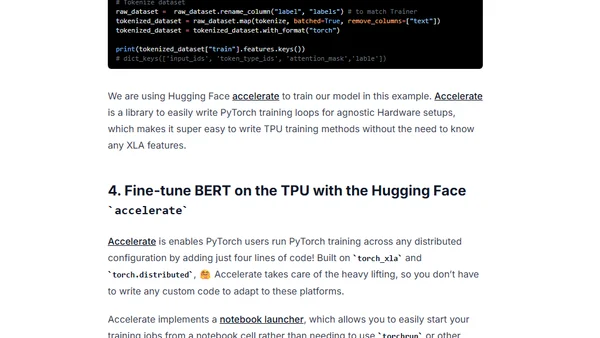

This technical guide provides step-by-step instructions for setting up a Google Cloud TPU VM, configuring a Jupyter environment, and using PyTorch with the Hugging Face Transformers library to fine-tune a BERT model for a text classification task. It covers environment setup, dataset preparation, and leveraging the accelerate library for TPU training.
