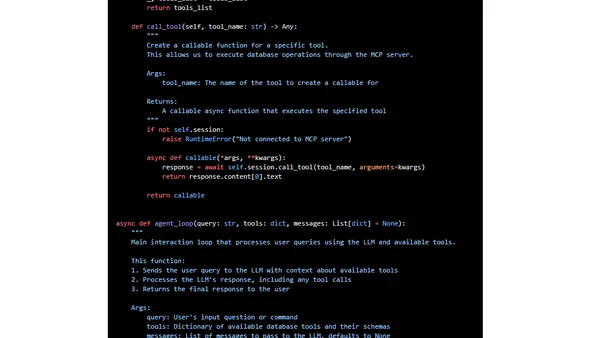

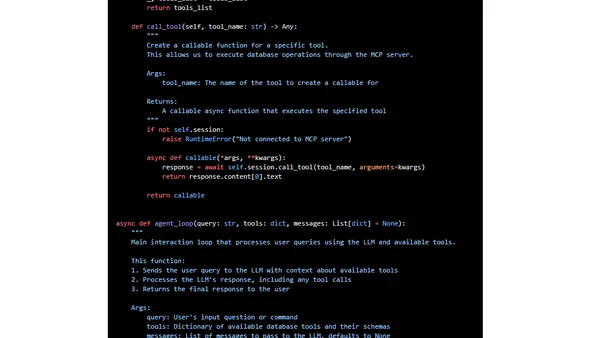

How to use Anthropic MCP Server with open LLMs, OpenAI or Google Gemini

A guide on using Anthropic's Model Context Protocol (MCP) to connect AI agents with tools and data sources, using various LLMs such as OpenAI models or Gemini.

Philipp Schmid is a Staff Engineer at Google DeepMind, building AI Developer Experience and DevRel initiatives. He specializes in LLMs, RLHF, and making advanced AI accessible to developers worldwide.

189 articles from this blog

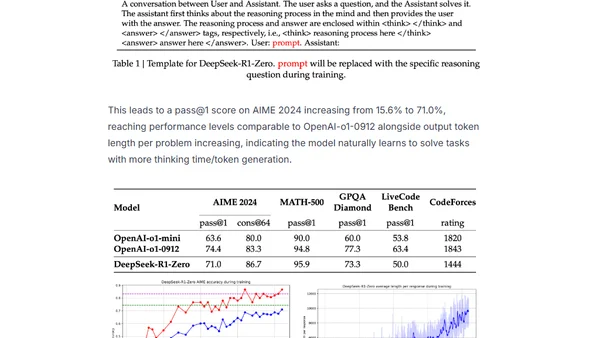

Explains the training of DeepSeek-R1, focusing on the Group Relative Policy Optimization (GRPO) reinforcement learning method.

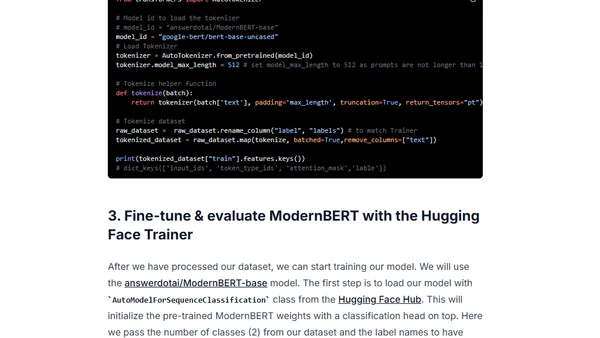

A tutorial on fine-tuning the ModernBERT model for classification tasks to build an efficient LLM router, covering setup, training, and evaluation.

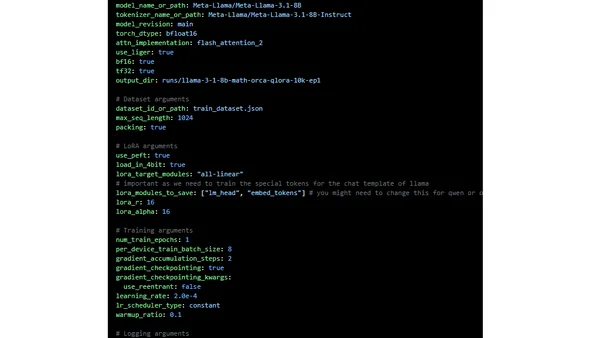

A technical guide on optimizing and scaling the fine-tuning of open-source large language models using Hugging Face tools in 2025.

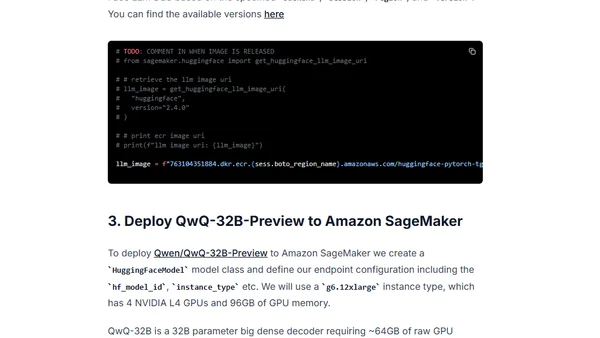

A technical guide on deploying the QwQ-32B-Preview open-source reasoning model on AWS SageMaker using Hugging Face's tools.

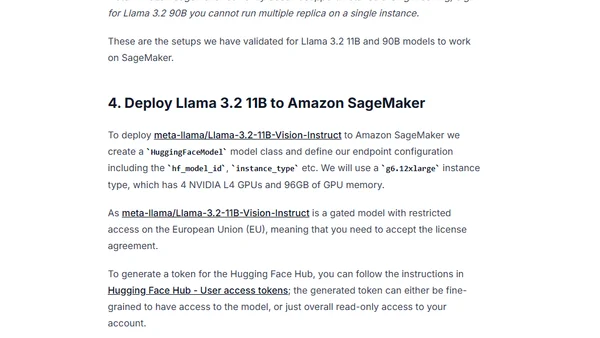

A technical guide on deploying Meta's Llama 3.2 Vision model on Amazon SageMaker using the Hugging Face LLM DLC.

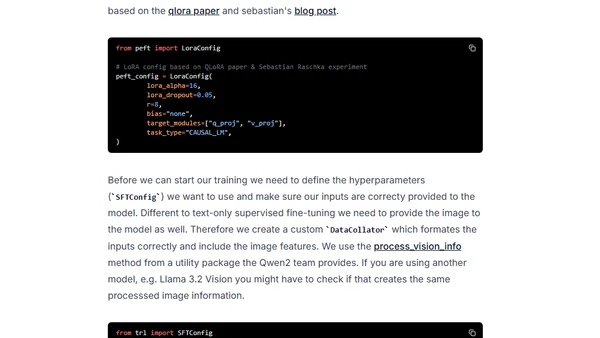

A technical guide on fine-tuning Vision-Language Models (VLMs) using Hugging Face's TRL library for custom applications like image-to-text generation.

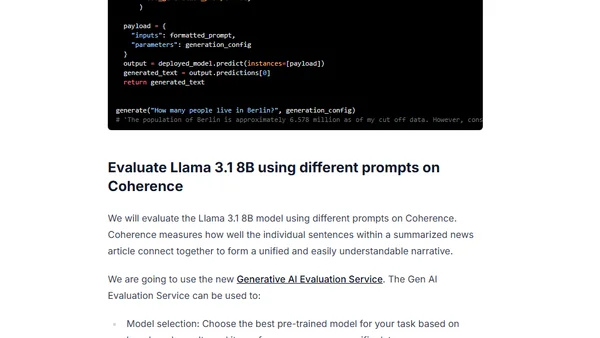

A technical guide on using Google's Vertex AI Gen AI Evaluation Service with Gemini to evaluate open LLMs like Llama 3.1.

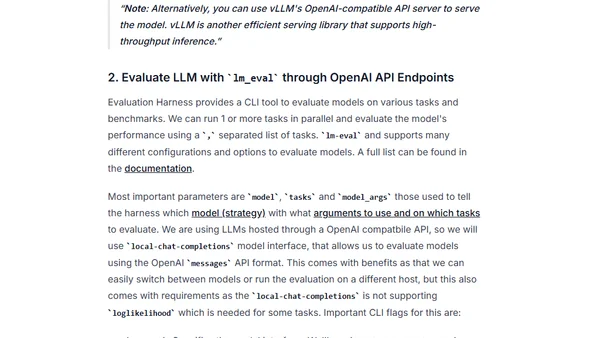

A guide to evaluating Large Language Models (LLMs) using the Evaluation Harness framework and optimized serving tools like Hugging Face TGI and vLLM.

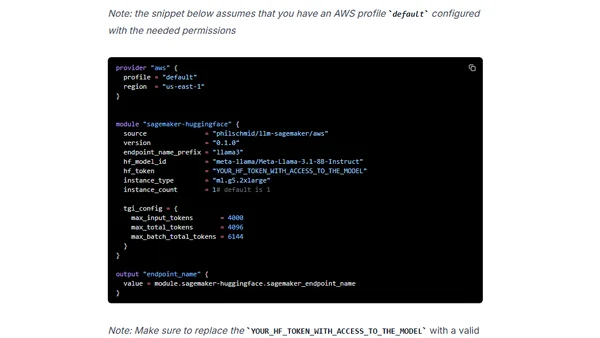

A guide to deploying open-source LLMs like Llama 3 to Amazon SageMaker using Terraform for Infrastructure as Code.

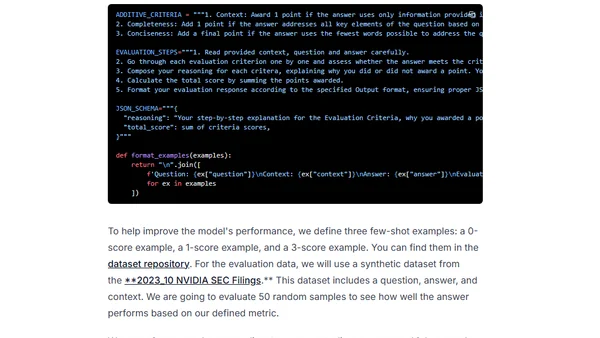

A guide to simplifying LLM evaluation workflows using clear metrics, chain-of-thought, and few-shot prompts, inspired by real-world examples.

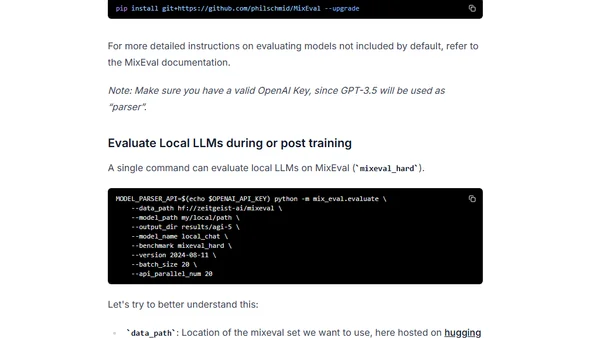

Introduces MixEval, a cost-effective LLM benchmark with high correlation to Chatbot Arena, for evaluating open-source language models.

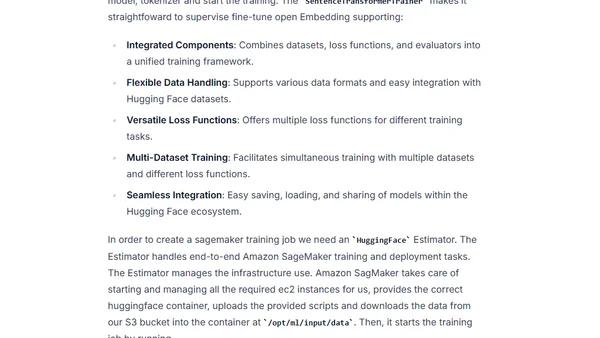

A guide to fine-tuning and deploying custom embedding models for RAG applications on Amazon SageMaker using Sentence Transformers v3.

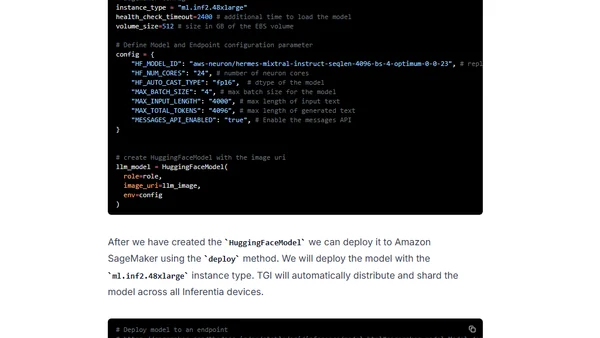

A technical guide on deploying the Mixtral 8x7B LLM on AWS Inferentia2 using Hugging Face Optimum and Amazon SageMaker.

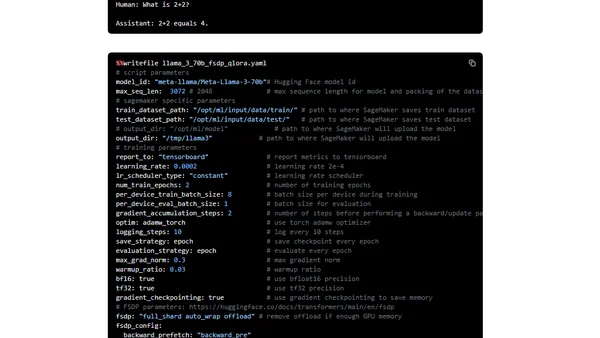

A technical guide on fine-tuning the Llama 3 LLM using PyTorch FSDP and Q-LoRA on Amazon SageMaker for efficient training.

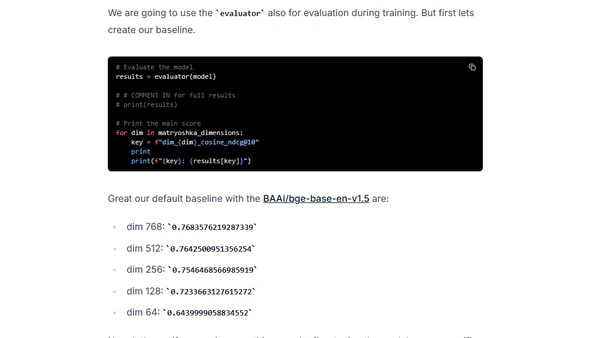

A guide to fine-tuning embedding models for RAG applications using Sentence Transformers 3, featuring Matryoshka Representation Learning for efficiency.

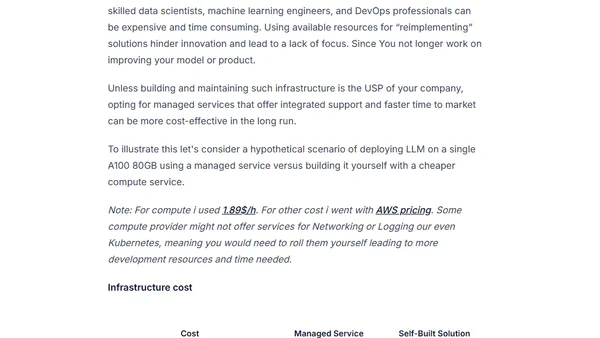

Analyzes the complex total cost of ownership for deploying generative AI models in production, beyond just raw compute expenses.

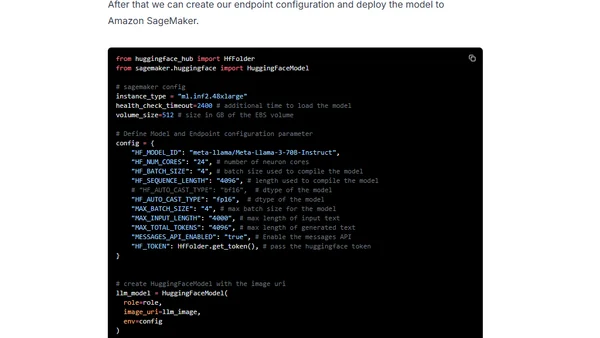

A technical guide on deploying Meta's Llama 3 70B Instruct model on AWS Inferentia2 using Hugging Face Optimum and Amazon SageMaker.

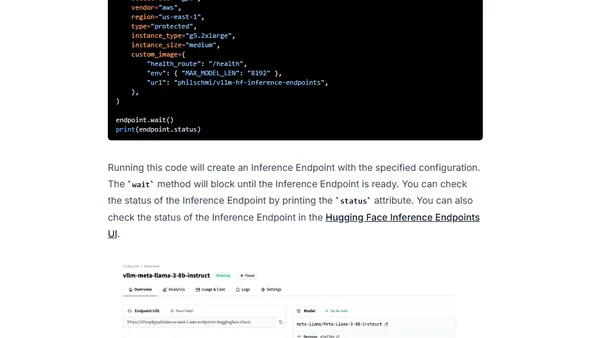

A tutorial on deploying open-source large language models (LLMs) like Llama 3 using the vLLM framework on Hugging Face Inference Endpoints.

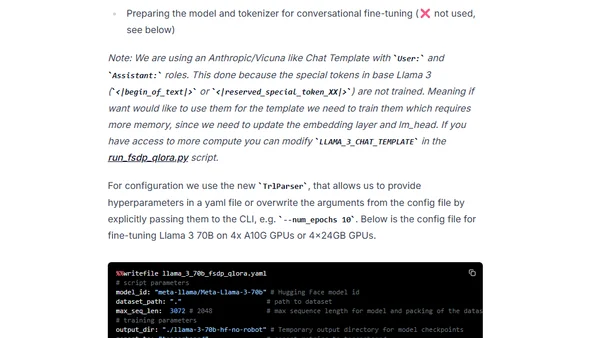

A technical guide on fine-tuning the Llama 3 70B model using PyTorch FSDP and Q-LoRA for efficient training on limited GPU hardware.