Fine-tune Llama 3 with PyTorch FSDP and Q-Lora on Amazon SageMaker

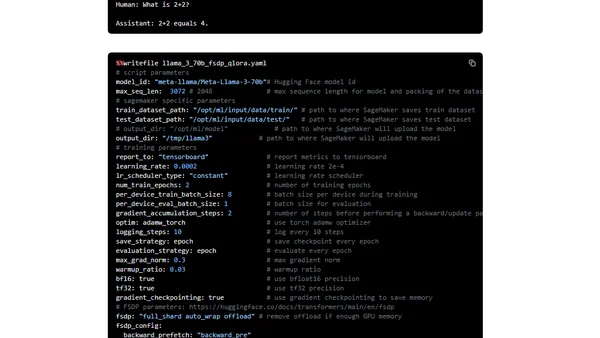

This tutorial explains how to fine-tune the Llama 3 large language model on Amazon SageMaker using PyTorch FSDP (Fully Sharded Data Parallel) and Q-Lora (quantized LoRA). It covers setting up the environment with Hugging Face libraries, preparing the dataset, and tuning the training configuration for specific AWS instances to reduce memory footprint and compute requirements.
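To make the memory-footprint point concrete, here is a rough back-of-the-envelope sketch, not taken from the tutorial itself. It estimates the memory needed for the model weights alone, comparing 16-bit weights with 4-bit quantized weights, and then sharding the quantized weights across devices as FSDP does. The parameter count (about 8 billion) and the shard count (4) are assumptions chosen for illustration, and the helper `weight_memory_gib` is hypothetical. The estimate ignores quantization constants, LoRA adapter weights, gradients, optimizer state, and activations, so real usage will be higher.

```python
def weight_memory_gib(num_params: float, bits_per_param: float, num_shards: int = 1) -> float:
    """Approximate per-device memory in GiB for model weights only.

    Hypothetical helper for illustration: it divides the total weight bytes
    evenly across shards and ignores every other source of memory use.
    """
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / num_shards / 2**30


# Assumption: a model with roughly 8 billion parameters.
params = 8e9

bf16_single = weight_memory_gib(params, bits_per_param=16)               # 16-bit weights, one device
q4_single = weight_memory_gib(params, bits_per_param=4)                  # 4-bit weights, one device
q4_sharded = weight_memory_gib(params, bits_per_param=4, num_shards=4)   # 4-bit weights, 4 shards (assumed)

print(f"16-bit, 1 device : {bf16_single:.2f} GiB")
print(f"4-bit,  1 device : {q4_single:.2f} GiB")
print(f"4-bit,  4 shards : {q4_sharded:.2f} GiB per device")
```

The two techniques compound: quantization shrinks the bytes per parameter, and sharding divides what remains across devices.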
