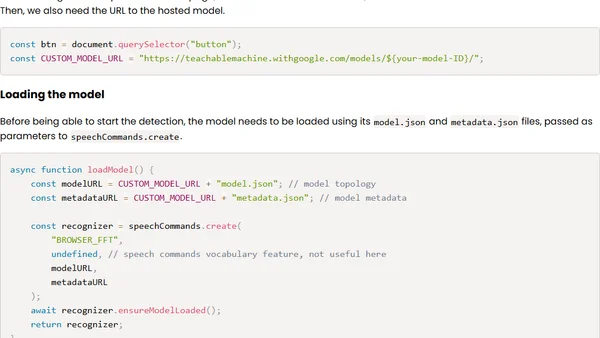

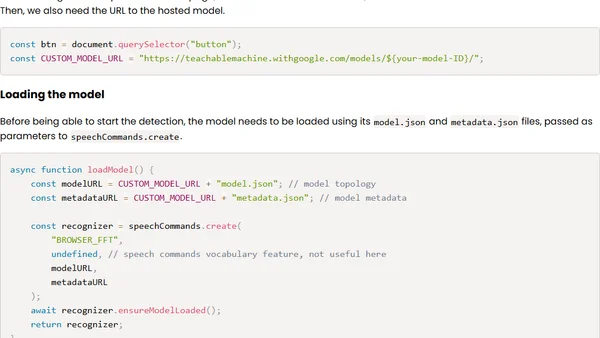

Control UIs using wireless earbuds and on-face interactions

A developer recreates a research project using wireless earbuds' microphones to detect facial touch gestures and control UIs via machine learning in JavaScript.

A critical analysis of the machine learning bubble, arguing its lasting impact will be a proliferation of low-quality, automated content and services, not true AGI.

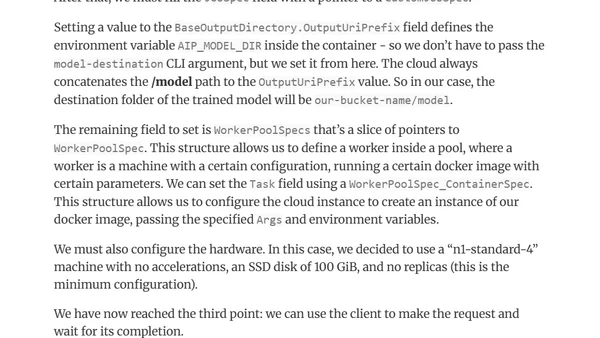

A technical guide on building and deploying a custom machine learning model using Vertex AI on Google Cloud, implemented with Go, Python, and Docker.

Interview with Itai Bar Sinai, co-founder of Mona Labs, discussing AI monitoring, product-oriented data science, and the Israeli ML community.

A developer shares insights from building an AI audit prototype, discussing the importance of defensibility and lessons from banking model audits.

The author shares how following their curiosity in writing and tech led to a machine learning book, promotions, and career growth.

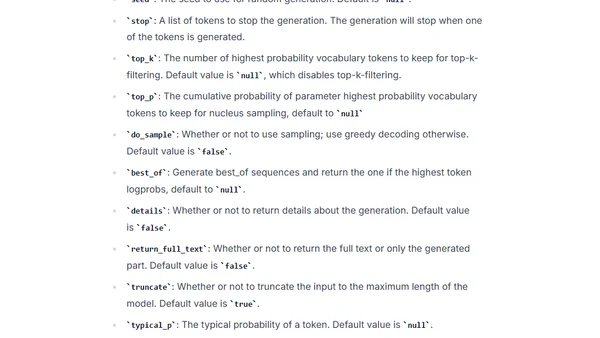

A guide to deploying open-source Large Language Models (LLMs) like Falcon using Hugging Face's managed Inference Endpoints service.

Interview with Chetan Sharma, CEO of Eppo, discussing A/B testing, statistics engineering, and building a modern experimentation platform.

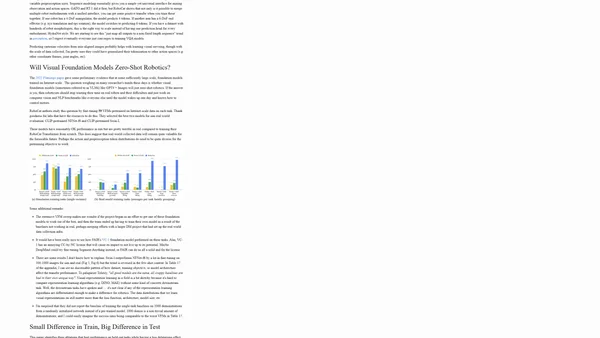

A summary and analysis of DeepMind's RoboCat paper, a self-improving foundation agent for robotic manipulation using Transformer models.

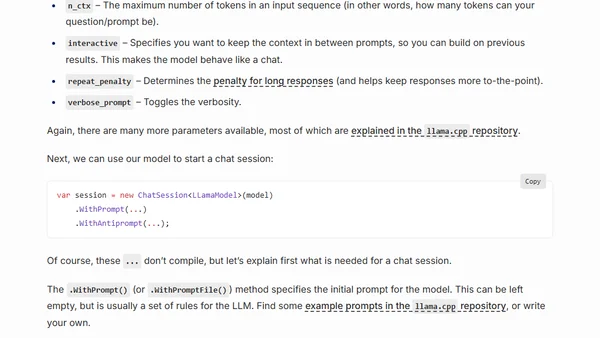

A guide to running open-source Large Language Models (LLMs) like LLaMA locally on your CPU using C# and the LLamaSharp library.

Interview with AI researcher Curtis Northcutt on his journey from rural Kentucky to MIT, founding Cleanlab, and his work on confident learning and dataset improvement.

Interview with Frank Liu on vector databases, embeddings, his career in ML/hardware, and work culture differences between China and the US.

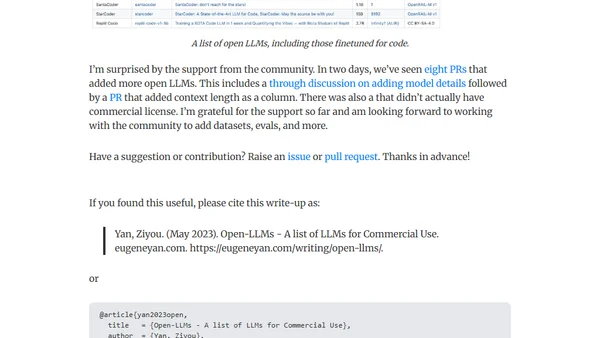

A curated list of open-source Large Language Models (LLMs) available for commercial use, including community-contributed updates and details.

An introduction to artificial neural networks, explaining the perceptron as the simplest building block and its ability to learn basic logical functions.
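
As a rough illustration of the idea in this blurb, here is a minimal sketch of a two-input perceptron (weighted sum, bias, step activation) trained with the classic perceptron learning rule on the AND and OR truth tables. The function names, learning rate, and epoch count are hypothetical choices for this sketch, not code from the article.

```python
# Minimal perceptron sketch: two inputs, a bias, and a step activation,
# trained with the classic perceptron learning rule.

def step(z):
    """Step activation: fire (1) when the weighted sum is non-negative."""
    return 1 if z >= 0 else 0


def predict(weights, bias, x):
    """Weighted sum of the inputs plus bias, passed through the step function."""
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_perceptron(samples, lr=1.0, epochs=20):
    """Perceptron learning rule: nudge weights and bias by lr * error * input."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)
            if error != 0:
                mistakes += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:  # every sample classified correctly: stop early
            break
    return weights, bias


# Truth tables for two basic logical functions (both linearly separable).
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
OR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

for name, table in [("AND", AND), ("OR", OR)]:
    w, b = train_perceptron(table)
    print(name, [predict(w, b, x) for x, _ in table])
# AND [0, 0, 0, 1]
# OR [0, 1, 1, 1]
```

Running the same training loop on XOR never reaches zero mistakes, because XOR is not linearly separable and a single perceptron can only draw one straight decision boundary.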

Learn about Low-Rank Adaptation (LoRA), a parameter-efficient method for fine-tuning large language models at a reduced computational cost.

Explains Low-Rank Adaptation (LoRA), a parameter-efficient technique for fine-tuning large language models to reduce computational costs.
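
To make the "parameter-efficient" claim in the two LoRA blurbs concrete, here is a minimal pure-Python sketch. It assumes the commonly used formulation h = W x + (alpha / r) B A x, where the pretrained W stays frozen, A starts with small random values, and B starts at zero. `LoRALinear`, `lora_param_count`, and the 4096 x 4096 example size are hypothetical illustrations, not code or numbers from either article.

```python
import random


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def lora_param_count(d_in, d_out, r):
    """Trainable parameters in the low-rank factors A (r x d_in) and B (d_out x r)."""
    return r * d_in + d_out * r


class LoRALinear:
    """A frozen weight matrix W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, W, r, alpha, seed=0):
        d_out, d_in = len(W), len(W[0])
        rng = random.Random(seed)
        self.W = W  # pretrained weights: frozen, never updated
        # A gets small random values; B starts at zero, so B @ A is zero and the
        # layer initially behaves exactly like the pretrained one.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]
        self.scale = alpha / r

    def forward(self, x):
        """W x + scale * B (A x); the low-rank path never builds a d_out x d_in matrix."""
        base = matvec(self.W, x)
        delta = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * d for b, d in zip(base, delta)]

    def merged_weight(self):
        """Fold the update into W (W + scale * B @ A) so inference needs no extra path."""
        r = len(self.A)
        return [
            [
                w + self.scale * sum(self.B[i][k] * self.A[k][j] for k in range(r))
                for j, w in enumerate(row)
            ]
            for i, row in enumerate(self.W)
        ]


# Full fine-tuning of a 4096 x 4096 weight vs. a rank-8 LoRA update.
print(4096 * 4096)                      # 16777216
print(lora_param_count(4096, 4096, 8))  # 65536
```

In this hypothetical layer the rank-8 update trains 65,536 parameters instead of 16,777,216, a 256x reduction, and `merged_weight` shows why the adapted model can be served at the same cost as the original once training is done.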

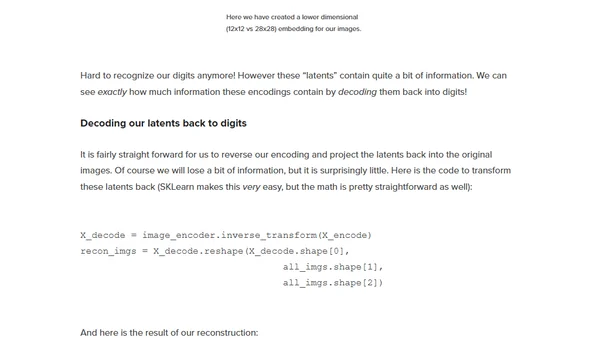

Introducing Linear Diffusion, a novel diffusion model built entirely from linear components for generating simple images like MNIST digits.

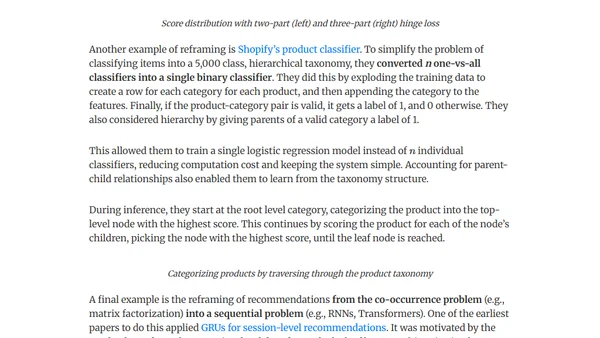

Explores essential design patterns for building efficient and maintainable machine learning systems in production, focusing on data pipelines and best practices.

A critical analysis of GPT-4's capabilities, questioning the 'miracle' narrative and exploring the technical foundations behind its success.

Explores privacy risks, bias, dependence, misinformation, and manipulation dangers associated with conversational AI and chatbots.