Building LLMs from the Ground Up: A 3-hour Coding Workshop

A 3-hour coding workshop video covering the implementation, training, and use of Large Language Models (LLMs) from scratch.

A 3-hour coding workshop teaching how to implement, train, and use Large Language Models (LLMs) from scratch with practical examples.

A philosophical and technical exploration of how Large Language Models (LLMs) transform 'next token prediction' into meaningful answer generation.

A hands-on review of K8sGPT, an AI-powered CLI tool for analyzing and troubleshooting Kubernetes clusters, including setup with local LLMs.

A technical review of the latest pre-training and post-training methodologies used in state-of-the-art large language models (LLMs) like Qwen 2 and Llama 3.1.

Analyzes the latest pre-training and post-training methodologies used in state-of-the-art LLMs like Qwen 2, Apple's models, Gemma 2, and Llama 3.1.

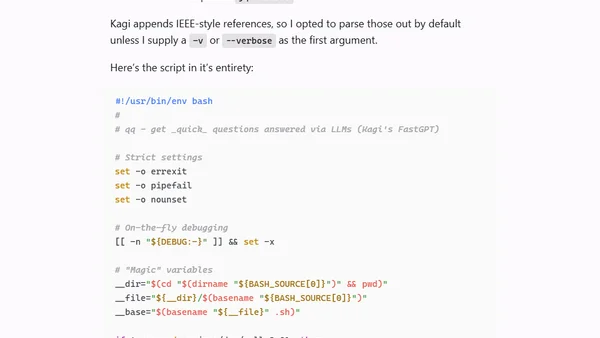

A developer creates a Bash script called 'qq' to query Kagi's FastGPT API from the terminal, improving on a command-line LLM tool concept.

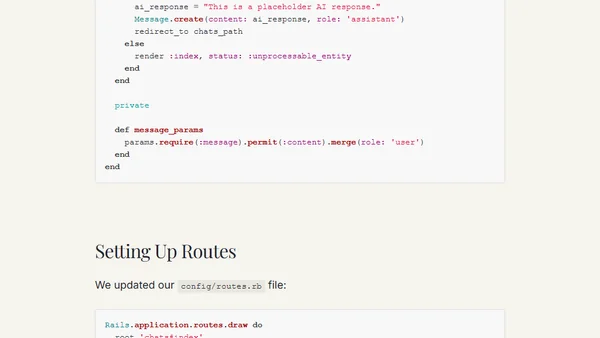

A tutorial on building a ChatGPT-like chat application using Ruby on Rails and the Claude 3.5 Sonnet AI model, covering setup, models, and integration.

Analyzes whether a Codenames bot can win using only card layout patterns, without understanding word meanings.

Explores how AI can revolutionize communication by bridging context gaps between people, using tools like RAG and AI assistants as proxies.

A satirical web app uses LLMs to roast GitHub profiles, highlighting a Svelte-based implementation and straightforward API.

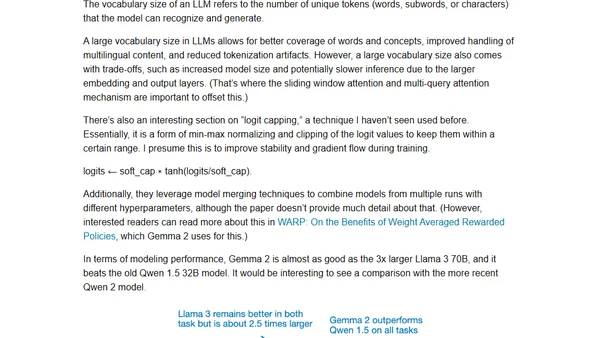

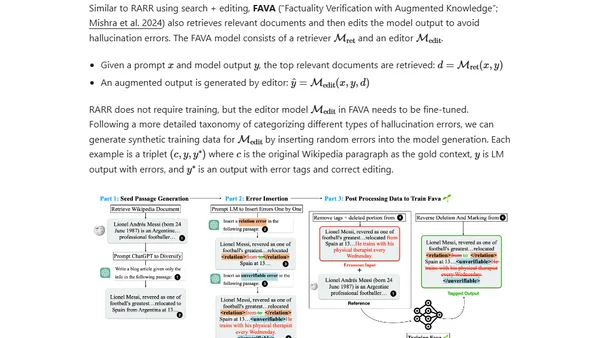

Explores recent research on instruction finetuning for LLMs, including cost-effective data generation methods and an overview of new models like Gemma 2.

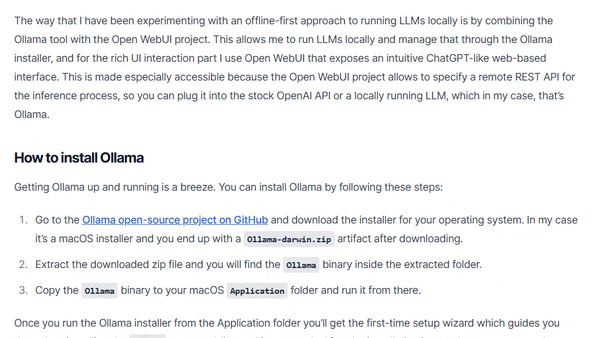

A guide on running Large Language Models (LLMs) locally for inference, covering tools like Ollama and Open WebUI for privacy and cost control.

Analyzes token consumption in Microsoft's Graph RAG for local and global queries, including setup with LiteLLM and Langfuse for monitoring.

Explores Microsoft's Graph RAG, an advanced RAG technique using knowledge graphs to answer global questions about datasets, with a hands-on setup guide.

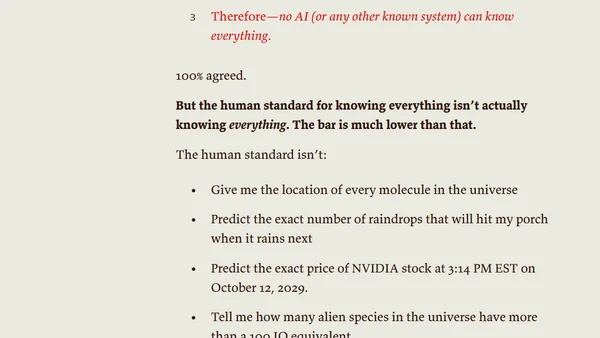

Explores the causes and types of hallucinations in large language models, focusing on extrinsic hallucinations and how training data affects factual accuracy.

A detailed comparison of Anthropic's Claude 3 and the newer Claude 3.5 Sonnet AI models, covering performance, capabilities, and benchmarks.

Reflections on delivering the closing keynote at the AI Engineer World's Fair 2024, sharing lessons from a year of building with LLMs.

Explains the limitations of Large Language Models (LLMs) and introduces Retrieval Augmented Generation (RAG) as a solution for incorporating proprietary data.

Argues for a shift from subscription-based online LLMs to offline-first Small Language Models (SLMs), citing privacy, security, and cost concerns.