Implementing A Byte Pair Encoding (BPE) Tokenizer From Scratch

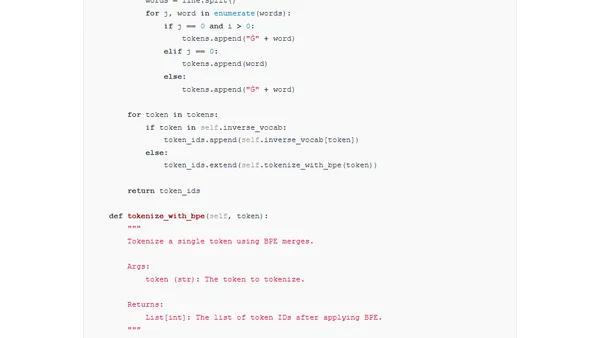

A step-by-step guide to implementing, from scratch, the Byte Pair Encoding (BPE) tokenizer used in models like GPT and Llama.

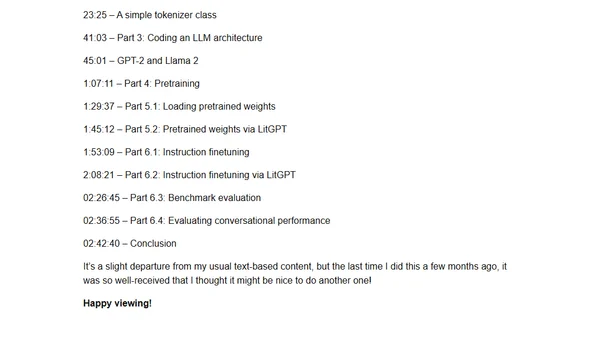

A 3-hour coding workshop teaching how to implement, train, and use Large Language Models (LLMs) from scratch with practical examples.

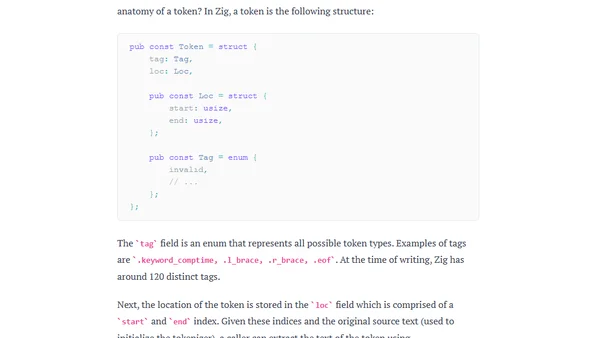

Explains how tokenization works in the Zig compiler, detailing the tokenizer's structure, usage, and key properties like being allocation-free.