How to align open LLMs in 2025 with DPO and synthetic data

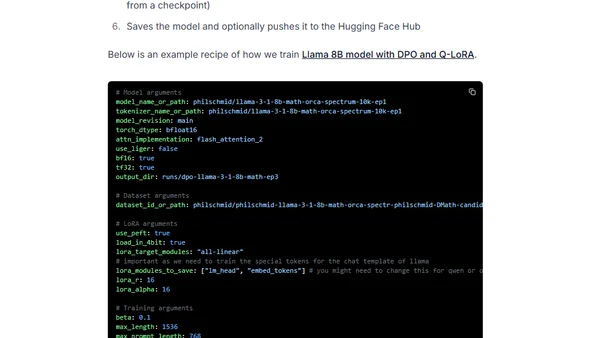

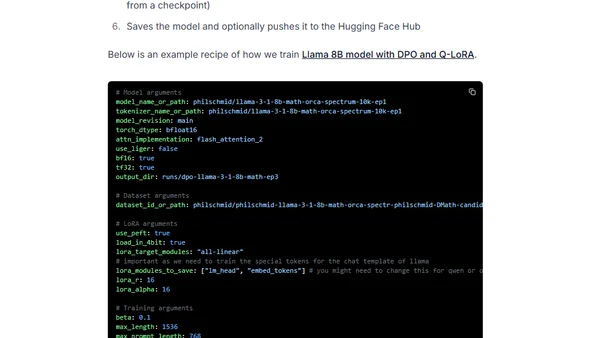

A technical guide on aligning open-source large language models (LLMs) in 2025 using Direct Preference Optimization (DPO) and synthetic data.

Analyzes the latest pre-training and post-training methodologies used in state-of-the-art LLMs like Qwen 2, Apple's models, Gemma 2, and Llama 3.1.

A technical review of the latest pre-training and post-training methodologies used in state-of-the-art large language models (LLMs) like Qwen 2 and Llama 3.1.