World Model + Next Token Prediction = Answer Prediction

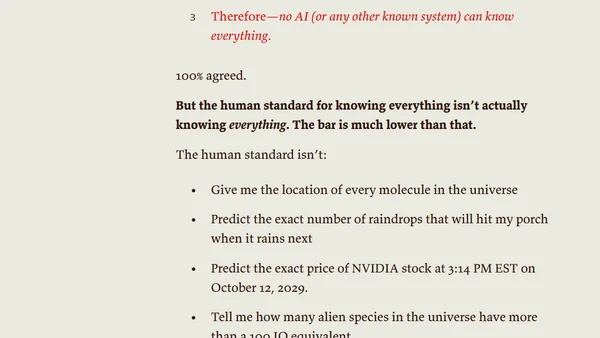

This article analyzes the common critique that LLMs are 'just next token predictors,' arguing that with a sufficiently accurate world model, predicting the next token is functionally equivalent to providing correct answers. It presents a tiered framework for understanding LLM capabilities and discusses the philosophical implications of model-based knowledge versus human understanding.
