Instruction Pretraining LLMs

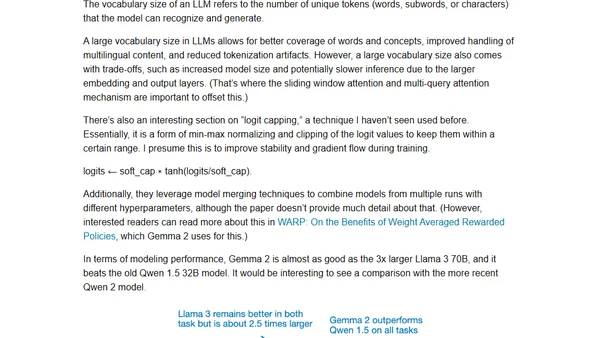

This technical article focuses on recent advancements in instruction finetuning for large language models (LLMs). It details a novel, cost-effective method for generating synthetic instruction datasets from scratch using local models, compares the results with those of major models such as Llama 3, and gives an overview of other significant research and releases from June, including Google's Gemma 2.
