Physica: The Physics World Model for Scientific AI

Introducing Physica, a physics world model that enforces physical laws to prevent errors in AI-generated simulations, moving beyond mere token fluency.

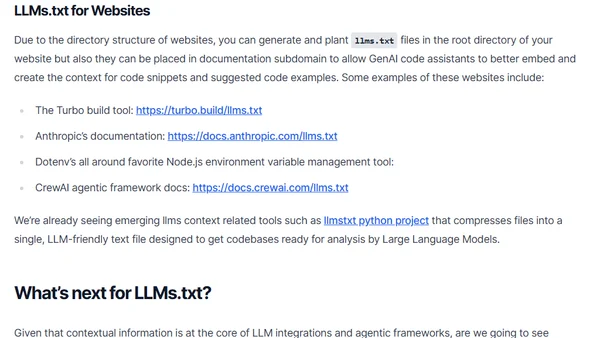

Explains the LLMs.txt file, a new standard for providing context and metadata to Large Language Models to improve accuracy and reduce hallucinations.

Introducing Tinbox, an LLM-based tool designed to translate sensitive historical documents that standard models often refuse to process.

An analysis of the ethical debate around LLMs, contrasting their use in creative fields with their potential for scientific advancement.

Reflections on the first unit of the Hugging Face Agents course, focusing on the potential and risks of code agents and their evaluation.

Learn to automate pull request descriptions in Azure DevOps using Azure OpenAI's GPT-4o to generate summaries of code changes.

A developer shares insights from Jfokus 2025, highlighting talks on Java performance optimization and AI agents in software development.

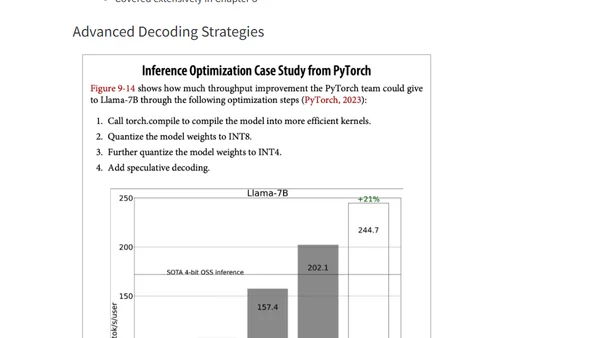

Summary of key concepts for optimizing AI inference performance, covering bottlenecks, metrics, and deployment patterns from Chip Huyen's book.

A tutorial on installing and running the Mistral AI large language model locally on a Linux system using the Ollama platform.

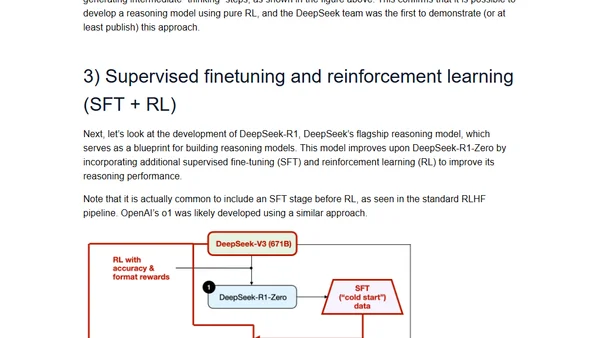

Explores four main approaches to building and enhancing reasoning capabilities in Large Language Models (LLMs) for complex tasks.

Argues that learning to code remains essential in 2025 despite advanced AI, emphasizing critical thinking, debugging, and career value.

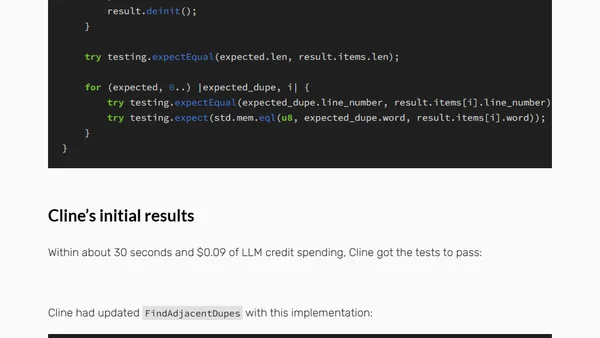

A developer's experience with the Cline AI coding assistant, exploring its capabilities for bug fixing and the implications for programmers.

A guide to the best newsletters, blogs, and resources for staying updated on the fast-moving field of Artificial Intelligence in 2025.

A tutorial on installing the DeepSeek AI model locally on a Linux machine using the Ollama platform.

A practical guide to writing effective AI prompts, debunking the complexity of prompt engineering and offering simple tips for better results.

A developer's weekly update covering work on an LLM project, custom scripting, PICO-8 experimentation, and blog theme adjustments.

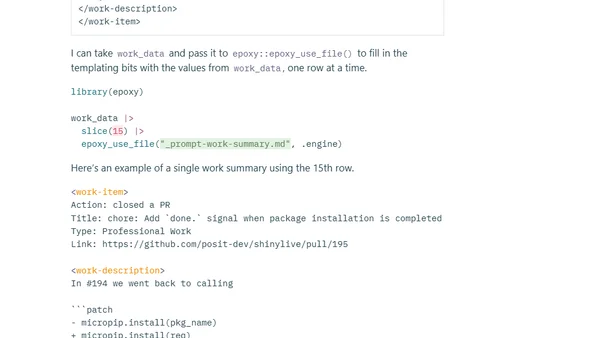

A software engineer explores using LLMs and R code to analyze their GitHub activity and summarize their weekly work, addressing the challenge of remembering completed tasks.

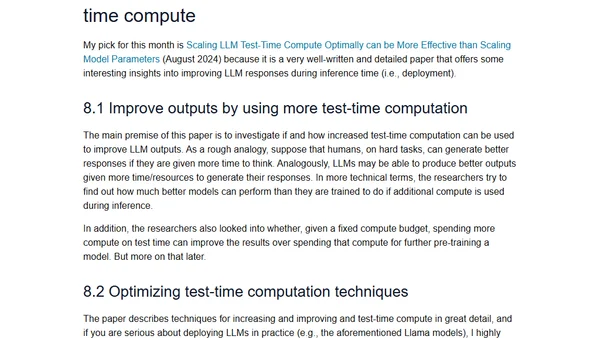

A curated list of 12 influential LLM research papers from 2024, highlighting key advancements in AI and machine learning.

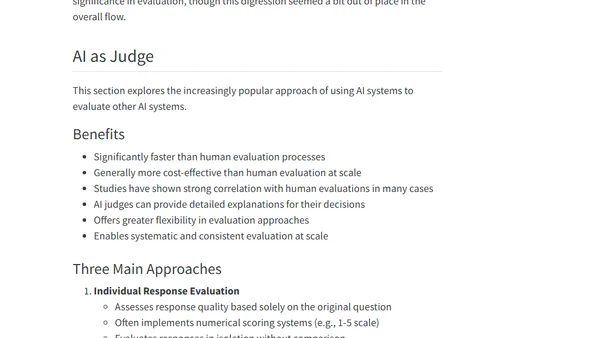

Summarizes key challenges and methods for evaluating open-ended responses from large language models and foundation models, based on Chip Huyen's book.

A summary and discussion of Chapter 1 of Chip Huyen's book, exploring the definition of AI Engineering, its distinction from ML, and the AI Engineering stack.