Install Mistral AI on Linux

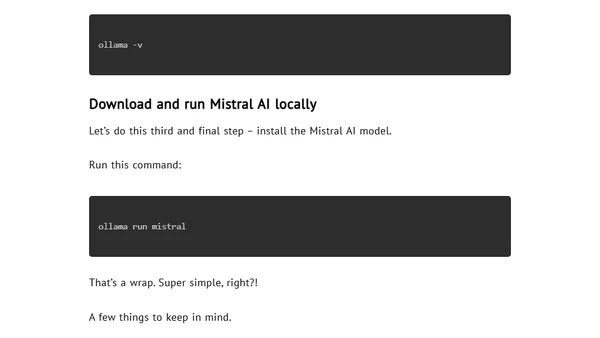

This technical tutorial provides step-by-step instructions for installing the Ollama platform on Linux, verifying the installation, and then downloading and running the Mistral AI large language model (LLM) locally. It briefly explains what LLMs are and encourages exploring the other models Ollama makes available.
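
The summary names three steps (install, verify, run) without showing the commands. The sketch below is one plausible version of that workflow. It is not quoted from the tutorial: the install script URL and the `mistral` model tag are assumptions taken from Ollama's public documentation, and the function names `install_ollama`, `verify_ollama`, and `run_mistral` are hypothetical helpers introduced only for illustration.

```shell
#!/usr/bin/env sh
# Sketch only: commands assumed from Ollama's public docs, not from the tutorial.
# Nothing runs until one of the functions below is called.

# Step 1: install Ollama with its install script.
# Assumes curl is available and the user can grant sudo when prompted.
install_ollama() {
    curl -fsSL https://ollama.com/install.sh | sh
}

# Step 2: verify the installation by checking that the binary is on PATH
# and printing its version.
verify_ollama() {
    if command -v ollama >/dev/null 2>&1; then
        ollama --version
    else
        echo "ollama not found on PATH" >&2
        return 1
    fi
}

# Step 3: download the Mistral model, then open an interactive prompt.
# "mistral" is the assumed model tag; the download is several gigabytes.
run_mistral() {
    ollama pull mistral
    ollama run mistral
}
```

After sourcing the file, `install_ollama && verify_ollama && run_mistral` would run the steps in order. Running `ollama list` afterwards shows which models are stored locally, which is a natural starting point for trying other models.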
