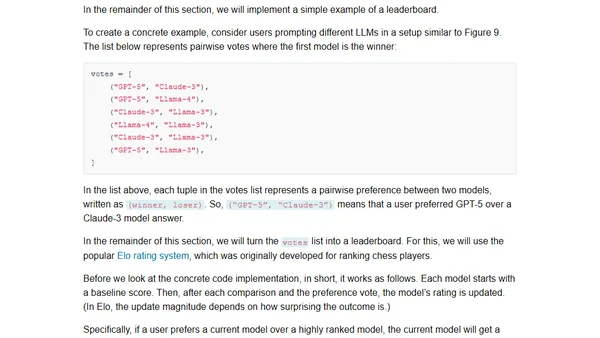

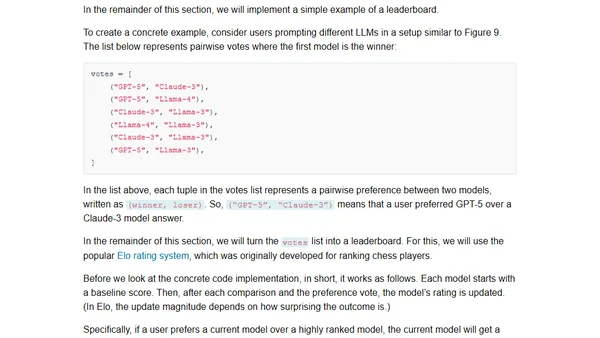

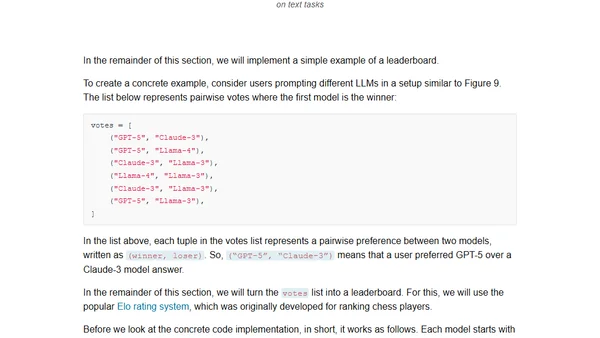

Understanding the 4 Main Approaches to LLM Evaluation (From Scratch)

Explores four main methods for evaluating Large Language Models (LLMs), including code examples for implementing each approach from scratch.

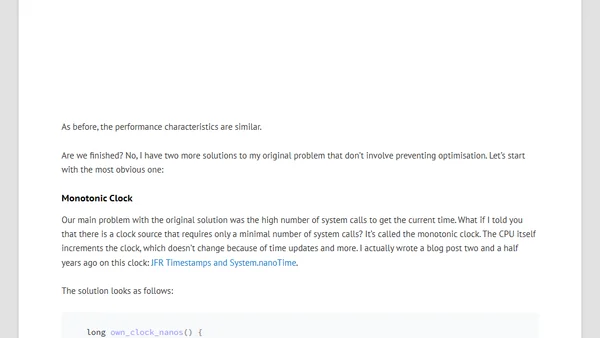

A technical exploration of seven methods to intentionally waste CPU time for precise durations, focusing on user-land implementations for profiling tests.

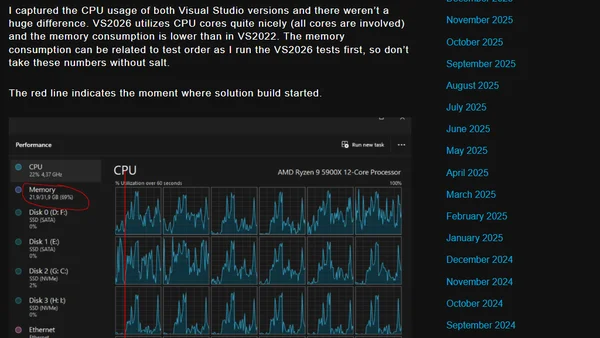

A performance comparison of Visual Studio 2026 vs. 2022, focusing on build times and resource usage for a large .NET Framework solution.

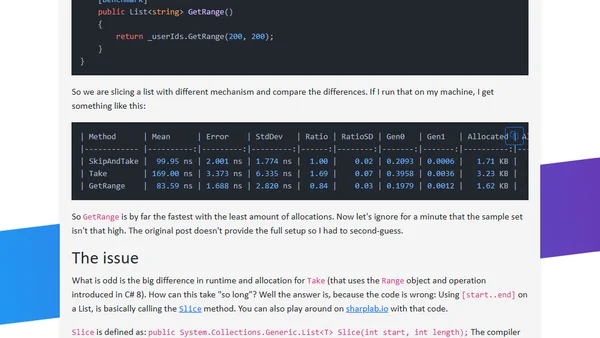

Analyzes C# performance benchmarks for slicing lists, comparing Skip/Take, Range operator, and GetRange methods, highlighting a common benchmarking error.

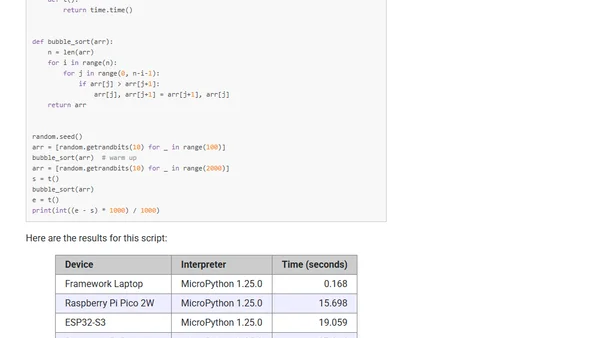

A developer benchmarks MicroPython performance on various microcontrollers, comparing them to a Raspberry Pi 4 and a laptop.

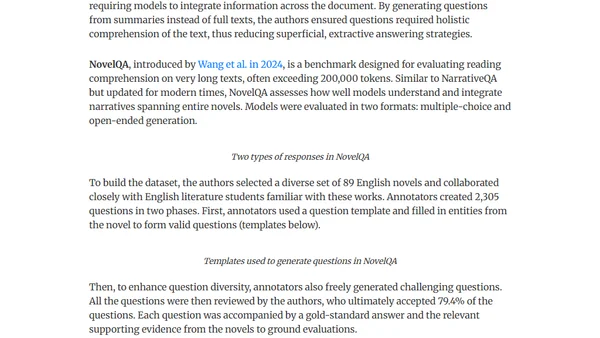

Explores challenges and methods for evaluating question-answering AI systems when processing long documents like technical manuals or novels.

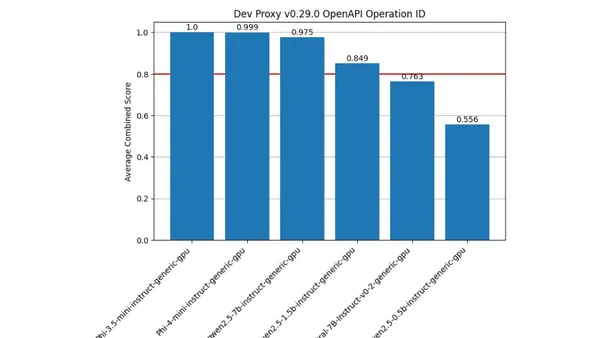

A guide to benchmarking language models using a Jupyter Notebook that supports any OpenAI-compatible API, including Ollama and Foundry Local.

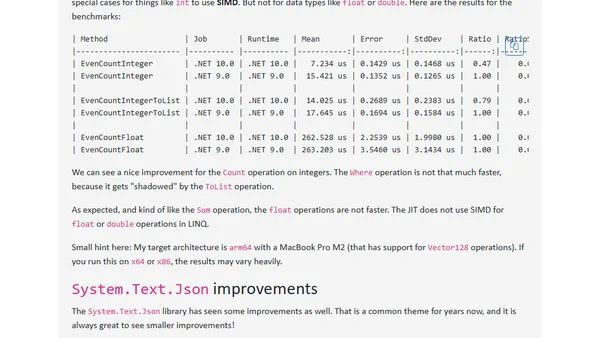

Highlights key performance improvements in .NET 10, including stack allocation optimizations and delegate escape analysis, with benchmark comparisons.

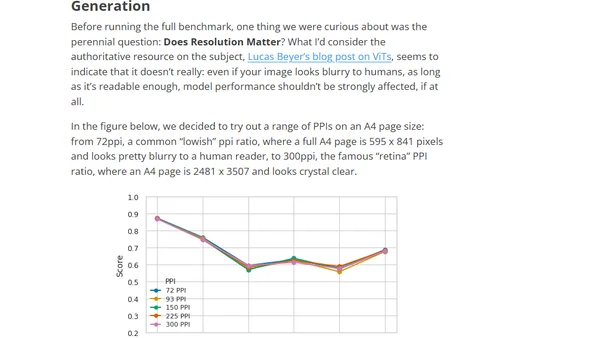

Introduces ReadBench, a benchmark for evaluating how well Vision-Language Models (VLMs) can read and extract information from images of text.

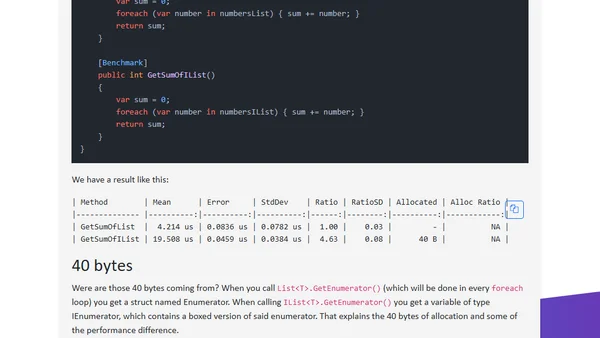

Explains why iterating over a concrete List<T> in C# is faster than iterating over an IList<T> interface, covering boxing and virtual method overhead.

Explores the concept of software benchmarks as falsifiable hypotheses for predicting real-world system performance, not just speed tests.

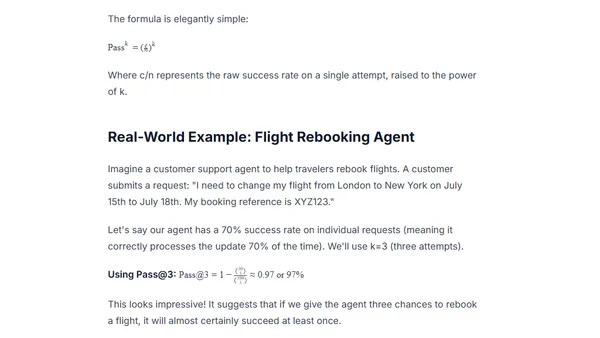

Explains the difference between Pass@k and Pass^k metrics for evaluating AI agent reliability, highlighting why consistency matters in production.

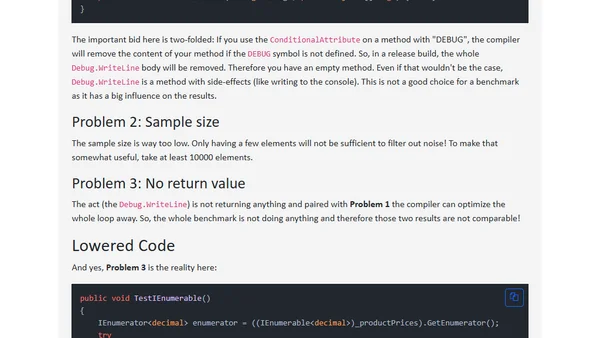

Analyzes a viral LinkedIn claim about IEnumerable vs IEnumerator performance in C#, showing that the claimed 2x speed difference comes from a flawed benchmark.

Analysis of Chip Huyen's chapter on AI system evaluation, covering evaluation-driven development, criteria, and practical implementation.

A performance and cost comparison of Azure's V3, V5, and V6 virtual machines, focusing on benchmarks and upgrade viability.

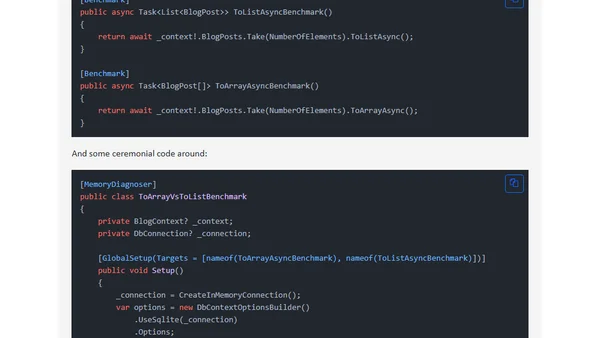

Performance comparison between ToArrayAsync and ToListAsync methods in Entity Framework 8, using benchmarks to analyze memory and speed.

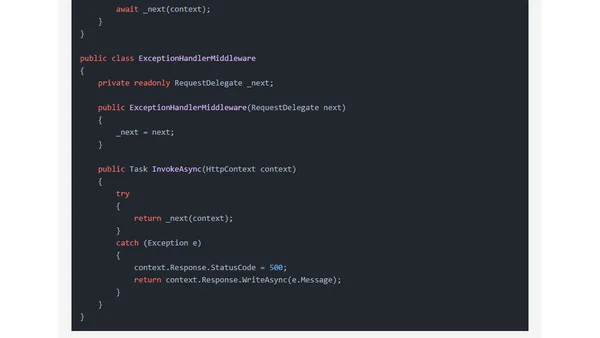

Benchmarks ASP.NET Minimal APIs against classic Controllers in .NET 8 and 9 using BenchmarkDotNet.

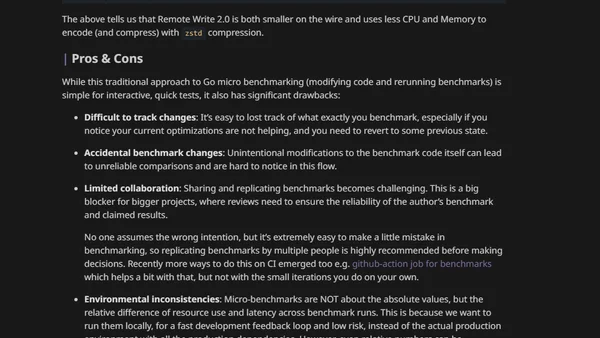

A guide to using the benchstat tool for advanced Go benchmark analysis, including projections and comparisons across code versions.

A guide to evaluating Large Language Models (LLMs) using the Evaluation Harness framework and optimized serving tools like Hugging Face TGI and vLLM.