TIL: Vision-Language Models Read Worse (or Better) Than You Think

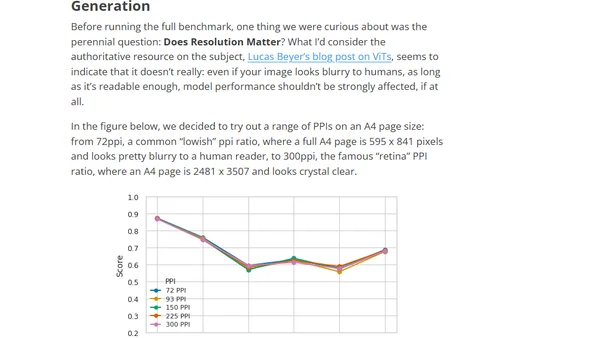

Introduces ReadBench, a benchmark for evaluating how well Vision-Language Models (VLMs) can read and extract information from images of text.

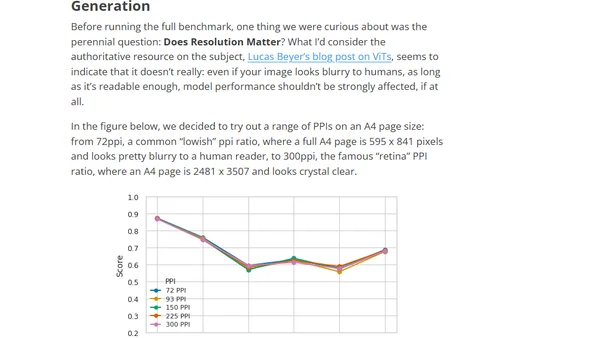

A technical guide on fine-tuning Vision-Language Models (VLMs) using Hugging Face's TRL library for custom applications like image-to-text generation.

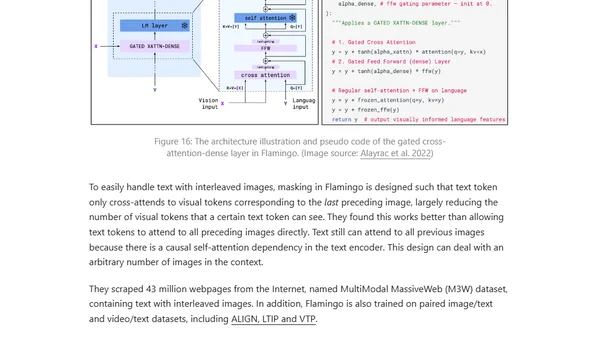

Explores methods for extending pre-trained language models to process visual information, focusing on four approaches for vision-language tasks.