Fine-tune Falcon 180B with DeepSpeed ZeRO, LoRA and Flash Attention

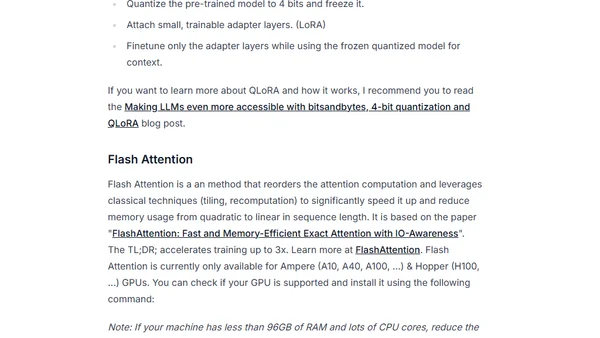

A technical guide on fine-tuning the massive Falcon 180B language model using DeepSpeed ZeRO, LoRA, and Flash Attention for efficient training.

Philipp Schmid is a Staff Engineer at Google DeepMind, building AI Developer Experience and DevRel initiatives. He specializes in LLMs, RLHF, and making advanced AI accessible to developers worldwide.

189 articles from this blog

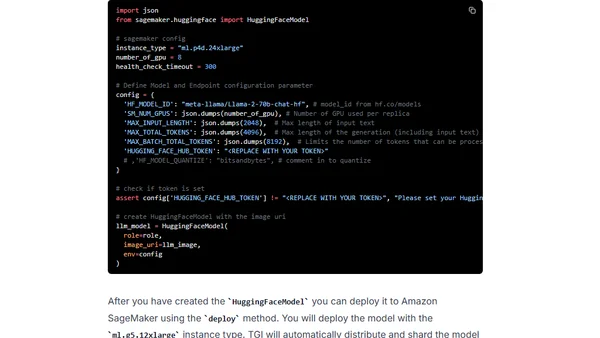

A technical guide on fine-tuning the massive Falcon 180B language model using QLoRA and Flash Attention on Amazon SageMaker.

A technical guide on deploying the Falcon 180B open-source large language model to Amazon SageMaker using the Hugging Face LLM DLC.

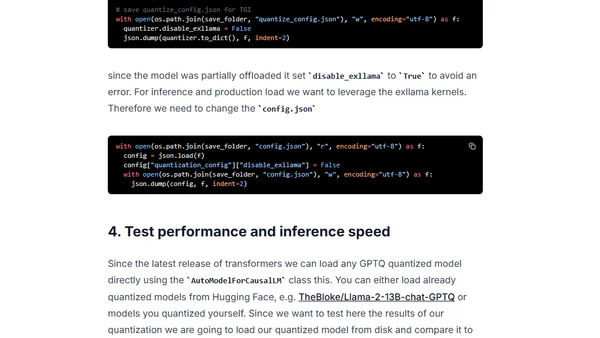

A guide to using GPTQ quantization with Hugging Face Optimum to compress open-source LLMs for efficient deployment on smaller hardware.

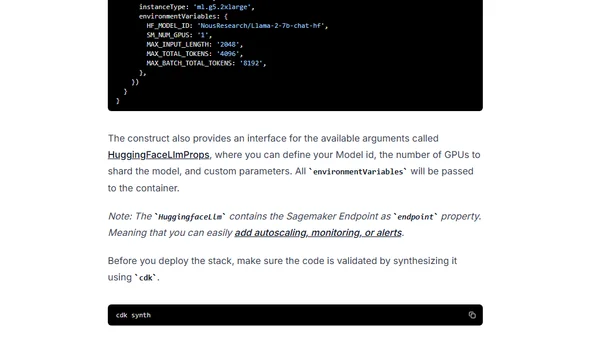

A technical guide on deploying open-source LLMs like Llama 2 using Infrastructure as Code with AWS CDK and the Hugging Face LLM construct.

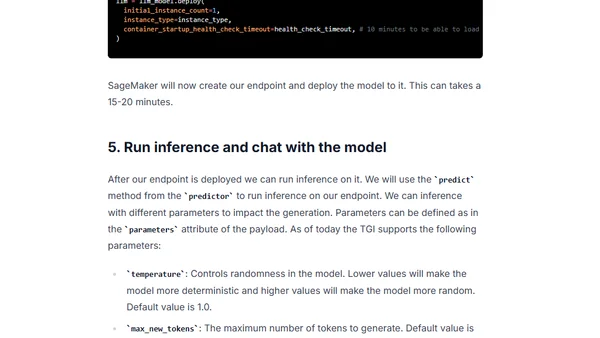

A technical guide on deploying Meta's Llama 2 large language models (7B, 13B, 70B) on Amazon SageMaker using the Hugging Face LLM DLC.

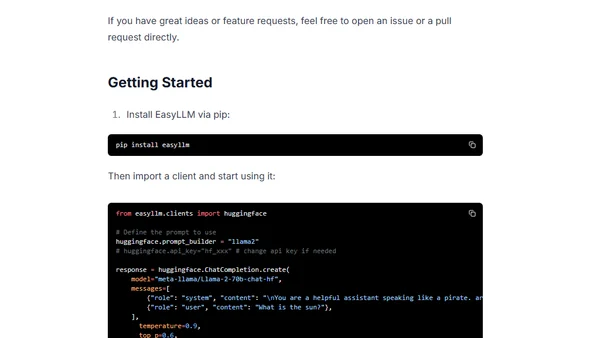

Introduces EasyLLM, an open-source Python package for streamlining work with open large language models via OpenAI-compatible clients.

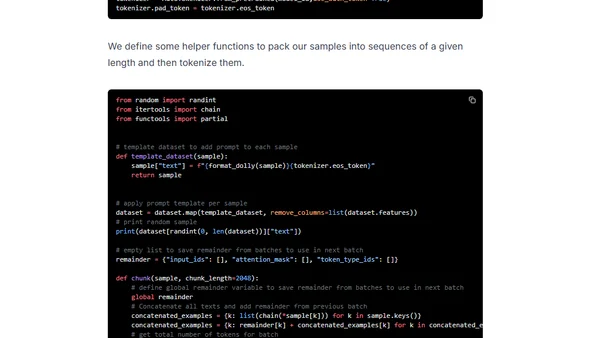

A technical guide on instruction-tuning Meta's Llama 2 model to generate instructions from inputs, enabling personalized LLM applications.

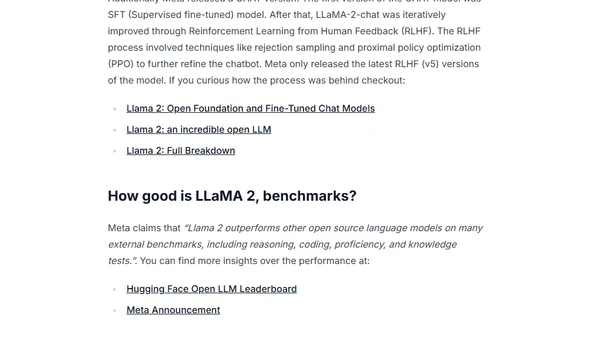

A comprehensive guide to Meta's Llama 2 open-source language model, covering resources, playgrounds, benchmarks, and technical details.

A technical guide on fine-tuning Llama 2 models (7B to 70B) using QLoRA and PEFT on Amazon SageMaker for efficient large language model adaptation.

A technical guide on using QLoRA to efficiently fine-tune the Falcon 40B large language model on Amazon SageMaker.

A guide to deploying open-source Large Language Models (LLMs) like Falcon using Hugging Face's managed Inference Endpoints service.

A tutorial on optimizing and deploying a BERT model for low-latency inference using AWS Inferentia2 accelerators and Amazon SageMaker.

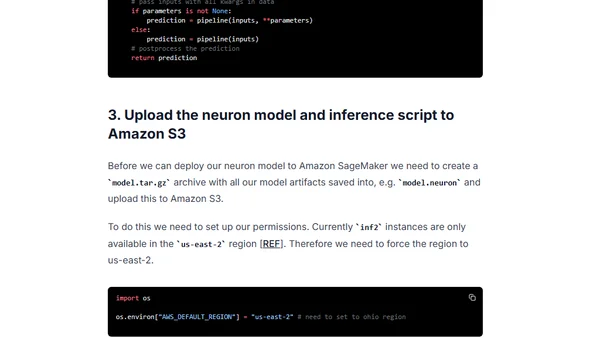

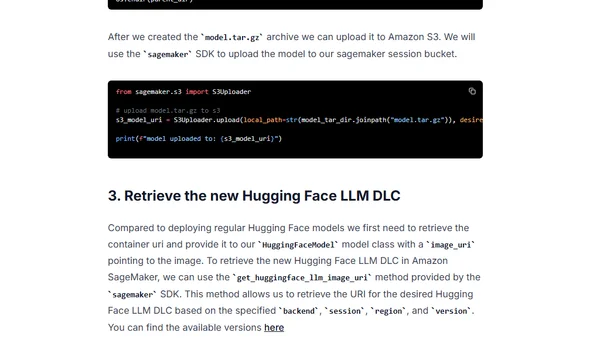

A technical guide on deploying open-source Large Language Models (LLMs) from Amazon S3 to Amazon SageMaker using Hugging Face's LLM Inference Container within a VPC.

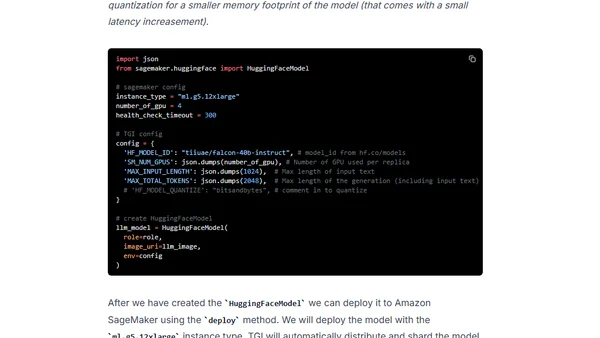

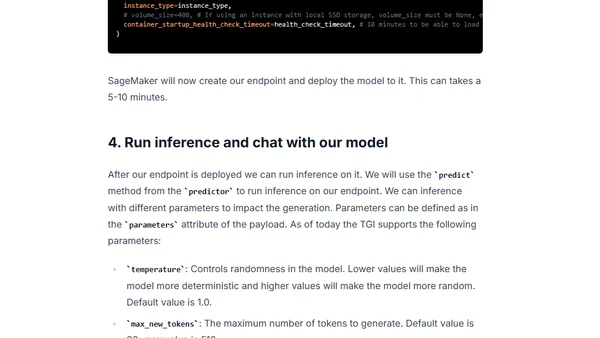

A technical guide on deploying the open-source Falcon 7B and 40B large language models to Amazon SageMaker using the Hugging Face LLM Inference Container.

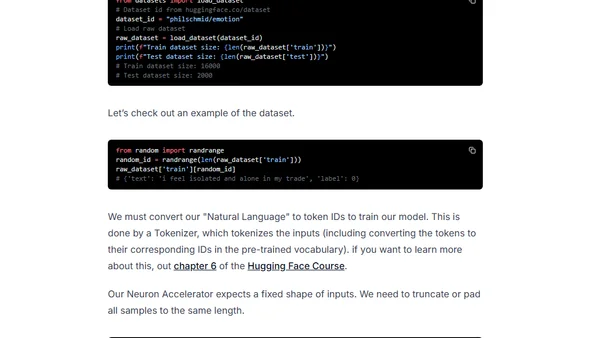

A tutorial on fine-tuning a BERT model for text classification using AWS Trainium instances and Hugging Face Transformers.

Guide to deploying open-source LLMs like BLOOM and Open Assistant to Amazon SageMaker using Hugging Face's new LLM Inference Container.

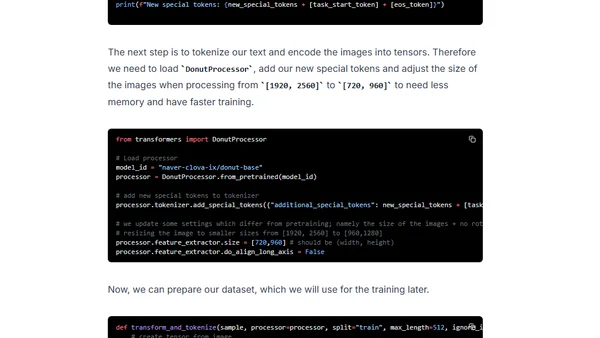

Tutorial on fine-tuning and deploying the Donut model for OCR-free document understanding using Hugging Face and Amazon SageMaker.

A technical tutorial on fine-tuning a 20B+ parameter LLM using PyTorch FSDP and Hugging Face on Amazon SageMaker's multi-GPU infrastructure.

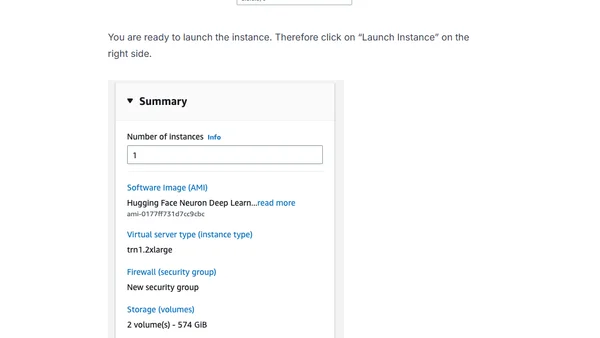

A guide to setting up an AWS Trainium instance using the Hugging Face Neuron Deep Learning AMI to fine-tune a BERT model for text classification.