Securely deploy LLMs inside VPCs with Hugging Face and Amazon SageMaker

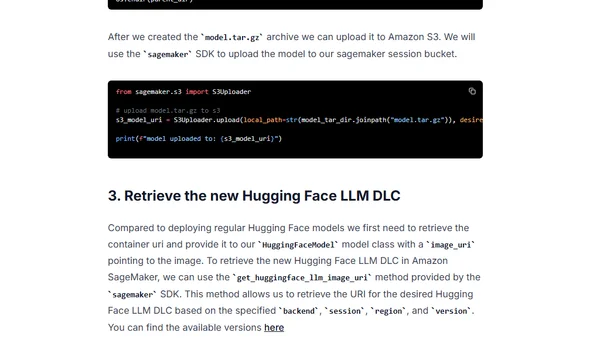

This tutorial demonstrates how to securely deploy open-source LLMs such as HuggingFaceH4/starchat-beta inside an Amazon VPC using Amazon SageMaker and the Hugging Face LLM Inference Container. It walks through setting up the environment, uploading the model to Amazon S3 so the endpoint can load it without internet access, deploying the model, running inference, and cleaning up. The approach targets scenarios where the endpoint must have no internet access.
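As a rough illustration of the configuration involved, the sketch below builds the three pieces a VPC-only deployment needs: the VPC settings, the container environment, and an inference request body. It uses only the standard library, so it runs without AWS credentials. The subnet and security group IDs are hypothetical placeholders. The environment variable names (`HF_MODEL_ID`, `SM_NUM_GPUS`, `MAX_INPUT_LENGTH`, `MAX_TOTAL_TOKENS`), the token limits, and the StarChat prompt template are assumptions based on common usage of the Hugging Face LLM Inference Container and starchat-beta, so check them against the container version you deploy.

```python
import json

# Hypothetical placeholder IDs: replace with the subnets and security group of your own VPC.
SUBNET_IDS = ["subnet-0123456789abcdef0"]
SECURITY_GROUP_IDS = ["sg-0123456789abcdef0"]


def build_vpc_config(subnets, security_groups):
    """Return a VPC config dict using the "Subnets"/"SecurityGroupIds" keys SageMaker models accept."""
    if not subnets or not security_groups:
        raise ValueError("at least one subnet and one security group are required")
    return {"Subnets": list(subnets), "SecurityGroupIds": list(security_groups)}


def build_llm_env(num_gpus, max_input_length=1024, max_total_tokens=2048):
    """Environment for the Hugging Face LLM Inference Container (assumed variable names).

    HF_MODEL_ID points at /opt/ml/model, where SageMaker places the model artifacts
    loaded from S3, so the container does not need to download weights from the Hub.
    Values are JSON-encoded strings because container environment values must be strings.
    """
    if max_total_tokens <= max_input_length:
        raise ValueError("max_total_tokens must be larger than max_input_length")
    return {
        "HF_MODEL_ID": "/opt/ml/model",
        "SM_NUM_GPUS": json.dumps(num_gpus),
        "MAX_INPUT_LENGTH": json.dumps(max_input_length),
        "MAX_TOTAL_TOKENS": json.dumps(max_total_tokens),
    }


def build_payload(user_message, max_new_tokens=256, temperature=0.2):
    """Build an {"inputs": ..., "parameters": ...} request body.

    The chat template and the "<|end|>" stop sequence are assumptions about
    starchat-beta's prompt format.
    """
    prompt = f"<|system|>\n<|end|>\n<|user|>\n{user_message}<|end|>\n<|assistant|>"
    return {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": max_new_tokens,
            "temperature": temperature,
            "do_sample": True,
            "stop": ["<|end|>"],
        },
    }
```

These dicts would then be handed to the SageMaker Python SDK, for example as the `env=` and `vpc_config=` arguments when constructing a `HuggingFaceModel` from the S3 `model_data` URI (optionally with `enable_network_isolation=True`), with the payload passed to the predictor's `predict()` call. Confirm the exact arguments against the SDK version you use.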
