Fine-tune Falcon 180B with QLoRA and Flash Attention on Amazon SageMaker

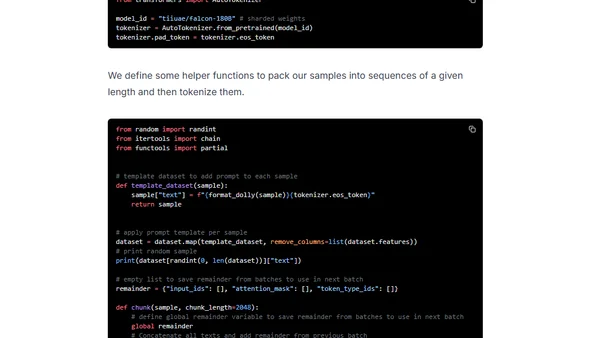

This article provides a detailed tutorial on efficiently fine-tuning the Falcon 180B open-source LLM. It covers using QLoRA for parameter-efficient fine-tuning on a single GPU, integrating Flash Attention, and implementing the process on Amazon SageMaker with Hugging Face tools and the Dolly dataset.
