Connect Dremio Software to Dremio Cloud: Hybrid Federation Across Deployments

Guide on connecting self-managed Dremio Software to Dremio Cloud for hybrid data federation, enabling queries across on-prem and cloud data sources.

Alex Merced — Developer and technical writer sharing in-depth insights on data engineering, Apache Iceberg, data lakehouse architectures, Python tooling, and modern analytics platforms, with a strong focus on practical, hands-on learning.

388 articles from this blog

Introduces Dremio's built-in Open Catalog for Apache Iceberg, offering a zero-configuration, production-ready lakehouse solution with automated management.

Guide on connecting any Apache Iceberg REST Catalog to Dremio Cloud for universal lakehouse data access and management.

Guide on connecting Databricks Unity Catalog to Dremio Cloud to query Delta Lake tables with federation, AI analytics, and performance acceleration.

Guide on connecting Snowflake Open Catalog to Dremio Cloud for multi-engine Apache Iceberg analytics, federation, and cost optimization.

A comprehensive guide to data modeling, explaining its meaning, three abstraction levels, techniques, and importance for modern data systems.

Explains dimensional modeling for analytics, covering facts, dimensions, grains, and table design for query performance.

Explores how data modeling principles adapt for modern lakehouse architectures using open formats like Apache Iceberg and the Medallion pattern.

Compares Star Schema and Snowflake Schema data models, explaining their structures, trade-offs, and when to use each for optimal data warehousing.

Explains the three levels of data modeling (conceptual, logical, physical) and their importance in database design.

A guide to the core principles and systems thinking required for data engineering, beyond just learning specific tools.

A practical, tool-agnostic checklist of essential best practices for designing, building, and maintaining reliable data engineering pipelines.

Explains the importance of pipeline observability for data health, covering metrics, logs, and lineage to detect issues beyond simple execution monitoring.

Explains the importance of automated testing for data pipelines, covering schema validation, data quality checks, and regression testing.

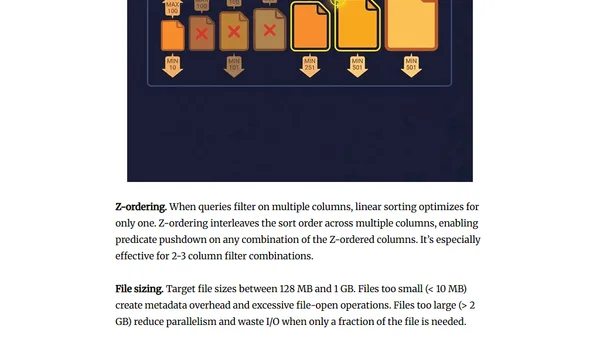

Explains data partitioning and organization strategies to drastically improve query performance in analytical databases.

A guide to choosing between batch and streaming data processing models based on actual freshness requirements and cost.

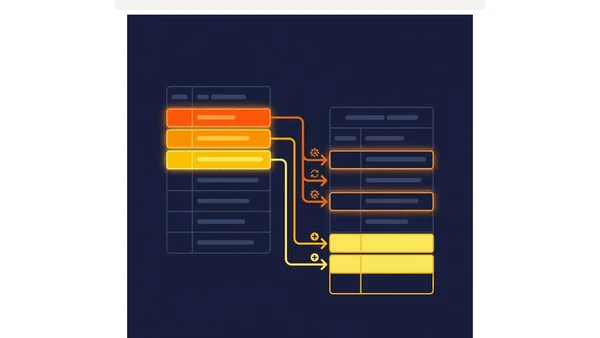

Explains how to safely evolve data schemas using API-like discipline to prevent breaking downstream systems like dashboards and ML pipelines.

Explains idempotent data pipelines, patterns like partition overwrite and MERGE, and how to prevent duplicate data during retries.

Argues that data quality must be enforced at the pipeline's ingestion point, not patched in dashboards, to ensure consistent, reliable data.

A guide to designing reliable, fault-tolerant data pipelines with architectural principles like idempotency, observability, and DAG-based workflows.