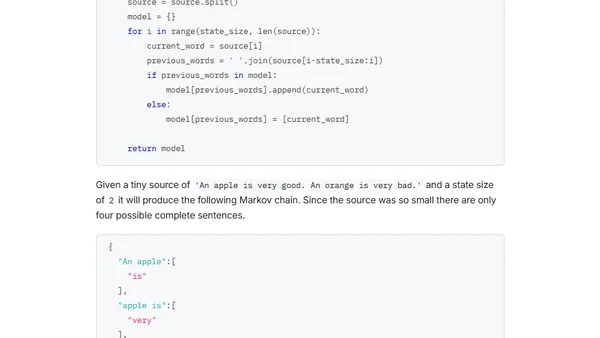

Generating Text With Markov Chains

A technical guide to generating realistic text using Markov chains, from basic weather simulation to building a text generator from scratch in Python.
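
The word-level version of this idea can be sketched as below. It assumes a first-order chain, where each next word depends only on the current word, and plain whitespace tokenization. The function names `build_chain` and `generate` are hypothetical and not taken from the guide. Storing every observed successor, duplicates included, makes `random.choice` sample in proportion to how often each transition occurred.

```python
import random
from collections import defaultdict


def build_chain(text):
    """Map each word to the list of words observed immediately after it."""
    words = text.split()
    chain = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        chain[current].append(nxt)
    return chain


def generate(chain, start, length, rng=random):
    """Walk the chain from `start`, sampling successors by observed frequency."""
    output = [start]
    current = start
    for _ in range(length - 1):
        successors = chain.get(current)  # .get does not insert into the defaultdict
        if not successors:  # dead end: this word never had a successor in the corpus
            break
        current = rng.choice(successors)
        output.append(current)
    return " ".join(output)


corpus = "the cat sat on the mat the cat ran"
chain = build_chain(corpus)
print(generate(chain, "the", 6, random.Random(0)))
```

A higher-order chain would key the dictionary on tuples of the previous n words instead of a single word, trading variety for more coherent output.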

An analysis of GPT-3's capabilities, its potential for misuse in generating fake news and spam, and its exclusive licensing to Microsoft.

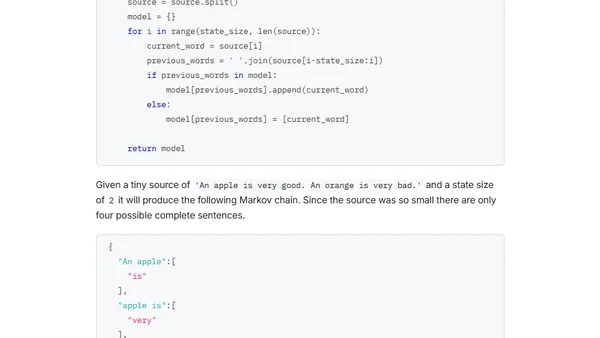

A tutorial on fine-tuning a German GPT-2 language model for text generation using Hugging Face's Transformers library and a dataset of recipes.

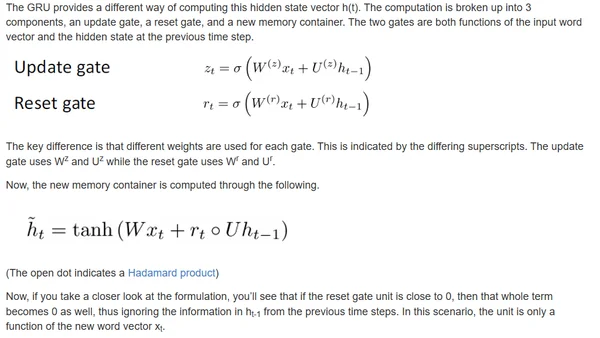

A chronological survey of key NLP models and techniques for supervised learning, from early RNNs to modern transformers like BERT and T5.

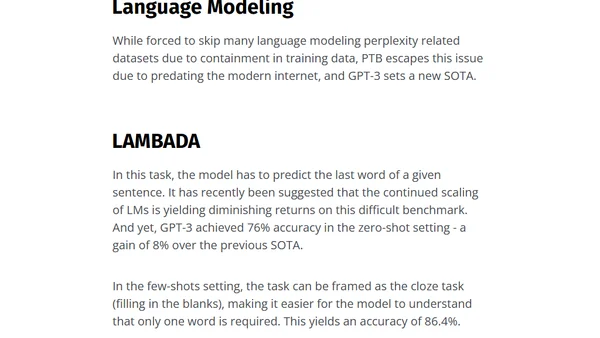

An analysis of OpenAI's GPT-3 language model, focusing on its 175B parameters, in-context learning capabilities, and performance on NLP tasks.

A summary of a meetup talk on advanced recommender systems, exploring techniques beyond baselines using graph and NLP methods.

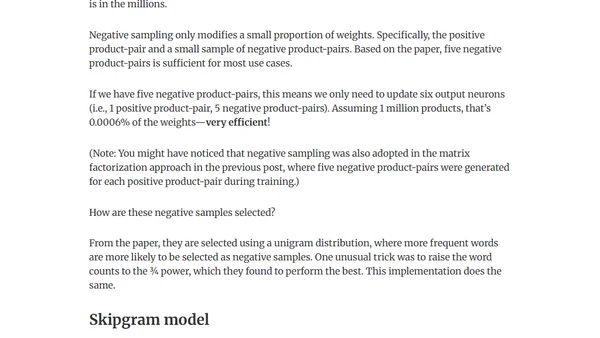

Explores improving recommender systems using graph-based methods and NLP techniques like word2vec and DeepWalk in PyTorch.
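
DeepWalk treats truncated random walks over a graph as "sentences" and feeds them to a word2vec-style skip-gram model, so nodes that appear in similar walks end up with similar embeddings. A minimal sketch of the walk-generation step follows, in plain Python rather than the PyTorch used in the article; the toy item graph and the `random_walks` function are hypothetical. Start nodes are reshuffled on every pass, as in the original DeepWalk algorithm.

```python
import random


def random_walks(graph, walks_per_node, walk_length, seed=0):
    """Generate uniform random walks; each walk is a list of node ids (a 'sentence')."""
    rng = random.Random(seed)
    walks = []
    for _ in range(walks_per_node):
        nodes = list(graph)
        rng.shuffle(nodes)  # visit start nodes in a fresh random order each pass
        for start in nodes:
            walk = [start]
            while len(walk) < walk_length:
                neighbors = graph[walk[-1]]
                if not neighbors:  # isolated node: stop this walk early
                    break
                walk.append(rng.choice(neighbors))
            walks.append(walk)
    return walks


# Hypothetical item graph: an edge joins items that were bought together.
graph = {
    "a": ["b", "c"],
    "b": ["a", "c"],
    "c": ["a", "b", "d"],
    "d": ["c"],
}
walks = random_walks(graph, walks_per_node=2, walk_length=5)
```

The resulting walks would then be passed to any skip-gram trainer as if they were tokenized sentences.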

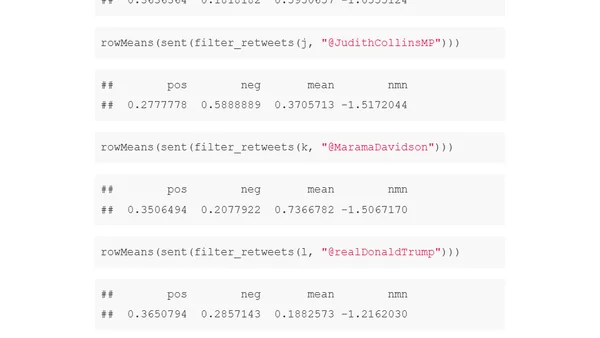

Analyzing tweet sentiment towards public figures using R, word embeddings, and logistic regression models to measure online negativity.

A developer explores investigative journalism, drawing parallels between source control diffs and uncovering truth in legal documents and online comments.

A tutorial on text classification using the BBC news dataset and the PHP-ML machine learning library, covering data loading and preprocessing.

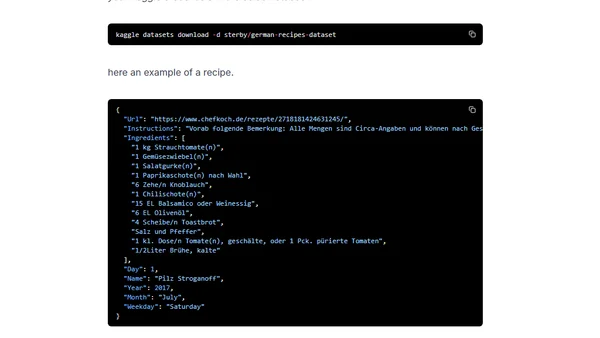

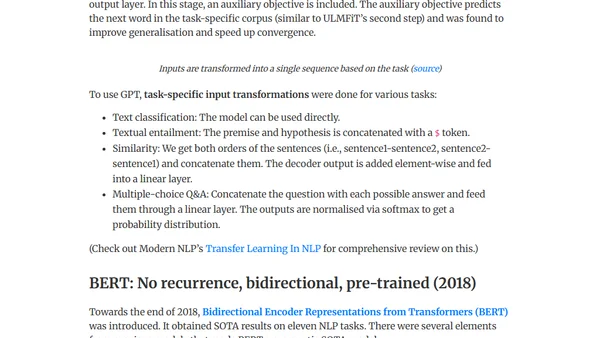

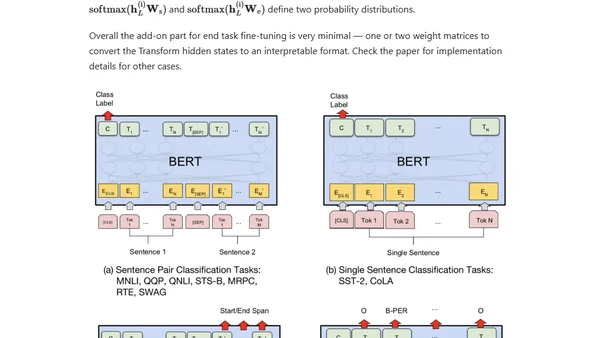

A technical overview of the evolution of large-scale pre-trained language models like BERT, GPT, and T5, focusing on contextual embeddings and transfer learning in NLP.

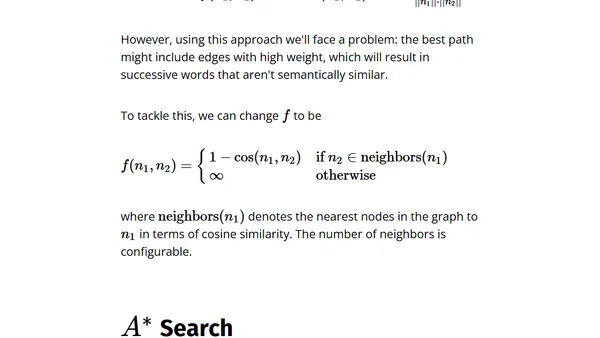

Explores word morphing using word2vec embeddings and A* search to find semantic paths between words, like 'tooth' to 'light'.
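
The search can be sketched as A* over a nearest-neighbor graph of word vectors. This sketch makes several assumptions not taken from the article: each word links to its `k` nearest neighbors, edge cost and heuristic are both Euclidean distance (which keeps the heuristic admissible via the triangle inequality), and the 2-D vectors are hand-made toys standing in for real word2vec embeddings. The names `neighbors` and `morph` are hypothetical.

```python
import heapq
import math


def dist(u, v):
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def neighbors(word, vectors, k):
    """The k nearest other words in embedding space (hypothetical graph construction)."""
    others = [w for w in vectors if w != word]
    others.sort(key=lambda w: dist(vectors[word], vectors[w]))
    return others[:k]


def morph(source, target, vectors, k=2):
    """A* from source to target; returns the word path, or None if unreachable."""
    goal = vectors[target]
    frontier = [(dist(vectors[source], goal), 0.0, source, [source])]
    best_cost = {source: 0.0}
    while frontier:
        _, cost, word, path = heapq.heappop(frontier)
        if word == target:
            return path
        if cost > best_cost.get(word, math.inf):
            continue  # stale queue entry superseded by a cheaper route
        for nxt in neighbors(word, vectors, k):
            new_cost = cost + dist(vectors[word], vectors[nxt])
            if new_cost < best_cost.get(nxt, math.inf):
                best_cost[nxt] = new_cost
                priority = new_cost + dist(vectors[nxt], goal)  # g + h
                heapq.heappush(frontier, (priority, new_cost, nxt, path + [nxt]))
    return None  # target not reachable in the k-NN graph


# Toy 2-D "embeddings" (hypothetical values, for illustration only).
vectors = {
    "tooth": (0.0, 0.0),
    "gum": (0.5, -1.0),
    "smile": (1.0, 0.2),
    "bright": (2.0, 0.15),
    "light": (3.0, 0.0),
    "dark": (3.0, 3.0),
}
path = morph("tooth", "light", vectors)
```

With these toy vectors the search steps through intermediate words rather than jumping straight to the target, because `tooth` is only linked to its two nearest neighbors.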

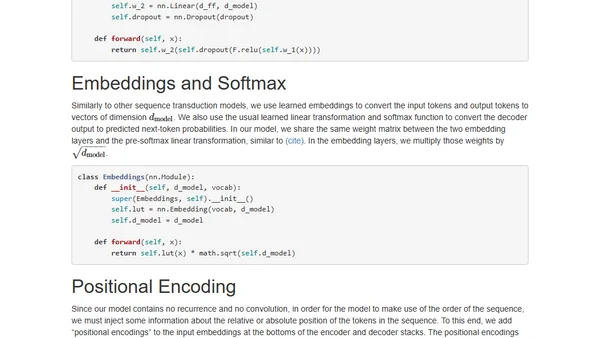

An annotated, line-by-line implementation of the Transformer architecture from 'Attention is All You Need' in PyTorch.
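
The core operation defined in "Attention is All You Need" is scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V. A minimal pure-Python sketch of that formula follows, using lists of row vectors instead of PyTorch tensors; it deliberately omits masking, dropout, and the multi-head projections that a full implementation adds. The function names are illustrative.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Compute softmax(Q K^T / sqrt(d_k)) V, one query row at a time."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Weighted average of the value rows.
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out
```

When all keys score equally, each output row is the plain average of the value rows; when one key dominates, the output approaches that key's value row.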

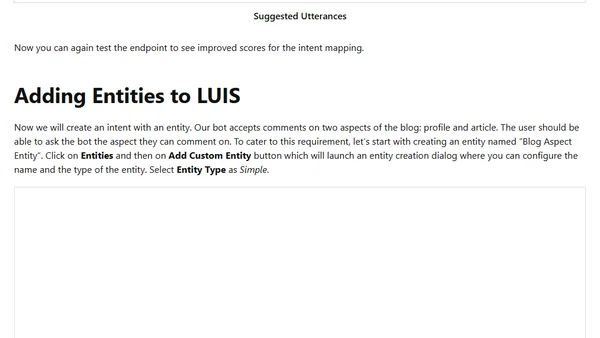

Part 4 of a series on the Microsoft Bot Framework, focusing on adding natural language processing using LUIS (intents, entities, utterances).

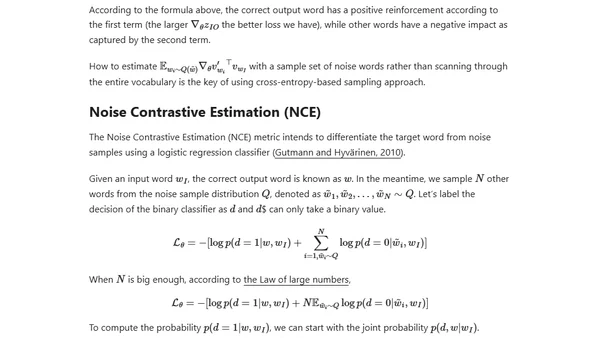

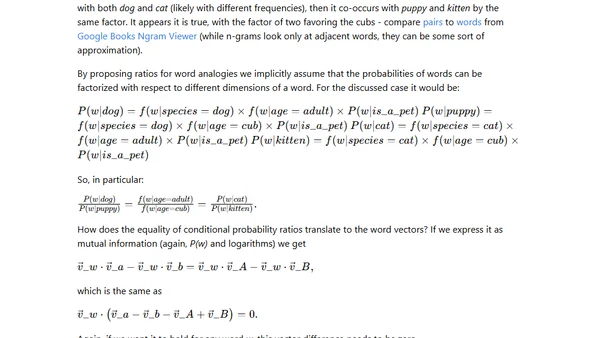

Explains word embeddings, comparing count-based and context-based methods like skip-gram for converting words into dense numeric vectors.
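
Both families start from the same context window. Skip-gram is trained on individual (center, context) pairs, learning to predict context words from the center word, while count-based methods aggregate those same co-occurrences into a matrix that is later factorized. The sketch below shows only that shared data-preparation step; the embedding training itself (the neural network, negative sampling, or matrix factorization) is omitted, and the function names are hypothetical.

```python
from collections import Counter


def skipgram_pairs(tokens, window):
    """(center, context) training pairs within +/- `window` positions."""
    pairs = []
    for i, center in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # a word is not its own context
                pairs.append((center, tokens[j]))
    return pairs


def cooccurrence_counts(tokens, window):
    """Count-based view: how often each (word, context) pair occurs in the window."""
    return Counter(skipgram_pairs(tokens, window))


tokens = "the quick brown fox".split()
pairs = skipgram_pairs(tokens, 1)
```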

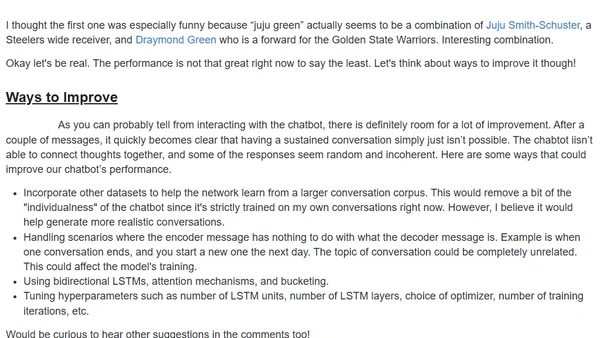

A developer explores using deep learning and sequence-to-sequence models to train a chatbot on personal social media data to mimic their conversational style.

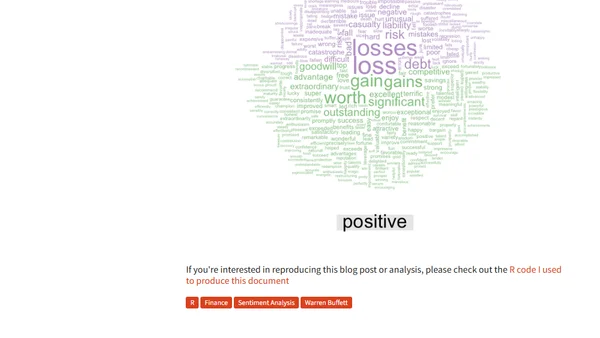

A technical analysis using sentiment analysis on Warren Buffett's shareholder letters from 1977 to 2016 to identify trends in tone and market influence.

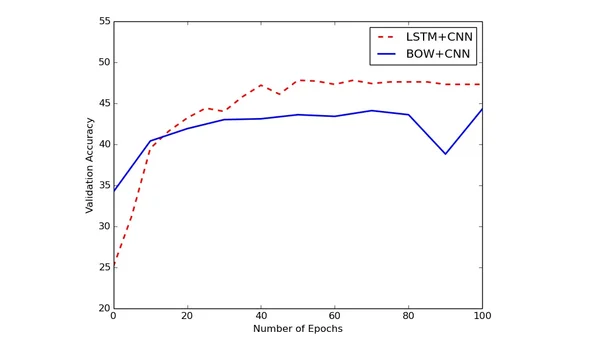

A deep dive into applying deep learning techniques to Natural Language Processing (NLP), covering word vectors and research paper summaries.

Explains the word2vec algorithm and the famous 'king - man + woman = queen' analogy using vector arithmetic and word co-occurrences.
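
The analogy is usually solved by computing king - man + woman and returning the vocabulary word whose vector has the highest cosine similarity to the result, excluding the three query words. The sketch below assumes hand-made 3-D vectors (hypothetical values, loosely "royalty, male, female"); real word2vec vectors have hundreds of dimensions learned from co-occurrences, and the `analogy` function is illustrative.

```python
import math


def cosine(u, v):
    """Cosine similarity; assumes neither vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def analogy(a, b, c, vectors):
    """Solve 'a is to b as c is to ?' via the vector b - a + c."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    candidates = (w for w in vectors if w not in {a, b, c})
    return max(candidates, key=lambda w: cosine(target, vectors[w]))


# Hypothetical toy vectors, not real embeddings.
vectors = {
    "king": (0.9, 0.8, 0.1),
    "queen": (0.9, 0.1, 0.8),
    "man": (0.1, 0.9, 0.1),
    "woman": (0.1, 0.1, 0.9),
    "apple": (0.0, 0.1, 0.1),
}
answer = analogy("man", "king", "woman", vectors)  # king - man + woman
```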

Explores Visual Question Answering (VQA) as an alternative Turing Test, detailing neural network approaches using Python and Keras.