Fine-tune a non-English GPT-2 Model with Huggingface

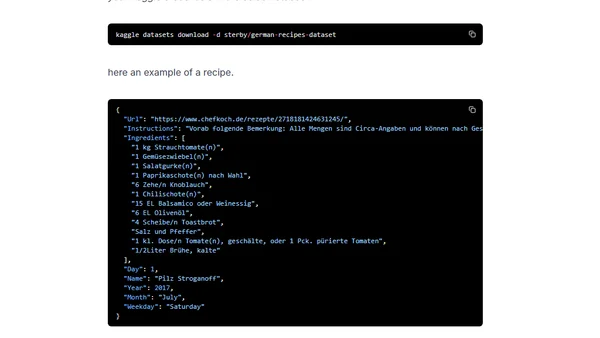

This technical tutorial provides a step-by-step guide to fine-tuning a non-English (German) GPT-2 model using the Huggingface Transformers library. It covers loading a dataset of German recipes, preparing the data, using the Trainer class for training, and testing the resulting model for text generation, all within a Google Colab environment.
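The workflow summarized above can be sketched roughly as follows. This is a minimal illustration, not the tutorial's own code: the helper names (`split_recipes`, `write_lines`, `fine_tune`), the one-recipe-per-line file layout, the 128-token truncation, and the hyperparameters are all assumptions chosen for brevity. The checkpoint name is left as a parameter because the summary does not say which German GPT-2 model is used.

```python
import random
from pathlib import Path


def split_recipes(recipes, test_fraction=0.15, seed=42):
    """Shuffle recipe texts and split them into (train, test) lists."""
    shuffled = list(recipes)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def write_lines(texts, path):
    """Write one recipe per line (assumed layout); return the line count."""
    # Collapse internal whitespace so each recipe stays on a single line.
    lines = [" ".join(t.split()) for t in texts if t.strip()]
    Path(path).write_text("\n".join(lines) + "\n", encoding="utf-8")
    return len(lines)


def fine_tune(model_name, train_path, test_path, output_dir="./gpt2-recipes"):
    """Fine-tune a causal LM on two text files; return a generation pipeline."""
    # Imported lazily so the helpers above work without these libraries.
    from datasets import load_dataset
    from transformers import (AutoModelForCausalLM, AutoTokenizer,
                              DataCollatorForLanguageModeling, Trainer,
                              TrainingArguments, pipeline)

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    if tokenizer.pad_token is None:
        # GPT-2 tokenizers ship without a pad token; reuse end-of-sequence.
        tokenizer.pad_token = tokenizer.eos_token
    model = AutoModelForCausalLM.from_pretrained(model_name)

    raw = load_dataset("text",
                       data_files={"train": train_path, "test": test_path})
    tokenized = raw.map(
        lambda batch: tokenizer(batch["text"], truncation=True, max_length=128),
        batched=True, remove_columns=["text"])

    trainer = Trainer(
        model=model,
        # Illustrative hyperparameters, not values taken from the tutorial.
        args=TrainingArguments(output_dir=output_dir, num_train_epochs=3,
                               per_device_train_batch_size=8),
        # mlm=False selects the causal (next-token) objective used by GPT-2.
        data_collator=DataCollatorForLanguageModeling(tokenizer=tokenizer,
                                                      mlm=False),
        train_dataset=tokenized["train"],
        eval_dataset=tokenized["test"],
    )
    trainer.train()
    trainer.save_model(output_dir)
    return pipeline("text-generation", model=model, tokenizer=tokenizer)
```

In a Colab notebook, one would typically call `split_recipes` on the loaded recipe texts, write both halves with `write_lines`, and then pass the two file paths plus a German GPT-2 checkpoint name from the Hub to `fine_tune`. The returned pipeline can be called with a short German prompt to inspect the generated recipe text.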
