TabICL: Pretraining the best tabular learner

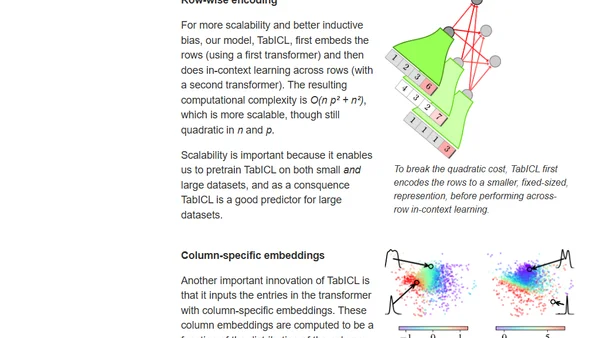

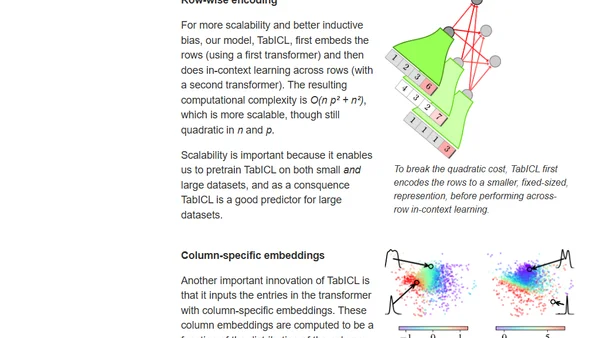

Introducing TabICL, a state-of-the-art tabular foundation model that uses in-context learning and an improved architecture for fast, scalable prediction on tabular data.

Explores how Large Language Models perform implicit Bayesian inference through in-context learning, connecting exchangeable sequence models to prompt-based learning.

An analysis of OpenAI's GPT-3 language model, focusing on its 175B parameters, in-context learning capabilities, and performance on NLP tasks.