Building LLMs from the Ground Up: A 3-hour Coding Workshop

A 3-hour coding workshop video covering the implementation, training, and use of Large Language Models (LLMs) from scratch.

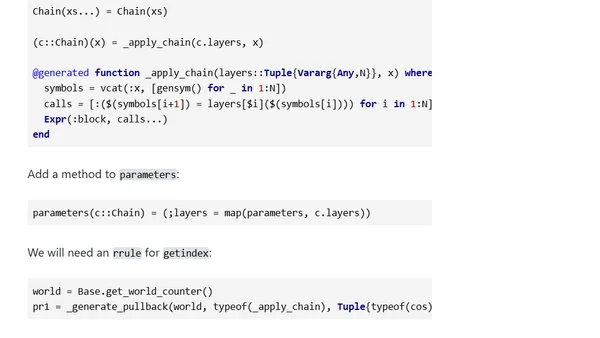

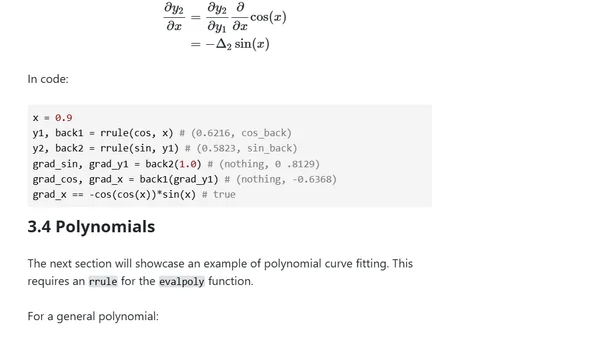

Part 5 of a series on building an automatic differentiation package in Julia, demonstrating its use to create and train a multi-layer perceptron on the moons dataset.

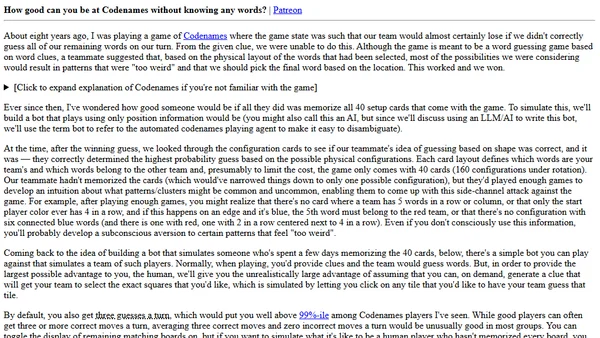

Analyzes whether a Codenames bot can win using only card-layout patterns, without understanding what the words mean.

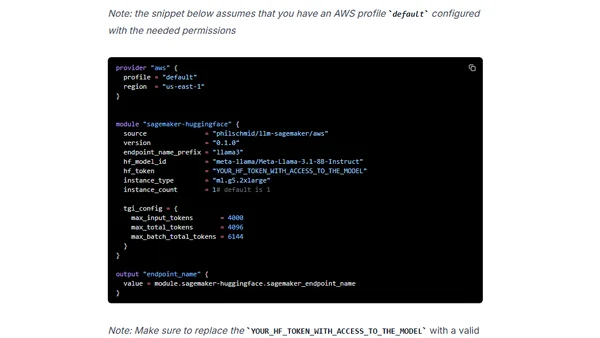

A guide to deploying open-source LLMs like Llama 3 to Amazon SageMaker using Terraform for Infrastructure as Code.

A technical article exploring deep neural networks by comparing classic computational methods to modern ML, using sine function calculation as an example and implementing it in Kotlin.

An introduction to building a minimal automatic differentiation package in Julia, focusing on explicit chain rules and the Julia AD ecosystem.
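
The article works in Julia. Purely as an illustration of the "explicit chain rules" idea, here is a minimal reverse-mode sketch transposed into Python. The `Var` class and the `sin` helper are hypothetical names, not the article's API. Each operation records its inputs together with the local derivative from its chain rule, and `backward` accumulates gradients in reverse topological order.

```python
import math


class Var:
    """Scalar node in a computation graph (hypothetical minimal AD class)."""

    def __init__(self, value, parents=()):
        self.value = value
        self.grad = 0.0
        # parents: tuples of (input_node, local_derivative d(self)/d(input))
        self.parents = parents

    def __add__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        # Chain rule for addition: both local derivatives are 1
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        # Chain rule for multiplication: d(ab)/da = b, d(ab)/db = a
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

    def backward(self):
        # Topological order guarantees that a node's gradient is complete
        # before it is pushed back to that node's inputs.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in node.parents:
                parent.grad += local * node.grad


def sin(x):
    # Explicit rule: d sin(x)/dx = cos(x)
    return Var(math.sin(x.value), ((x, math.cos(x.value)),))


x, y = Var(2.0), Var(3.0)
z = x * y + sin(x)
z.backward()
print(x.grad, y.grad)  # dz/dx = y + cos(x), dz/dy = x
```

Storing local derivatives at construction time keeps every rule explicit and local. The cost is that the whole graph is kept in memory until `backward` runs.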

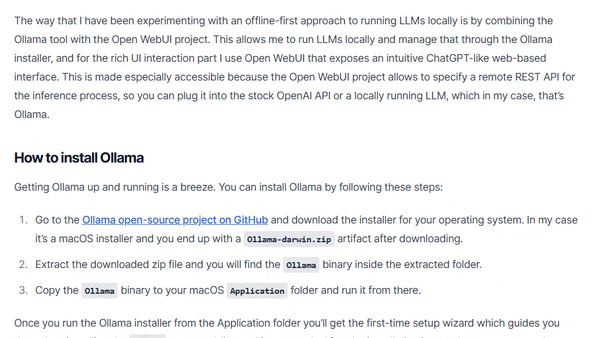

A guide to running Large Language Models (LLMs) locally for inference, covering tools such as Ollama and Open WebUI for privacy and cost control.

A guide to designing a reliable and valid interview process for hiring machine learning and AI engineers, covering technical skills, data literacy, and interview structure.

Explores Java's current and future capabilities in AI/ML development, challenging the notion that Java is unsuitable for AI tasks.

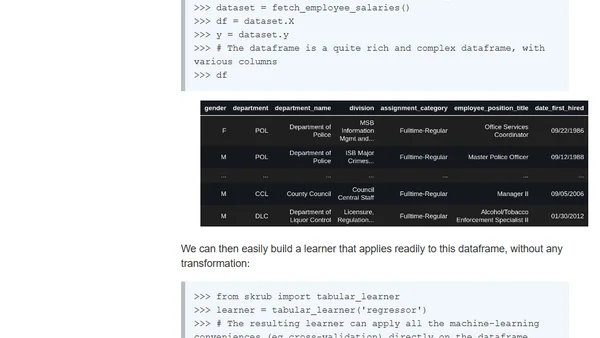

Announces skrub 0.2.0, a release that simplifies machine learning on complex dataframes with new features such as `tabular_learner`.

Explains the key difference between AI models and algorithms, using linear regression and ordinary least squares (OLS) as examples.
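
To make the distinction concrete, here is a minimal NumPy sketch that is not taken from the article. `predict` is the model: a linear function whose behavior is fixed once the weights `w` are chosen. `fit_ols` and `fit_gradient_descent` are two different algorithms that choose `w` for that same model under squared error. Gradient descent is included only for contrast. Its learning rate and step count are arbitrary assumptions.

```python
import numpy as np


def predict(X, w):
    # The *model*: a linear function of the features, parameterized by w
    return X @ w


def fit_ols(X, y):
    # One *algorithm* for choosing w: ordinary least squares
    w, *_ = np.linalg.lstsq(X, y, rcond=None)
    return w


def fit_gradient_descent(X, y, lr=0.1, steps=5000):
    # A different algorithm that minimizes the same mean squared error iteratively
    w = np.zeros(X.shape[1])
    n = len(y)
    for _ in range(steps):
        grad = 2.0 / n * X.T @ (X @ w - y)
        w -= lr * grad
    return w


rng = np.random.default_rng(0)
X = np.column_stack([np.ones(100), rng.normal(size=100)])  # intercept + one feature
y = X @ np.array([1.0, 2.0]) + 0.1 * rng.normal(size=100)

w_ols = fit_ols(X, y)
w_gd = fit_gradient_descent(X, y)
print(w_ols, w_gd)  # two algorithms, (numerically) the same fitted model
```

Both algorithms end up at the same fitted model. This is the point of the distinction: replacing the algorithm changes how the model is fitted, not what the model is.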

Analyzes public reactions to AI bias claims, contrasting them with responses to traditional software bugs, using a viral example.

Discusses the spin-off of scikit-learn's open-source development from Inria into Probabl, a new mission-driven company, with a focus on sustainable funding and growth.

A guide to using Microsoft's Phi-3 small language model with C# and Semantic Kernel for local AI applications.

A summary of a talk on applying Large Language Models (LLMs) to build and deploy recommendation systems at scale, presented at Netflix's PRS workshop.

A developer's experience using ChatGPT 4 as a tool for exploring and learning new technical concepts, from programming to machine learning.

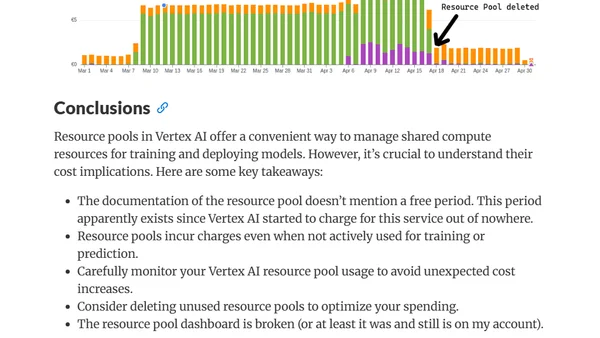

A developer's investigation into unexpected cost spikes from a Vertex AI resource pool, with a cautionary guide on managing cloud ML resources.

Explains kernel ridge regression and how to scale it to large datasets by approximating the RBF kernel with random Fourier features.
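
Below is a minimal NumPy sketch of the standard random Fourier feature construction. It is not the article's code. The exact solver builds the n-by-n kernel matrix. The approximate solver maps inputs to D features z(x) = sqrt(2/D) cos(W^T x + b) with W drawn from N(0, sigma^-2 I) and b drawn from U[0, 2π], and then runs ordinary ridge regression. The bandwidth `sigma`, the penalty `lam`, the feature count `D`, and the toy sine data are all illustrative assumptions.

```python
import numpy as np


def rbf_kernel(A, B, sigma):
    # Exact RBF kernel: k(a, b) = exp(-||a - b||^2 / (2 sigma^2))
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2 * sigma**2))


def fit_krr_exact(X, y, lam, sigma):
    # Dual solution alpha = (K + lam I)^-1 y: O(n^3) time, O(n^2) memory
    K = rbf_kernel(X, X, sigma)
    alpha = np.linalg.solve(K + lam * np.eye(len(X)), y)
    return lambda X_new: rbf_kernel(X_new, X, sigma) @ alpha


def rff_map(X, W, b):
    # z(x) . z(x') approximates k(x, x') for the RBF kernel
    return np.sqrt(2.0 / W.shape[1]) * np.cos(X @ W + b)


def fit_krr_rff(X, y, lam, sigma, D, rng):
    # Frequencies sampled from the RBF kernel's spectral density N(0, sigma^-2 I)
    W = rng.normal(scale=1.0 / sigma, size=(X.shape[1], D))
    b = rng.uniform(0.0, 2 * np.pi, size=D)
    Z = rff_map(X, W, b)
    # Primal ridge regression on D features: O(n D^2 + D^3) instead of O(n^3)
    w = np.linalg.solve(Z.T @ Z + lam * np.eye(D), Z.T @ y)
    return lambda X_new: rff_map(X_new, W, b) @ w


rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.normal(size=200)
X_grid = np.linspace(-2.5, 2.5, 50)[:, None]

pred_exact = fit_krr_exact(X, y, lam=0.1, sigma=0.5)(X_grid)
pred_rff = fit_krr_rff(X, y, lam=0.1, sigma=0.5, D=1000, rng=rng)(X_grid)
```

The cost of the approximate solver grows linearly in n for fixed D. This is what makes the approach practical when n is far larger than the number of features you can afford.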

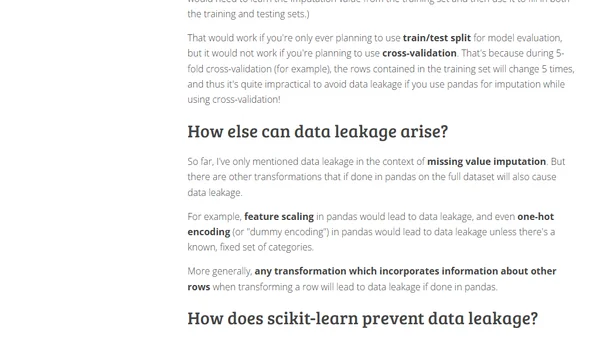

Explains data leakage in ML, why it is harmful, and how to prevent it when using pandas and scikit-learn for tasks such as missing-value imputation.
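
As a minimal sketch of the imputation case, one common scikit-learn pattern is shown below. It is not necessarily the article's approach. The leaky version fills missing values with a mean computed over every row, including future test rows. The leak-free version places `SimpleImputer` inside a pipeline, so the mean is learned from the training split only. The toy column names `x` and `y` and the synthetic data are assumptions.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
x = rng.normal(size=200)
df = pd.DataFrame({"x": x, "y": 2 * x + rng.normal(scale=0.5, size=200)})
df.loc[rng.choice(200, size=40, replace=False), "x"] = np.nan  # 20% missing

# Leaky: the fill value uses every row, so test-set statistics reach training
df_leaky = df.assign(x=df["x"].fillna(df["x"].mean()))

# Leak-free: split first, then let the pipeline fit the imputer on training rows only
X_train, X_test, y_train, y_test = train_test_split(
    df[["x"]], df["y"], test_size=0.25, random_state=0
)
model = make_pipeline(SimpleImputer(strategy="mean"), LinearRegression())
model.fit(X_train, y_train)
score = model.score(X_test, y_test)  # R^2 on rows the imputer never saw
```

Keeping preprocessing inside the pipeline also carries over to cross-validation. Each fold then refits the imputer on its own training portion.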

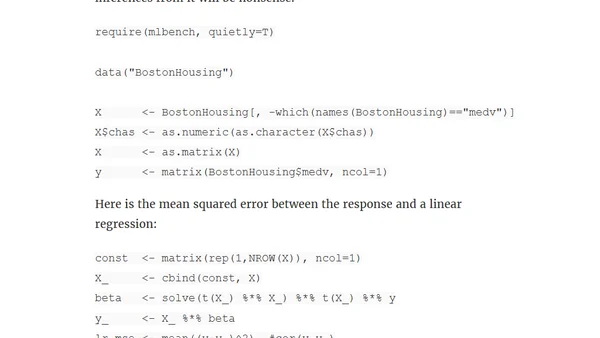

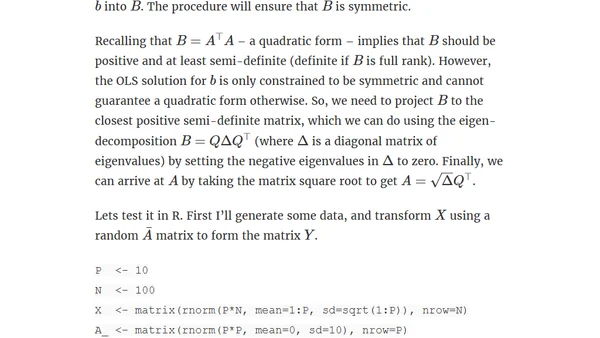

Explores a closed-form solution for linear metric learning, deriving a transformation matrix to align feature distances with response distances.