Kimi K2.5: Visual Agentic Intelligence

Kimi K2.5 is a new multimodal AI model with visual understanding and a self-directed agent swarm for complex, parallel task execution.

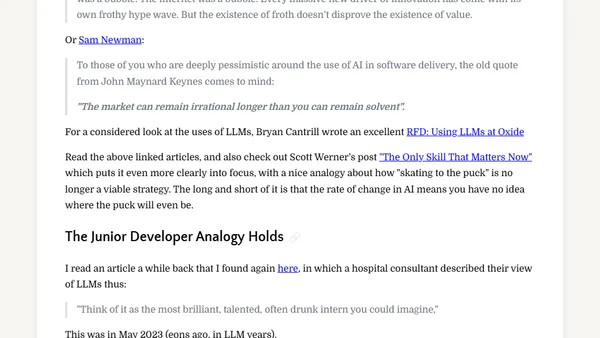

A developer reflects on the dual nature of LLMs in 2026, highlighting their transformative potential and the societal risks they create.

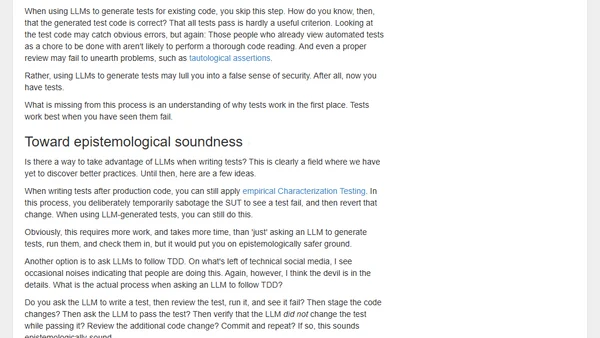

An analysis of using AI to generate automated tests, arguing it misses the point of TDD and reduces testing to a superficial ceremony.

A software engineer's personal journey from skepticism to embracing AI coding assistants, examining the tribalistic debates surrounding LLMs in development.

An overview of inference-time scaling methods for improving LLM reasoning, categorizing techniques like chain-of-thought and self-consistency.

An overview of inference-time scaling methods for improving LLM reasoning, categorizing techniques and highlighting recent research.

Prompt engineering is evolving from a niche skill to a core capability, similar to spreadsheet proficiency, as AI adoption grows across industries.

Explores developer context-switching challenges and workflow changes when integrating AI coding agents like Claude and Gemini into software engineering.

Explores how companies can effectively market to developers on Reddit, discussing pitfalls, advertising strategies, and platform culture.

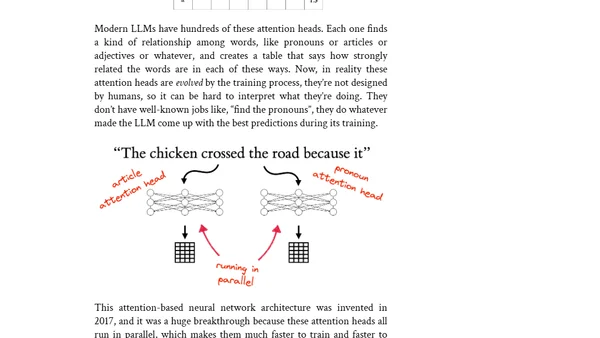

A computer scientist demystifies how large language models (LLMs) work, tracing their history from the Turing Test and ELIZA to modern AI.

A conversation on how LLMs help shape software abstractions and manage cognitive load in building systems that survive change.

Explores how GenAI tools like ChatGPT are harming the online communities and open-source projects they were trained on, and discusses potential solutions.

![[notes] Thinking About Anything Besides the Big Thing Right Now](https://alldevblogs.blob.core.windows.net/thumbs/article-c4ebb3c4bdb9-full-1064b50f.webp)

A software engineer explains their decision to avoid writing about dominant tech trends like LLMs, focusing instead on other important and enduring topics in the field.

Explores the AI-driven evolution of software engineering from autocomplete to autonomous agents, shifting the developer's role from coder to orchestrator.

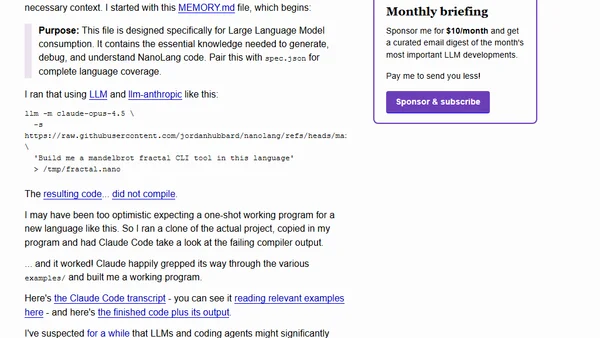

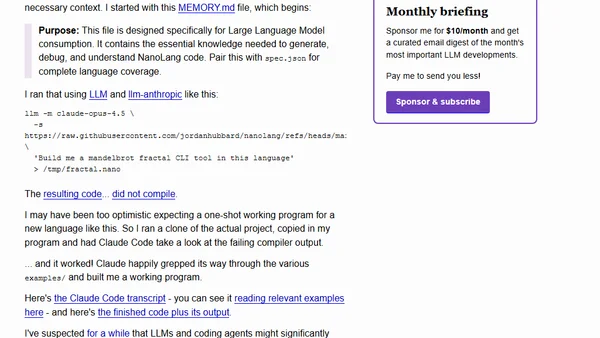

Explores NanoLang, a new programming language designed for LLMs, and tests AI's ability to generate working code in it.

Discusses how LLMs fit into a software developer's career, emphasizing the enduring importance of understanding fundamental computer science concepts.

Introduces Open Responses, a vendor-neutral JSON API standard for hosted LLMs, based on OpenAI's Responses API and backed by major industry partners.

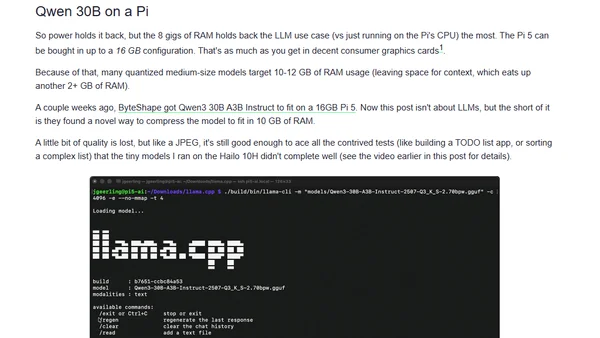

Raspberry Pi's new AI HAT+ 2 adds an NPU and 8GB RAM for local AI/LLM tasks, but testing shows performance and use-case limitations.

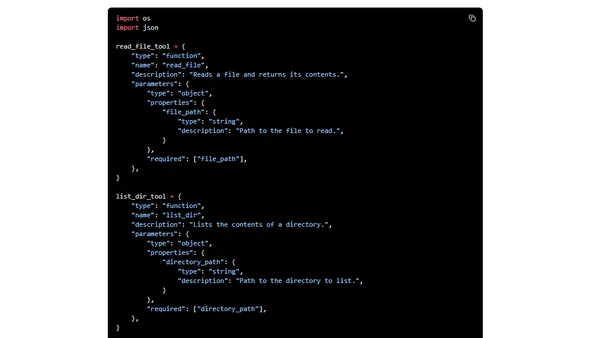

A guide to building AI agents using the Gemini Interactions API, covering core concepts and a step-by-step CLI implementation.