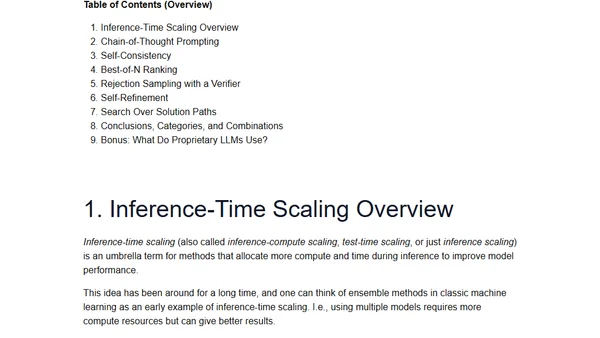

Categories of Inference-Time Scaling for Improved LLM Reasoning

This technical article categorizes and explains inference-time scaling methods used to enhance the reasoning and accuracy of large language models (LLMs). It discusses techniques such as chain-of-thought prompting, self-consistency, and rejection sampling, based on the author's research and experimentation for a book on building reasoning models. The content is aimed at practitioners and researchers in AI and machine learning.
