Retrieval-Augmented Generation (RAG) simply explained

A simple explanation of Retrieval-Augmented Generation (RAG), covering its core components: LLMs, context, and vector databases.

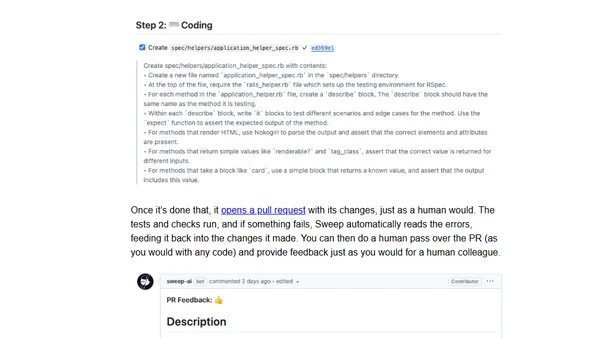

A developer's experience using Sweep, an LLM-powered tool that generates pull requests to write unit tests and fix code in a GitHub workflow.

An analysis of ChatGPT's knowledge cutoff date, testing its accuracy on celebrity death dates to understand the limits of its training data.

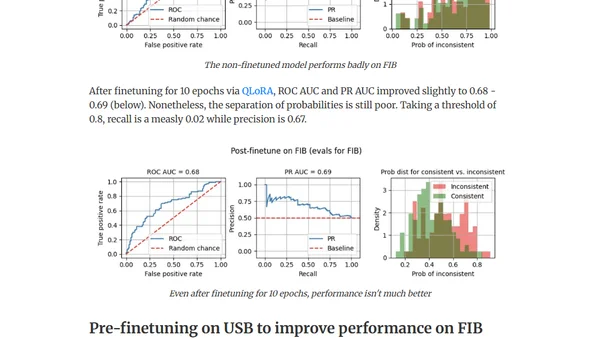

Explores using out-of-domain data to improve LLM finetuning for detecting factual inconsistencies (hallucinations) in text summaries.

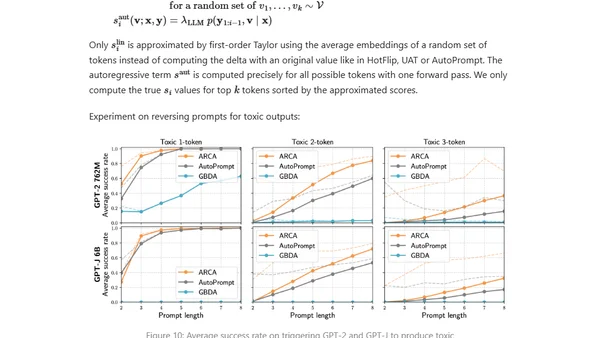

Explores adversarial attacks and jailbreak prompts that can make large language models produce unsafe or undesired outputs, bypassing safety measures.

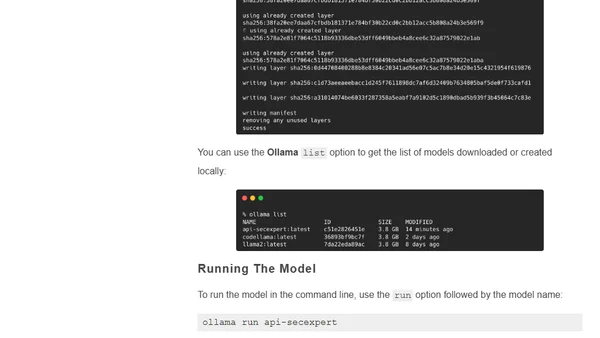

A guide on using Ollama's Modelfile to create and deploy a custom large language model (LLM) for specific tasks, like an API security assistant.

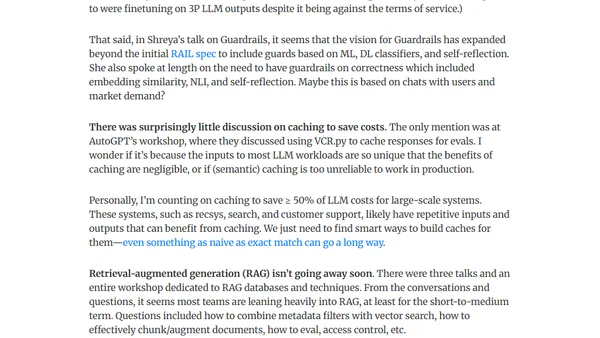

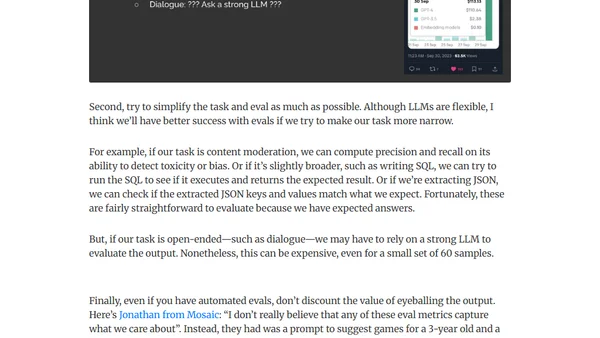

Key takeaways from the AI Engineer Summit 2023, focusing on challenges in LLM deployment like evaluation methods and serving costs.

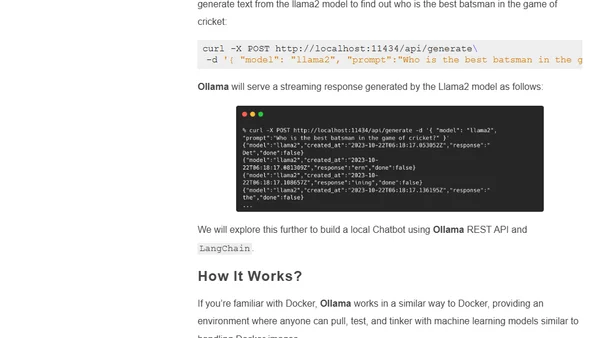

A guide to using Ollama, an open-source CLI tool for running and customizing large language models like Llama 2 locally on your own machine.

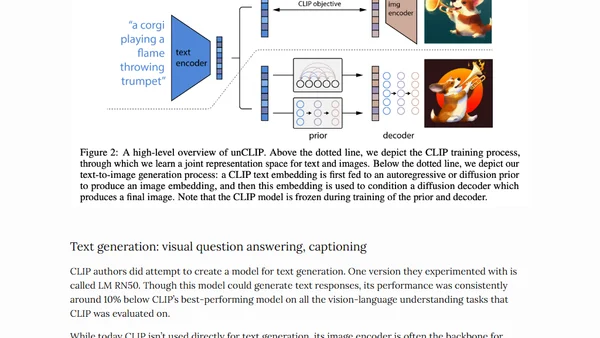

An in-depth exploration of Large Multimodal Models (LMMs), covering their fundamentals, key architectures like CLIP and Flamingo, and current research directions.

A summary of a keynote talk on essential building blocks for production LLM systems, covering evaluations, RAG, and guardrails.

A developer's weekly learning log covering Azure Machine Learning, Prompt Flow, Microsoft Fabric, Copilot, and an LLM hallucination paper.

Explains why traditional debugging fails for LLMs and advocates for observability-driven development to manage their non-deterministic nature in production.

Strategies for improving LLM performance through dataset-centric fine-tuning, focusing on instruction datasets rather than model architecture changes.

Explores dataset-centric strategies for fine-tuning LLMs, focusing on instruction datasets to improve model performance without altering architecture.

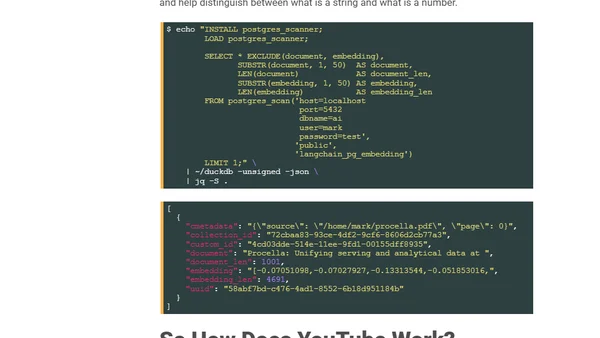

A technical guide on using an LLM (Platypus2) with LangChain and pgvector to analyze YouTube's Procella database paper.

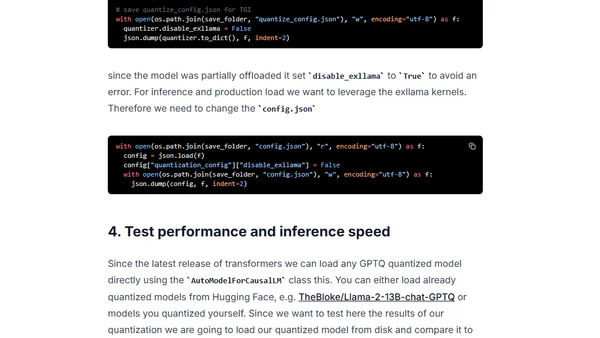

A guide to using GPTQ quantization with Hugging Face Optimum to compress open-source LLMs for efficient deployment on smaller hardware.

A critical analysis of the machine learning bubble, arguing its lasting impact will be a proliferation of low-quality, automated content and services, not true AGI.

A developer's weekly learning log covering Power BI data refresh, LLM architectures, Azure OpenAI costs, AI news, Python in Excel, and Azure SQL updates.

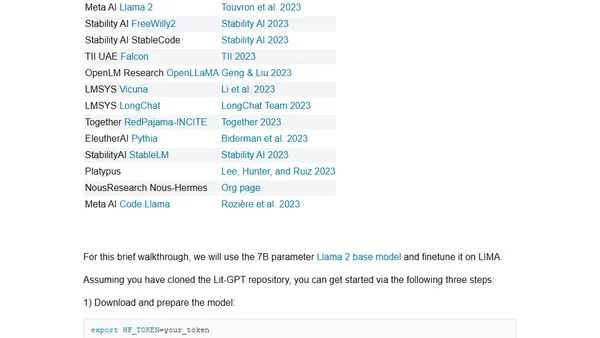

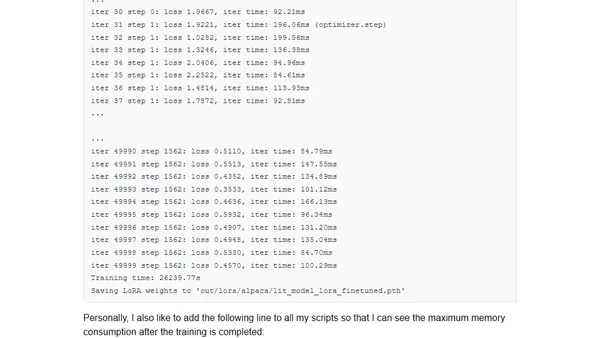

A guide to participating in the NeurIPS 2023 LLM Efficiency Challenge, focusing on efficient fine-tuning of large language models on a single GPU.

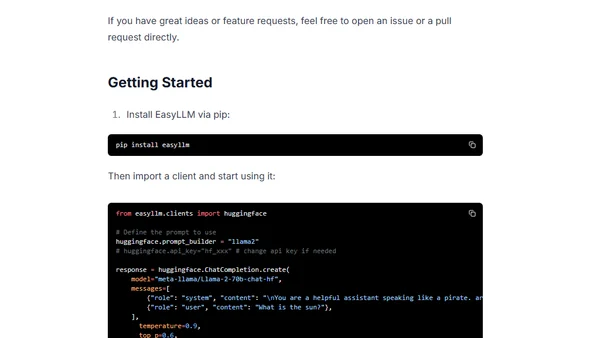

Introduces EasyLLM, an open-source Python package for streamlining work with open large language models via OpenAI-compatible clients.