New Serverless Transformers using Amazon SageMaker Serverless Inference and Hugging Face

Guide to deploying Hugging Face Transformer models using Amazon SageMaker Serverless Inference for cost-effective ML prototypes.

Philipp Schmid is a Staff Engineer at Google DeepMind, building AI Developer Experience and DevRel initiatives. He specializes in LLMs, RLHF, and making advanced AI accessible to developers worldwide.

189 articles from this blog

Guide to deploying Hugging Face Transformer models using Amazon SageMaker Serverless Inference for cost-effective ML prototypes.

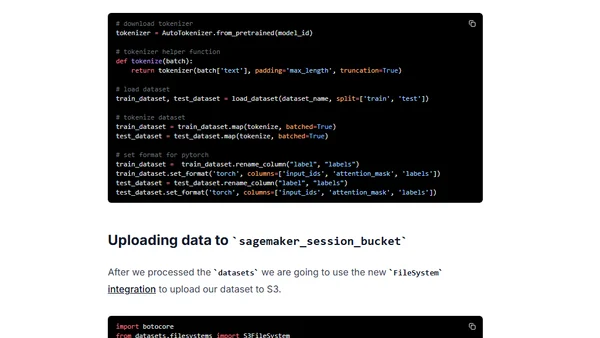

Guide to fine-tuning a Hugging Face BERT model for text classification with Amazon SageMaker, using the new SageMaker Training Compiler to accelerate training.

Learn how to integrate the Hugging Face Hub as a model registry with Amazon SageMaker for MLOps, including training and deployment.

A guide to attending AWS re:Invent 2021 machine learning and NLP sessions remotely, featuring keynotes and top session recommendations.

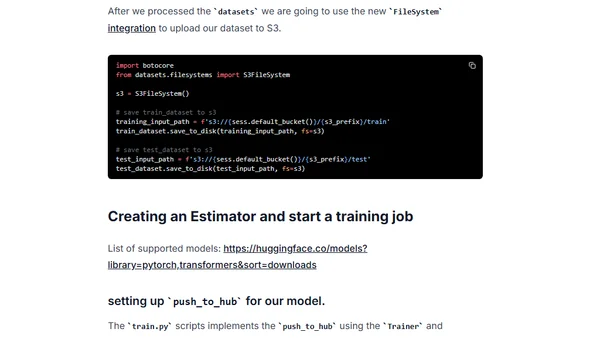

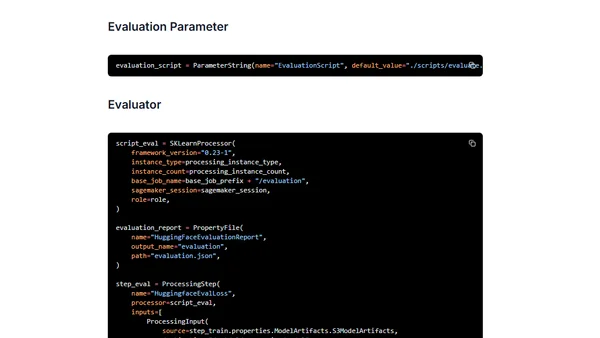

Guide to building an end-to-end MLOps pipeline for Hugging Face Transformers using Amazon SageMaker Pipelines, from training to deployment.

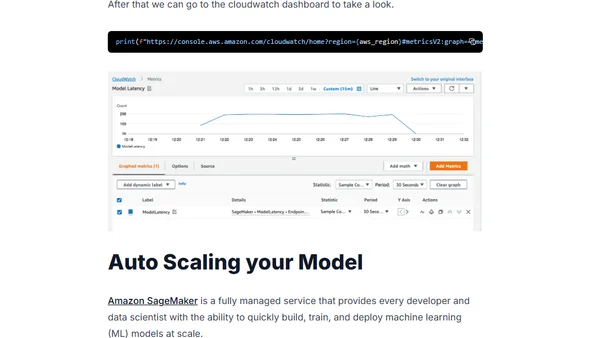

A guide to deploying and auto-scaling Hugging Face Transformer models for real-time inference using Amazon SageMaker.

A tutorial on deploying the BigScience T0_3B language model to AWS and Amazon SageMaker for production use.

A tutorial on deploying Hugging Face Transformer models to production using Amazon SageMaker, AWS Lambda, and the AWS CDK for scalable, secure inference endpoints.

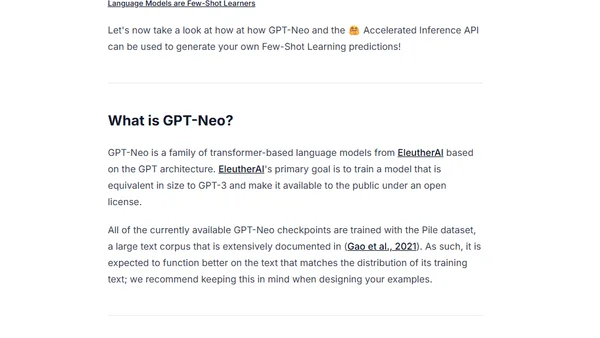

A guide to implementing few-shot learning for NLP tasks using the GPT-Neo language model and the Hugging Face Inference API.

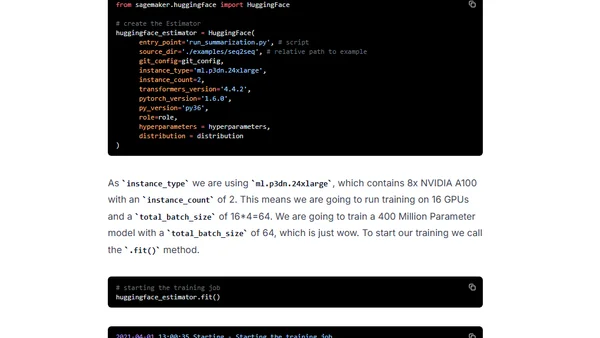

A tutorial on using Hugging Face Transformers and Amazon SageMaker for distributed training of BART/T5 models on a text summarization task.

Tutorial on building a multilingual question-answering API using XLM-RoBERTa, Hugging Face, and AWS Lambda with container image support.

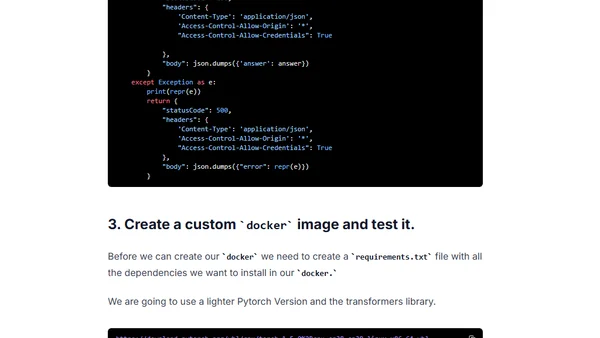

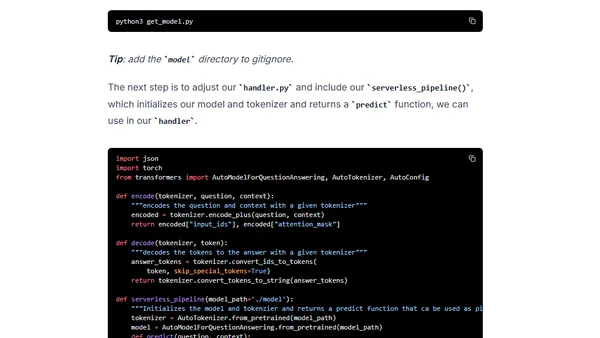

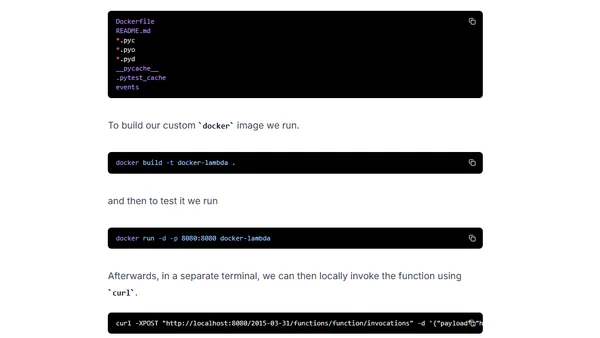

A tutorial on deploying a BERT question-answering API using Hugging Face Transformers, AWS Lambda with Docker container support, and the Serverless Framework.

Guide to using custom Docker images as runtimes for AWS Lambda, including setup with AWS SAM and ECR.

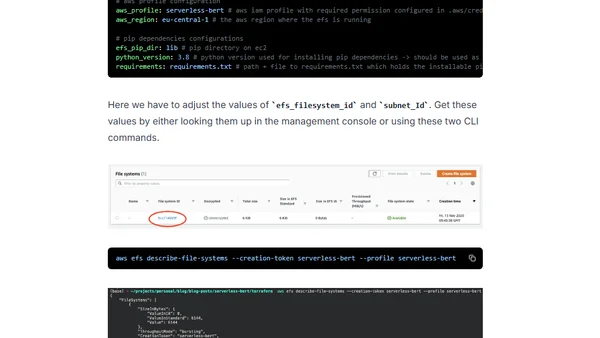

A tutorial on building a serverless question-answering API using BERT, Hugging Face, AWS Lambda, and EFS to overcome dependency and model load limitations.

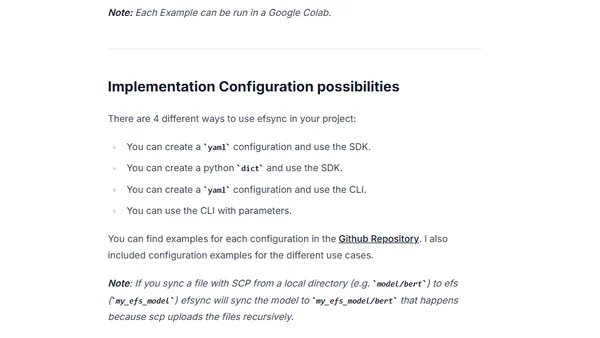

Introducing efsync, an open-source MLOps toolkit for syncing dependencies and model files to AWS EFS for serverless machine learning.

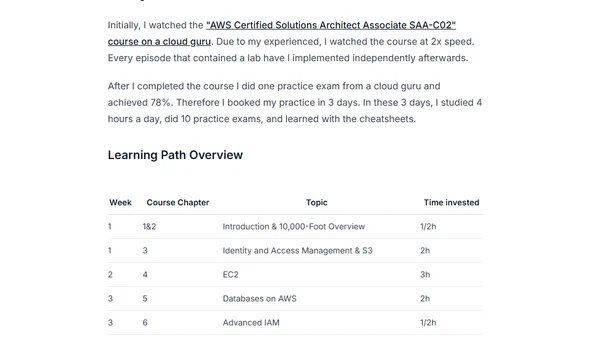

A machine learning engineer shares his 7-week journey and study plan to pass the AWS Certified Solutions Architect - Associate exam.

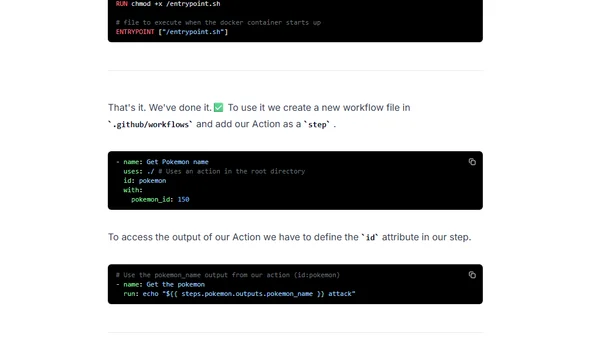

A tutorial on creating a custom GitHub Action in four steps, including defining inputs/outputs and writing a bash script.

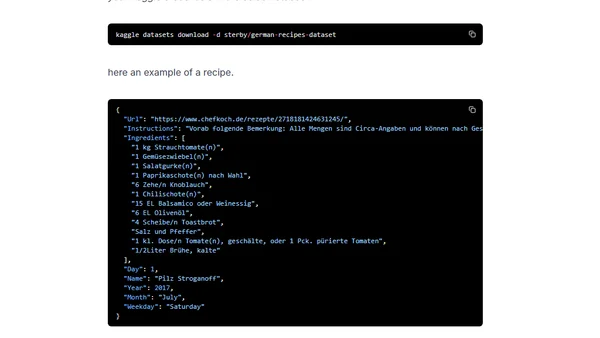

A tutorial on fine-tuning a German GPT-2 language model for text generation on a dataset of recipes, using Hugging Face's Transformers library.

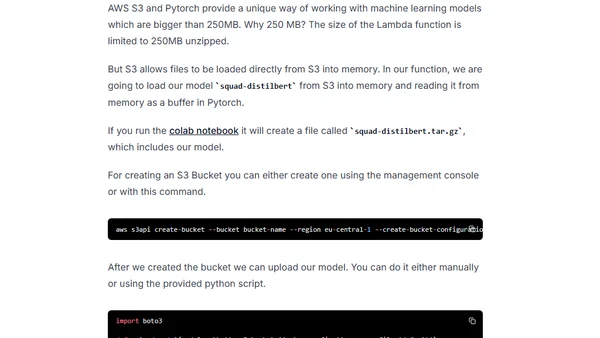

A tutorial on integrating AWS EFS storage with AWS Lambda functions using the Serverless Framework, focusing on overcoming storage limits for serverless applications.

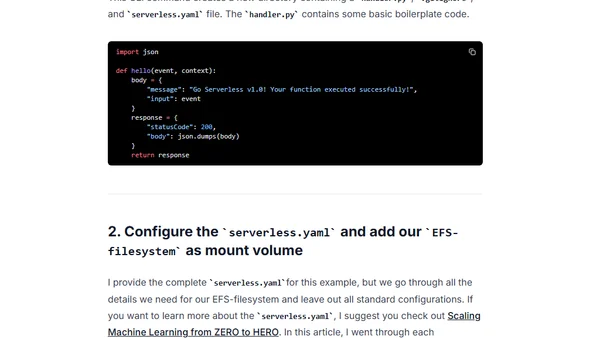

A tutorial on deploying a BERT question-answering model in a serverless environment using Hugging Face Transformers and AWS Lambda.